Expedition csv logs stuck in pending

06-26-2018 07:43 AM - edited 06-27-2018 07:55 AM

Hi everyone,

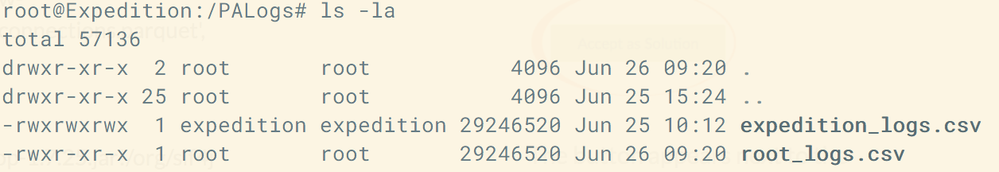

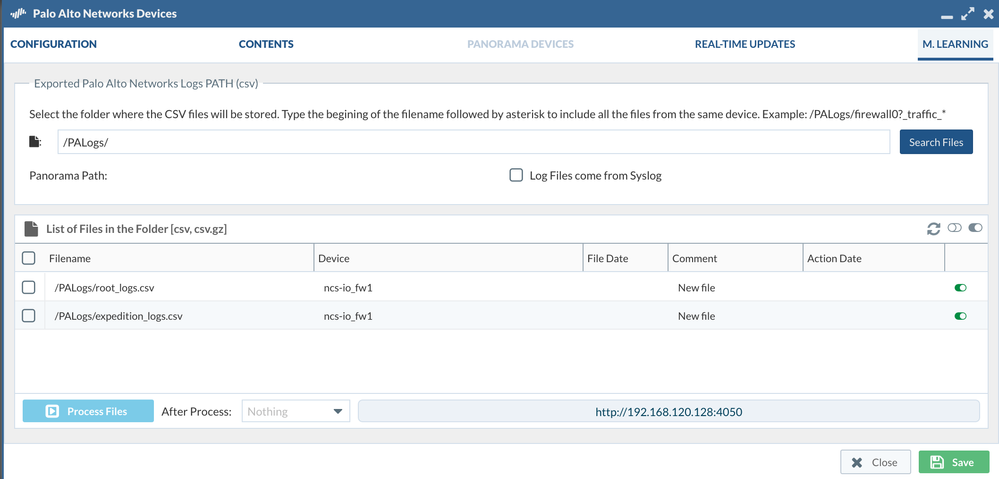

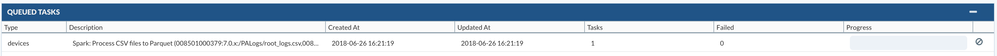

I have added firewall logs from our Palo Alto 5000 series to /PALogs on the Expedition VM. I copied the original .csv so that there are two files: a duplicate owned by root and the original owned by expedition. Both files appear in Devices > M.LEARNING. When I run Process Files, the job remains in pending and nothing happens. Any ideas what the issue may be?

08-08-2018 06:05 AM

Hi,

Be sure your Expedition has DNS and a default gateway correctly configured or, if you need to use a proxy, check that the proxy is correctly configured.

If a Palo Alto Networks firewall sits in front of your Internet connection, make sure the paloalto-updates App-ID is allowed for your Expedition instance.

If DNS and the default gateway are configured, you can reach the Internet, paloalto-updates is allowed, and you still have problems, please send us an email at fwmigrate at paloaltonetworks dot com to arrange a Zoom session with us.
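
The DNS and reachability checks described above can be sketched in a few lines of Python run on the Expedition VM. This is only an illustration: the function names are made up for this example, and the thread does not say which hostname Expedition contacts, so the host to test is left as a parameter.

```python
import socket


def resolves(hostname, resolver=socket.getaddrinfo):
    """Return True if the hostname resolves to at least one address."""
    try:
        return len(resolver(hostname, 443)) > 0
    except OSError:  # socket.gaierror is a subclass of OSError
        return False


def can_connect(hostname, port=443, timeout=5.0,
                connector=socket.create_connection):
    """Return True if a TCP connection to hostname:port succeeds."""
    try:
        with connector((hostname, port), timeout=timeout):
            return True
    except OSError:  # covers refused connections and timeouts
        return False
```

Calling `resolves(host)` and then `can_connect(host)` for a host you expect Expedition to reach separates a DNS problem from a routing or firewall problem. The `resolver` and `connector` parameters exist only so the functions can be exercised without network access.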

08-13-2018 02:20 PM

Got it... it was a firewall issue. Thank you, Alestevez.

09-04-2018 07:59 AM

Thanks to Albert, we have a fix. Edit the file /etc/mysql/my.cnf and comment out the line that binds MySQL to the localhost address:

bind-address = 127.0.0.1 ---> #bind-address = 127.0.0.1

Then restart the services.
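
For anyone who prefers to script that edit, here is a minimal sketch of the text transformation only. The function name is hypothetical, and writing the result back to /etc/mysql/my.cnf (after taking a backup) and restarting the services is left to you.

```python
import re


def comment_out_bind_address(config_text):
    """Prefix every active bind-address line with '#'.

    Lines that are already commented out are left untouched, so the
    function can be applied more than once without changing the result.
    """
    pattern = re.compile(r"^([ \t]*)(bind-address[ \t]*=.*)$", re.MULTILINE)
    return pattern.sub(r"\1#\2", config_text)


sample = "[mysqld]\nbind-address = 127.0.0.1\nport = 3306\n"
print(comment_out_bind_address(sample))
```

The sample string is invented for the example; only the bind-address line comes from the post above.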

12-31-2018 05:00 AM

Folks-

In my case I changed the owner so I could SCP the firewall logs to Expedition as I was getting a permissions failure. All worked as expected and I can see the file on Expedition in the PALogs directory from my SSH shell.

However the file is never visible via the web interface so it cannot be processed.

I updated Expedition to the latest release, but when I do this I get a mysql reconnect error, even after editing the file to change reconnect to ON (see https://live.paloaltonetworks.com/t5/Expedition-Articles/Modifying-mysqli-reconnect-value/ta-p/21922...). I still do not see the file in the web interface.

Any ideas?

12-31-2018 09:32 AM

12-31-2018 09:49 AM

The file is sent directly by the firewall, and its serial number is the one configured in Expedition. Panorama is not involved.

To get SCP to work I need to change the owner to expedition. Files then transfer without an issue, but they never show up under M.Learning to process via the web interface (the files are on Expedition and visible via the SSH shell).

Rich

12-31-2018 10:11 AM

If you are certain that the serial number is correct, then I would suggest checking the following.

- The file is readable by www-data.

www-data needs rights to read the file (it does not need to own it) and rights to reach the enclosing folder where the file is located. That is, if the file is /my/path/last_day.csv, www-data must be able to get into /my/path. Verify that this is the case.

This is why I normally suggest placing the logs in /PALogs, as it is then easy to see that expedition has write rights on the folder, and that www-data has rights to access it and read the files inside.

- The provided path is correct.

Following the example above with a file in /my/path/last_day.csv, make sure the path you provide to search with is, for instance,

/my/path/*

Make sure there are no spaces in the given path and, once you have confirmed that the path is correct, do not forget to click the "Save" button so Expedition remembers the path for future checks.

- The log has content.

If the logs sent by the firewall do not have any content, Expedition can't verify that the file actually belongs to the firewall with the given serial number.

When could this happen?

If you have a pair of firewalls in HA, they may have switched from primary to secondary without you being aware of it. In that case, the primary firewall (assuming it is the one configured to send the logs) is sending empty traffic logs, because the secondary is the one processing traffic.

Make sure to set up both the primary and the secondary firewall to send their traffic logs to Expedition (we suggest sending the logs to the same folder), and use the HA serial field to provide the serial number of the secondary firewall. We can handle data from both firewalls if their serials are provided.

- The logs have a csv or gz extension.

Expedition can process traffic logs stored as comma-separated values (the default), even if the files have been compressed with gz (which reduces a file to about 10% of its original size). However, if you 7z the file or change the extension, we won't consider the file for processing.

I hope some of those points help.
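
Several of the points above (path pattern, extension, content, readability) can be pre-checked from a shell on the Expedition VM. The following is a rough sketch, not Expedition's own logic: the function name is hypothetical, the extension match is assumed to be case-sensitive, and "has content" is approximated as "is not zero bytes". Run it as www-data for the readability check to be meaningful.

```python
import glob
import os

SUPPORTED_EXTENSIONS = (".csv", ".gz")


def candidate_logs(pattern):
    """Split files matching a glob pattern into accepted and rejected.

    Returns (accepted, rejected), where rejected holds (path, reason) pairs.
    """
    accepted, rejected = [], []
    for path in sorted(glob.glob(pattern)):
        if not os.path.isfile(path):
            continue  # skip sub-directories and other non-files
        if not path.endswith(SUPPORTED_EXTENSIONS):
            rejected.append((path, "unsupported extension"))
        elif os.path.getsize(path) == 0:
            rejected.append((path, "empty file"))
        elif not os.access(path, os.R_OK):
            rejected.append((path, "not readable by current user"))
        else:
            accepted.append(path)
    return accepted, rejected
```

For example, `candidate_logs("/PALogs/*")` lists which files pass these basic checks and why the others do not. It does not verify the serial number inside the logs.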

12-31-2018 10:29 AM

There is only a single firewall involved. SCP from the firewall does not work unless I go into the CLI and change the owner of /PALogs in Expedition to expedition.

Right now, under Settings, the Temporary Data Structure Folder is set to /opt/ml (this is the OVA install default). Do I need to change this to /PALogs for the files to show up in the web interface?

Thank you, Rich

12-31-2018 10:39 AM

The Temporary Data Structure Folder is used for conversion, which will come after you have managed to "find" the original CSV files.

In the main screen at Expedition, you have health checks. One of them refers to the Temporary Data Structure folder and the rights to write inside. If the check passes, then you do not need to make changes on your /opt/ml folder (unless you prefer a different folder due to space limitations).

Going back to the CSV files inside /PALogs that can't be found: most probably you removed the rights for www-data to read that folder. Simply execute:

sudo chown expedition:www-data /PALogs

and later

sudo chmod -R 740 /PALogs

This makes the expedition user the owner of the folder, and the www-data group (which contains the www-data user) the group owner of the folder. Afterwards, the www-data group will have read rights on the folder, and expedition will have read-write-execute rights. If you prefer, you can use 770 instead of 740 to also give write rights to www-data, so that it can compress the files after processing or delete them (those are options when processing CSV files in Expedition).
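
To see what the two octal modes mentioned above grant to each class, a small sketch using Python's standard `stat` module can decode them. The helper name is made up for this example.

```python
import stat


def describe_mode(mode):
    """Split a permission mode such as 0o740 into owner/group/other strings."""
    text = stat.filemode(stat.S_IFDIR | mode)  # e.g. 'drwxr-----'
    return {"owner": text[1:4], "group": text[4:7], "other": text[7:10]}


print(describe_mode(0o740))  # {'owner': 'rwx', 'group': 'r--', 'other': '---'}
print(describe_mode(0o770))  # {'owner': 'rwx', 'group': 'rwx', 'other': '---'}
```

With 740 the group gets read but not execute, while 770 gives the group read, write and execute.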

12-31-2018 11:04 AM

Done and same issue.

expedition@pan-expedition:/PALogs$ ls -al

total 16

drwxr----- 2 expedition www-data 4096 Dec 31 11:45 .

drwxr-xr-x 24 root root 4096 Dec 28 11:50 ..

-rw-rw-r-- 1 expedition expedition 944 Dec 31 13:00 pan-panos-vm50_traffic_2018_12_31_last_calendar_day.csv

-rw-rw-r-- 1 expedition expedition 17 Dec 31 12:55 ssh-export-test.txt

The dashboard is clean: no errors to remediate. The system looks good; I just cannot get the files to show up in the web interface to process.

Rich
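
One thing that may be worth checking in a listing like the one above: on Linux, opening a file inside a directory requires the execute (search) bit on that directory for the accessing user, not only the read bit. The sketch below applies that rule to `ls -l` style entries. It is a simplification with hypothetical helper names: it ignores ACLs, root, and parent directories, and it assumes the www-data user belongs only to the www-data group.

```python
def permission_class(owner, group, user, user_groups):
    """Pick which triplet applies to user: owner first, then group, then other."""
    if user == owner:
        return "owner"
    if group in user_groups:
        return "group"
    return "other"


def triplet(perm_string, klass):
    """Extract the 3-character triplet from an ls mode string like 'drwxr-----'."""
    start = {"owner": 1, "group": 4, "other": 7}[klass]
    return perm_string[start:start + 3]


def can_read_inside(dir_entry, file_entry, user, user_groups):
    """True if user can open file_entry located in dir_entry.

    Each entry is a (perm_string, owner, group) tuple as shown by ls -l.
    """
    dir_bits = triplet(dir_entry[0],
                       permission_class(dir_entry[1], dir_entry[2], user, user_groups))
    file_bits = triplet(file_entry[0],
                        permission_class(file_entry[1], file_entry[2], user, user_groups))
    # 's' and 't' also imply that the execute/search bit is set
    return dir_bits[2] in "xst" and "r" in file_bits


folder = ("drwxr-----", "expedition", "www-data")
csv_file = ("-rw-rw-r--", "expedition", "expedition")
print(can_read_inside(folder, csv_file, "www-data", {"www-data"}))  # False
```

Under these assumptions the result is False because the group triplet on the folder is `r--`. Changing the folder entry to `drwxr-x---` makes the same call return True.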

12-31-2018 11:24 AM

TL;DR

One more thing, in the ML Settings, make sure the provided IP is the correct one.

Long Explanation

Even though it may sound strange, we designed Expedition so that it can be split into two parts: the config management part and the Machine Learning part.

In most cases (maybe 99%), both parts are the same machine. However, the management part needs to know how to reach the Machine Learning part for finding the CSV logs, converting them into parquet files, performing data analytics to generate rules, etc.

Why did we design it this way? We had in mind that some users may require a high-performance unit for data analytics, for instance with 24 CPUs and 256 GB of RAM. Maybe they even have a cluster for processing Spark jobs (which we use for Machine Learning). With that in mind, we started the design to untangle Expedition into a heavy part (that could be shared with other projects and performs the data analytics) and a light part that handles configurations and the rest of the Expedition features.
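
For the common single-machine case, one way to sanity-check the IP entered in the ML Settings is to test whether that address is actually assigned to the Expedition host. The sketch below does this by trying to bind a UDP socket to the address. The function name is hypothetical, and it says nothing about a split deployment where the Machine Learning part runs elsewhere.

```python
import ipaddress
import socket


def is_assigned_locally(ip_text):
    """Return True if ip_text is a valid IP that this host can bind to."""
    try:
        ip = ipaddress.ip_address(ip_text)
    except ValueError:
        return False  # not a well-formed IPv4/IPv6 address
    family = socket.AF_INET6 if ip.version == 6 else socket.AF_INET
    try:
        with socket.socket(family, socket.SOCK_DGRAM) as sock:
            sock.bind((ip_text, 0))  # port 0: let the OS pick a free port
        return True
    except OSError:
        return False  # typically "cannot assign requested address"
```

Run on the Expedition VM, `is_assigned_locally("192.168.55.120")` returning False would suggest that the configured address does not belong to this machine.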

12-31-2018 12:06 PM

It is: 192.168.55.120 (default IP address for LITB Expedition). Rich

01-09-2019 03:23 AM

If it still does not resolve the issue, please send us an email to fwmigrate at paloaltonetworks dot com, and we may try to do a live session to help you.

05-15-2019 01:31 PM

You didn't by chance fill up the drive on your Expedition VM, did you?
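
A quick way to check for a full disk from Python is sketched below. The helper names and the 5% threshold are arbitrary choices for this example, not Expedition defaults.

```python
import shutil


def free_fraction(path):
    """Return the free share (0.0 to 1.0) of the filesystem holding path."""
    usage = shutil.disk_usage(path)
    if usage.total == 0:
        return 0.0
    return usage.free / usage.total


def is_nearly_full(path, min_free_fraction=0.05):
    """True if less than min_free_fraction of the filesystem is free."""
    return free_fraction(path) < min_free_fraction
```

On the Expedition VM it could be pointed at the folders mentioned in this thread, for example `is_nearly_full("/PALogs")` or `is_nearly_full("/opt/ml")`.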
