Dataplane higher than usual. why??

03-16-2018 06:03 AM

Hi,

We noticed that the dataplane CPU on our PA-5050 (PAN-OS 7.1.12) has risen to 55%, when it normally sits at around 28%. I would like to know what is causing this increase. I don't know how to interpret the output of the commands below to work out why the dataplane is high.

show running resource-monitor

DP dp0:

Resource monitoring sampling data (per second):

CPU load sampling by group:

flow_lookup : 36%

flow_fastpath : 34%

flow_slowpath : 36%

flow_forwarding : 36%

flow_mgmt : 20%

flow_ctrl : 28%

nac_result : 38%

flow_np : 34%

dfa_result : 38%

module_internal : 36%

aho_result : 40%

zip_result : 38%

pktlog_forwarding : 28%

lwm : 0%

flow_host : 37%

CPU load (%) during last 60 seconds:

core 0 1 2 3 4 5 6 7 8 9 10 11

* 32 41 48 48 57 58 58 58 57 57 40

* 21 28 32 33 38 40 40 40 39 39 28

* 18 27 30 30 36 37 37 37 37 37 26

* 21 29 35 34 43 43 42 42 42 42 29

* 22 30 36 36 45 45 45 45 45 44 30

* 20 28 34 35 42 42 43 42 42 42 28

* 19 27 33 33 40 41 40 40 40 40 27

* 20 29 34 34 42 44 42 42 41 41 28

* 21 29 36 36 45 46 46 45 45 45 29

* 20 28 35 34 43 44 43 43 43 42 27

* 15 23 26 26 32 32 32 32 32 32 22

* 18 27 31 31 38 38 38 38 38 38 26

* 25 33 40 39 49 50 49 50 49 49 32

* 22 29 35 35 45 45 45 45 45 44 29

* 20 27 33 34 43 42 42 41 43 42 26

* 18 27 31 31 39 39 38 38 39 39 26

* 19 27 32 33 40 40 40 40 39 40 27

* 22 30 36 36 44 45 45 45 44 44 30

* 21 29 35 35 42 42 43 42 42 42 28

* 20 28 34 34 41 40 42 40 41 41 27

* 21 29 34 35 42 42 43 42 42 42 28

* 21 29 34 34 42 42 43 43 42 42 28

* 19 27 34 33 41 41 42 41 41 41 27

* 19 27 33 33 40 42 41 40 41 41 27

* 18 26 32 32 39 40 40 39 40 40 26

* 19 27 33 33 41 41 42 41 40 40 27

* 18 26 30 30 37 37 37 36 37 36 25

* 24 32 36 37 44 43 44 43 44 43 32

* 29 37 42 43 51 51 51 51 51 52 36

* 25 33 38 39 47 47 47 48 47 48 32

* 23 31 36 37 44 45 45 44 45 45 30

* 23 31 36 37 44 44 45 44 45 45 30

* 25 34 41 41 50 49 49 49 49 50 33

* 26 35 43 42 52 52 52 52 52 51 35

* 26 34 41 41 49 49 51 49 50 49 34

* 18 27 29 29 34 34 35 34 34 34 26

* 25 33 38 39 45 45 45 45 44 45 34

* 33 42 49 49 58 58 58 58 58 57 42

* 30 38 46 45 55 54 55 55 55 54 39

* 22 30 35 35 42 42 42 42 41 42 30

* 16 25 28 27 33 33 34 33 33 33 24

* 24 33 40 39 49 47 48 47 48 48 32

* 29 38 45 45 54 53 54 54 53 54 38

* 22 30 35 36 43 42 42 43 42 42 30

* 22 30 36 36 44 45 44 45 44 43 30

* 26 34 39 39 46 47 46 47 47 46 34

* 27 36 41 42 49 49 48 49 49 49 36

* 25 33 38 38 45 45 45 45 45 45 33

* 26 35 38 38 45 44 45 45 44 45 35

* 33 41 46 45 53 53 53 53 52 53 40

* 32 40 47 46 54 54 55 54 54 54 40

* 29 38 44 44 52 52 53 52 52 52 37

* 29 37 44 43 51 52 52 52 52 52 36

* 28 37 42 42 50 51 51 51 50 50 35

* 30 38 43 43 51 50 50 51 50 50 37

* 33 40 45 46 53 53 54 53 53 52 40

* 31 39 45 44 51 52 53 53 52 52 38

* 32 41 46 46 53 54 54 53 53 54 39

* 29 38 43 44 51 50 51 50 51 50 37

* 28 37 43 43 52 51 51 51 52 51 37

Resource utilization (%) during last 60 seconds:

session:

10 10 10 10 10 10 10 10 10 10 10 10 10 10 10

10 10 10 10 10 10 10 10 10 10 10 10 10 10 10

10 10 10 10 10 10 10 10 10 10 10 10 10 10 10

10 10 10 10 10 10 10 10 10 10 10 10 10 10 10

packet buffer:

0 0 0 0 0 0 0 0 0 0 0 0 0 0 0

0 0 0 0 0 0 0 0 0 0 0 0 0 0 0

0 0 0 0 0 0 0 0 0 0 0 0 0 0 0

0 0 0 0 0 0 0 0 0 0 0 0 0 0 0

packet descriptor:

0 0 0 0 0 0 0 0 0 0 0 0 0 0 0

0 0 0 0 0 0 0 0 0 0 0 0 0 0 0

0 0 0 0 0 0 0 0 0 0 0 0 0 0 0

0 0 0 0 0 0 0 0 0 0 0 0 0 0 0

packet descriptor (on-chip):

2 2 1 1 2 2 1 1 1 2 2 1 2 1 1

2 2 1 2 2 2 2 2 2 2 2 1 2 1 1

1 2 2 1 2 2 2 2 2 1 1 2 2 2 2

1 2 1 1 1 1 2 2 2 2 2 2 2 2 2

Resource monitoring sampling data (per minute):

CPU load (%) during last 60 minutes:

core 0 1 2 3 4 5 6 7

avg max avg max avg max avg max avg max avg max avg max avg max

* * 29 40 37 47 43 55 42 55 50 63 50 63 50 63

* * 27 36 36 43 40 51 40 51 47 59 47 59 47 58

* * 28 36 37 45 43 53 43 53 50 62 50 61 50 61

* * 23 29 31 38 36 44 36 44 43 52 44 52 44 52

* * 22 31 30 39 35 44 35 44 43 53 43 52 43 52

* * 21 27 29 35 35 42 35 43 43 51 43 52 43 52

* * 22 28 30 37 37 48 37 47 45 57 45 57 45 57

* * 22 29 29 36 35 44 35 44 42 52 42 52 42 52

* * 21 32 29 41 36 48 36 49 44 57 44 58 44 58

* * 21 26 29 34 36 43 36 42 44 51 44 52 43 51

* * 23 31 31 39 39 47 39 47 47 57 47 56 47 56

* * 21 31 29 38 35 44 35 44 43 53 43 54 43 53

* * 23 32 31 39 39 50 39 50 47 59 47 59 47 59

* * 23 32 31 39 39 50 39 49 47 58 47 59 47 57

* * 21 27 29 36 37 45 37 45 45 54 45 55 45 55

* * 21 30 31 41 37 48 37 47 44 55 44 55 44 55

* * 21 29 31 39 36 48 36 47 44 56 44 56 44 56

* * 22 29 32 40 38 46 38 47 45 56 45 56 45 55

* * 24 33 33 42 40 50 39 50 47 59 47 59 48 59

* * 23 32 32 41 40 51 40 52 49 61 49 61 49 62

* * 22 26 32 37 39 45 40 45 49 56 49 56 49 55

* * 23 28 33 37 41 46 41 47 50 56 50 57 50 55

* * 24 33 34 42 43 53 43 53 53 64 53 63 53 63

* * 24 29 33 39 42 51 42 51 51 60 51 61 51 61

* * 24 31 33 40 43 52 43 52 51 62 52 62 51 62

* * 21 27 30 37 39 49 39 49 48 59 48 59 48 59

* * 21 31 30 39 38 48 38 47 47 57 47 57 47 57

* * 24 32 33 42 41 54 41 53 50 61 50 62 49 61

* * 23 30 32 40 39 49 39 48 48 58 48 58 48 57

* * 23 29 31 37 38 47 38 45 46 55 46 55 46 56

* * 20 28 27 36 33 40 33 40 41 47 41 48 41 48

* * 21 28 29 37 37 47 37 46 45 56 45 57 45 56

* * 19 25 27 33 34 41 34 41 42 50 42 50 42 50

* * 18 26 26 35 32 42 32 42 39 53 40 52 40 52

* * 18 24 26 33 33 42 33 40 41 50 41 51 41 50

* * 20 26 28 34 35 43 35 43 43 52 43 52 43 53

* * 19 30 26 37 33 45 33 44 41 51 41 52 41 52

* * 18 28 25 36 32 44 32 44 40 52 40 53 40 53

* * 17 25 25 34 31 42 31 42 38 51 39 51 38 51

* * 18 22 25 31 31 40 31 39 39 48 39 48 39 48

* * 18 28 26 36 32 44 32 44 40 52 40 51 40 52

* * 20 25 27 33 33 42 33 42 41 51 41 51 41 51

* * 20 27 28 35 34 41 34 41 42 49 42 49 42 49

* * 19 28 28 37 34 46 34 45 42 55 42 56 42 56

* * 22 30 31 38 38 45 38 45 47 56 47 56 47 56

* * 22 29 32 39 39 48 39 47 48 58 48 58 48 57

* * 24 31 33 41 40 50 40 50 49 61 49 60 49 60

* * 27 39 36 47 43 56 43 55 52 66 52 66 52 66

* * 27 53 36 59 42 66 42 66 50 72 50 73 50 72

* * 26 34 35 43 40 49 40 49 49 59 49 59 49 59

* * 25 34 34 43 40 50 40 51 48 59 48 58 48 59

* * 23 29 32 39 37 46 37 45 45 55 45 55 45 54

* * 24 29 32 39 38 46 38 45 46 56 46 56 46 55

* * 24 31 32 40 38 48 38 48 46 57 46 57 46 57

* * 23 30 32 39 38 47 38 46 46 55 47 56 47 56

* * 23 31 32 40 38 49 37 49 45 59 45 59 45 59

* * 23 36 32 43 37 52 37 52 44 61 44 61 44 60

* * 25 33 34 42 41 51 41 52 49 61 49 61 48 60

* * 25 34 34 43 40 52 40 51 48 59 48 60 48 60

* * 27 36 36 45 41 50 41 50 49 58 49 58 49 58

core 8 9 10 11

avg max avg max avg max avg max

50 63 50 64 50 64 36 47

47 57 47 58 47 58 35 43

50 61 50 60 50 61 36 44

43 52 43 51 43 52 30 38

43 52 43 52 43 52 29 38

42 51 43 52 43 51 29 35

45 57 44 57 44 56 29 37

42 52 42 52 42 52 28 35

43 57 43 57 44 57 29 40

43 52 43 52 43 51 28 34

46 56 46 56 46 55 31 39

43 54 42 53 43 54 28 37

47 58 47 60 46 59 31 39

47 58 47 58 47 58 30 39

44 54 44 55 44 55 29 35

44 54 44 55 43 54 30 41

43 56 43 56 43 56 30 38

45 55 45 55 45 55 31 40

47 58 47 59 47 59 32 42

48 61 48 61 48 61 32 42

48 55 49 55 48 55 31 36

50 56 50 56 50 56 33 36

52 63 52 63 52 63 33 41

51 60 51 60 51 60 32 40

51 61 51 62 51 61 33 40

47 59 47 58 47 58 30 37

47 58 47 58 47 57 29 38

49 61 49 61 49 61 32 42

47 58 47 58 47 57 31 39

46 54 46 55 46 55 30 37

41 48 41 48 41 48 27 34

45 55 45 55 45 56 29 36

42 50 42 49 42 49 27 33

39 52 39 52 39 52 25 36

40 50 40 50 40 50 25 32

43 52 43 52 43 53 27 34

40 52 40 52 40 51 26 36

39 52 39 53 39 52 25 35

38 50 38 50 38 51 25 33

39 48 39 47 39 48 24 31

40 51 40 51 40 52 25 35

41 51 41 50 41 51 27 33

42 49 42 49 42 49 28 35

42 55 42 55 42 56 27 38

46 55 46 55 46 55 30 39

48 57 47 57 48 57 31 38

49 60 49 60 49 59 33 41

52 65 52 65 52 65 36 46

50 73 50 73 50 73 36 58

48 59 48 59 48 58 34 42

48 58 48 59 48 58 34 43

45 55 45 54 45 55 31 38

45 55 45 55 45 55 32 38

45 57 45 57 45 56 32 41

46 55 46 55 46 55 32 39

45 57 45 58 45 58 31 40

44 60 44 60 44 60 31 42

49 60 48 61 48 60 33 42

47 60 47 60 47 59 33 43

48 58 48 58 48 57 35 45

Resource utilization (%) during last 60 minutes:

session (average):

10 10 10 10 9 10 9 9 9 10 9 9 10 9 10

10 10 10 10 10 10 10 10 10 10 10 10 10 9 9

9 9 9 9 9 9 9 9 9 9 9 9 9 10 10

10 10 10 11 10 10 10 10 11 11 10 10 11 10 10

session (maximum):

10 10 10 10 10 10 10 9 10 10 10 10 10 10 10

13 13 13 13 12 12 11 10 10 10 10 10 10 10 9

10 10 9 9 9 9 9 9 9 9 9 10 10 10 10

11 11 11 11 11 11 11 11 11 11 11 11 11 10 10

packet buffer (average):

0 0 0 0 0 0 0 0 0 0 0 0 0 0 0

0 0 0 0 0 0 0 0 0 0 0 0 0 0 0

0 0 0 0 0 0 0 0 0 0 0 0 0 0 0

0 0 0 0 0 0 0 0 0 0 0 0 0 0 0

packet buffer (maximum):

0 0 0 0 0 0 0 0 0 0 0 0 0 0 0

0 0 0 0 0 0 0 0 0 0 0 0 0 0 0

0 0 0 0 0 0 0 0 0 0 0 0 0 0 0

0 0 0 0 0 0 0 0 0 0 0 0 0 0 0

packet descriptor (average):

0 0 0 0 0 0 0 0 0 0 0 0 0 0 0

0 0 0 0 0 0 0 0 0 0 0 0 0 0 0

0 0 0 0 0 0 0 0 0 0 0 0 0 0 0

0 0 0 0 0 0 0 0 0 0 0 0 0 0 0

packet descriptor (maximum):

0 0 0 0 0 0 0 0 0 0 0 0 0 0 0

0 0 0 0 0 0 0 0 0 0 0 0 0 0 0

0 0 0 0 0 0 0 0 0 0 0 0 0 0 0

0 0 0 0 0 0 0 0 0 0 0 0 0 0 0

packet descriptor (on-chip) (average):

2 2 2 2 2 1 2 2 2 2 2 2 2 2 2

2 2 2 2 2 2 2 2 2 2 2 2 2 2 2

2 2 1 1 1 1 1 2 1 1 2 1 1 1 2

2 2 2 2 2 1 2 2 2 1 2 2 2 2 2

packet descriptor (on-chip) (maximum):

2 2 2 2 2 2 2 2 2 2 2 2 2 2 4

2 2 2 3 2 2 2 2 2 2 2 2 2 3 3

2 2 2 2 2 2 2 2 2 2 2 2 2 2 2

2 2 2 2 2 2 2 2 2 2 3 2 2 2 2

Accepted Solutions

03-19-2018 05:37 AM

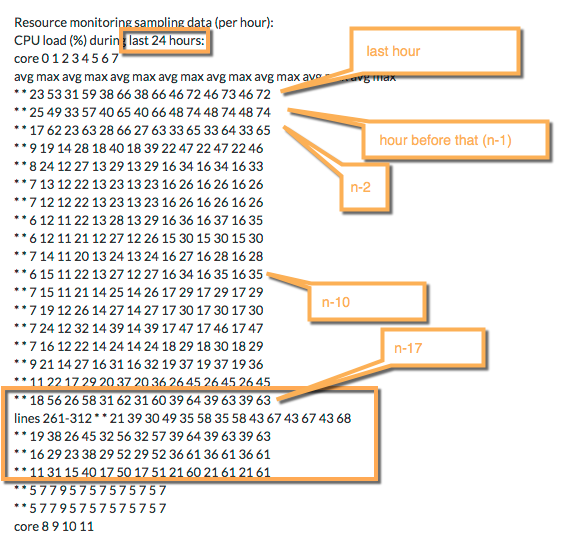

The 24-hour table from the resource monitoring output.

PANgurus - Strata & Prisma Access specialist

03-16-2018 06:04 AM

Resource monitoring sampling data (per hour):

CPU load (%) during last 24 hours:

core 0 1 2 3 4 5 6 7

avg max avg max avg max avg max avg max avg max avg max avg max

* * 23 53 31 59 38 66 38 66 46 72 46 73 46 72

* * 25 49 33 57 40 65 40 66 48 74 48 74 48 74

* * 17 62 23 63 28 66 27 63 33 65 33 64 33 65

* * 9 19 14 28 18 40 18 39 22 47 22 47 22 46

* * 8 24 12 27 13 29 13 29 16 34 16 34 16 33

* * 7 13 12 22 13 23 13 23 16 26 16 26 16 26

* * 7 12 12 22 13 23 13 23 16 26 16 26 16 26

* * 6 12 11 22 13 28 13 29 16 36 16 37 16 35

* * 6 12 11 21 12 27 12 26 15 30 15 30 15 30

* * 7 14 11 20 13 24 13 24 16 27 16 28 16 28

* * 6 15 11 22 13 27 12 27 16 34 16 35 16 35

* * 7 15 11 21 14 25 14 26 17 29 17 29 17 29

* * 7 19 12 26 14 27 14 27 17 30 17 30 17 30

* * 7 24 12 32 14 39 14 39 17 47 17 46 17 47

* * 7 16 12 22 14 24 14 24 18 29 18 30 18 29

* * 9 21 14 27 16 31 16 32 19 37 19 37 19 36

* * 11 22 17 29 20 37 20 36 26 45 26 45 26 45

* * 18 56 26 58 31 62 31 60 39 64 39 63 39 63

* * 21 39 30 49 35 58 35 58 43 67 43 67 43 68

* * 19 38 26 45 32 56 32 57 39 64 39 63 39 63

* * 16 29 23 38 29 52 29 52 36 61 36 61 36 61

* * 11 31 15 40 17 50 17 51 21 60 21 61 21 61

* * 5 7 7 9 5 7 5 7 5 7 5 7 5 7

* * 5 7 7 9 5 7 5 7 5 7 5 7 5 7

core 8 9 10 11

avg max avg max avg max avg max

46 73 45 73 45 73 31 58

47 74 47 74 47 74 32 55

33 65 33 64 33 65 22 55

22 46 22 47 22 47 13 27

16 33 16 34 16 33 11 26

16 27 16 26 16 26 11 22

16 26 16 27 16 26 11 22

16 37 16 36 16 34 11 23

15 29 15 29 15 30 10 21

16 27 16 28 16 27 11 21

15 35 15 34 15 34 10 22

17 29 17 29 17 29 11 21

17 30 17 30 17 30 11 26

17 47 17 47 17 46 11 31

18 29 18 29 18 29 11 23

19 37 19 36 19 36 13 26

25 45 25 44 25 45 16 28

38 63 38 63 38 62 25 42

43 67 43 66 43 66 29 48

39 63 39 63 39 62 26 44

36 61 36 61 36 62 23 37

21 61 21 60 21 60 14 41

5 7 5 7 5 7 5 7

5 7 5 7 5 7 5 7

Resource utilization (%) during last 24 hours:

session (average):

10 9 7 5 5 5 5 5 5 5 5 5 5 5 5

5 7 9 10 9 9 10 11 10

session (maximum):

13 13 12 9 8 9 9 9 9 8 8 8 8 9 9

9 12 13 13 12 12 12 12 12

packet buffer (average):

0 0 0 0 0 0 0 0 0 0 0 0 0 0 0

0 0 0 0 0 0 0 0 0

packet buffer (maximum):

0 1 1 0 0 0 0 0 0 0 0 0 0 0 0

0 0 1 0 0 0 1 0 0

packet descriptor (average):

0 0 0 0 0 0 0 0 0 0 0 0 0 0 0

0 0 0 0 0 0 0 0 0

packet descriptor (maximum):

0 0 0 0 0 0 0 0 0 0 0 0 0 0 0

0 0 0 0 0 0 0 0 0

packet descriptor (on-chip) (average):

2 2 2 1 1 1 1 1 1 1 1 1 1 1 1

1 1 2 2 1 1 1 1 1

packet descriptor (on-chip) (maximum):

3 14 19 2 2 3 2 2 2 3 3 3 2 3 2

3 4 26 4 7 3 17 2 2

Resource monitoring sampling data (per day):

CPU load (%) during last 7 days:

core 0 1 2 3 4 5 6 7

avg max avg max avg max avg max avg max avg max avg max avg max

* * 7 56 11 58 12 62 12 60 14 67 14 67 14 68

* * 3 5 4 7 2 5 2 5 2 5 2 5 2 5

* * 3 9 4 10 2 8 2 8 2 8 2 8 2 8

* * 3 7 4 9 2 7 2 7 2 7 2 7 2 7

* * 2 3 3 5 1 3 1 3 1 3 1 3 1 3

* * 2 4 3 5 1 3 1 3 1 3 1 3 1 3

* * 3 7 4 9 2 7 2 7 2 7 2 7 2 7

core 8 9 10 11

avg max avg max avg max avg max

14 67 14 66 14 66 10 48

2 5 2 5 2 5 2 5

2 8 2 8 2 8 2 8

2 7 2 7 2 7 2 7

1 3 1 3 1 3 1 3

1 3 1 3 1 3 1 3

2 7 2 7 2 7 2 7

Resource utilization (%) during last 7 days:

session (average):

7 5 6 5 3 3 5

session (maximum):

13 7 11 10 4 5 10

packet buffer (average):

0 0 0 0 0 0 0

packet buffer (maximum):

1 0 0 0 0 0 0

packet descriptor (average):

0 0 0 0 0 0 0

packet descriptor (maximum):

0 0 0 0 0 0 0

packet descriptor (on-chip) (average):

1 1 1 1 1 1 1

packet descriptor (on-chip) (maximum):

26 2 2 2 2 2 2

Resource monitoring sampling data (per week):

CPU load (%) during last 13 weeks:

core 0 1 2 3 4 5 6 7

avg max avg max avg max avg max avg max avg max avg max avg max

* * 2 9 4 10 2 8 2 8 2 8 2 8 2 8

* * 2 8 4 10 2 8 2 8 2 8 2 8 2 8

* * 2 7 4 8 2 6 2 7 2 7 2 7 2 7

* * 2 7 3 10 2 8 2 8 2 8 2 8 2 8

* * 2 7 3 9 2 7 2 7 2 7 2 7 2 7

* * 2 7 3 9 2 7 2 7 2 7 2 7 2 7

* * 2 7 4 9 2 7 2 7 2 7 2 7 2 7

* * 3 7 4 9 2 7 2 7 2 7 2 7 2 7

* * 2 8 3 8 2 7 2 6 2 6 2 7 2 6

* * 8 58 12 58 14 57 14 56 16 66 17 67 17 67

* * 7 30 11 34 13 43 13 46 16 53 16 54 16 53

* * 9 61 13 59 14 57 14 50 17 63 17 63 17 63

* * 14 65 19 69 23 72 23 72 28 79 28 79 28 79

core 8 9 10 11

avg max avg max avg max avg max

2 8 2 8 2 8 2 8

2 8 2 8 2 8 2 8

2 6 2 7 2 7 2 7

2 8 2 8 2 8 2 8

2 7 2 7 2 7 2 7

2 7 2 7 2 7 2 7

2 7 2 7 2 7 2 7

2 7 2 7 2 7 2 7

2 7 2 6 2 6 2 6

16 66 16 65 16 66 11 45

15 53 15 53 15 53 10 52

17 62 17 63 17 63 11 42

28 79 28 79 28 79 18 64

Resource utilization (%) during last 13 weeks:

session (average):

5 4 4 4 4 4 4 5 4 4 4 4 6

session (maximum):

11 9 8 8 8 8 9 9 8 8 7 8 13

packet buffer (average):

0 0 0 0 0 0 0 0 0 0 0 0 0

packet buffer (maximum):

0 0 0 0 0 0 0 0 0 1 1 1 2

packet descriptor (average):

0 0 0 0 0 0 0 0 0 0 0 0 0

packet descriptor (maximum):

0 0 0 0 0 0 0 0 0 0 0 1 1

packet descriptor (on-chip) (average):

1 1 1 1 1 1 1 1 1 1 1 1 1

packet descriptor (on-chip) (maximum):

2 2 2 2 2 2 2 2 2 20 16 36 52

DP dp1:

Resource monitoring sampling data (per second):

CPU load sampling by group:

flow_lookup : 46%

flow_fastpath : 44%

flow_slowpath : 46%

flow_forwarding : 46%

flow_mgmt : 27%

flow_ctrl : 28%

nac_result : 50%

flow_np : 44%

dfa_result : 50%

module_internal : 46%

aho_result : 53%

zip_result : 50%

pktlog_forwarding : 28%

lwm : 0%

flow_host : 48%

CPU load (%) during last 60 seconds:

core 0 1 2 3 4 5 6 7 8 9 10 11

* 28 30 46 46 58 58 59 58 58 59 30

* 27 28 40 41 53 52 53 51 52 52 28

* 27 29 41 42 54 53 54 53 54 53 29

* 26 28 41 41 54 53 53 53 53 54 28

* 26 27 39 39 51 51 51 52 51 51 27

* 27 28 41 41 54 53 53 52 52 53 28

* 28 29 45 44 57 56 57 56 56 56 29

* 26 27 42 42 54 54 54 54 53 54 27

* 24 26 39 39 50 51 51 51 50 51 24

* 26 27 42 42 54 55 55 54 53 54 26

* 32 35 51 51 64 64 65 63 63 64 35

* 33 34 52 52 64 63 63 63 63 63 34

* 30 32 47 47 60 58 59 58 58 59 31

* 27 29 43 43 55 54 55 55 56 55 28

* 24 26 41 40 52 52 53 52 54 52 24

* 26 28 43 43 55 56 56 56 55 55 27

* 25 28 41 42 54 54 55 54 53 54 26

* 25 27 40 41 53 54 54 52 53 54 26

* 28 29 44 45 57 58 57 56 57 58 29

* 29 31 46 46 58 58 58 57 57 59 30

* 29 29 45 43 56 56 55 55 55 55 28

* 27 27 44 43 56 54 55 55 54 54 27

* 22 23 36 37 48 47 48 48 47 47 22

* 18 19 30 30 41 41 41 40 41 41 18

* 19 20 30 30 42 43 43 41 43 42 19

* 24 26 37 37 51 51 51 50 51 51 25

* 30 31 44 45 58 58 58 57 58 57 31

* 28 29 42 42 54 54 54 54 55 53 28

* 25 27 39 40 52 53 52 51 53 52 27

* 27 30 42 43 57 58 58 58 58 58 30

* 31 34 48 48 65 64 64 64 65 64 34

* 32 34 49 48 65 66 65 65 65 64 34

* 30 31 45 45 60 61 61 60 60 61 31

* 30 31 45 44 59 59 58 59 58 59 30

* 29 30 44 43 58 59 58 58 57 58 29

* 37 40 55 54 68 69 68 68 69 68 39

* 36 39 55 54 68 67 68 67 67 67 38

* 30 31 46 46 59 58 59 58 59 60 31

* 30 31 44 44 58 56 57 57 57 57 30

* 36 38 51 52 64 64 64 64 63 63 37

* 37 40 56 55 69 68 68 68 67 68 40

* 30 32 47 47 59 59 59 58 59 59 32

* 29 31 44 44 56 56 57 56 56 55 30

* 31 32 45 45 58 58 58 58 58 57 32

* 28 29 42 41 54 54 54 54 55 54 28

* 25 26 38 37 49 50 50 50 50 50 25

* 24 25 36 35 48 48 48 49 48 47 25

* 26 28 39 38 53 53 53 54 54 52 27

* 31 31 44 43 57 57 57 57 58 57 31

* 28 30 41 42 54 53 53 53 53 53 29

* 25 27 39 39 51 51 51 50 50 49 27

* 26 27 39 38 51 50 51 51 51 50 27

* 23 24 36 35 47 47 48 47 48 49 24

* 21 23 36 36 47 48 49 48 48 48 23

* 24 25 38 38 50 50 50 49 49 49 25

* 27 28 41 41 53 54 53 52 53 53 27

* 25 28 41 42 54 54 54 53 53 54 27

* 27 29 44 44 56 56 56 56 55 56 29

* 30 33 49 49 61 61 62 62 61 62 33

* 28 30 46 46 58 59 59 58 58 59 31

Resource utilization (%) during last 60 seconds:

session:

5 5 5 5 5 5 5 5 5 5 5 5 5 5 5

5 5 5 5 5 5 5 5 5 5 5 5 5 5 5

5 5 5 5 5 5 5 5 5 5 5 5 5 5 5

5 5 5 5 5 5 5 5 5 5 5 5 5 5 5

packet buffer:

1 1 1 1 1 1 1 1 1 1 1 1 1 1 1

1 1 1 1 1 1 1 1 1 1 1 1 1 1 1

1 1 1 1 1 1 1 1 1 1 1 1 1 1 1

1 1 1 1 1 1 1 1 1 1 1 1 1 1 1

packet descriptor:

1 1 1 1 1 1 1 1 1 1 1 1 1 1 1

1 1 1 1 1 1 1 1 1 1 1 1 1 1 1

1 1 1 1 1 1 1 1 0 0 0 1 1 1 1

1 1 1 1 1 1 1 1 1 1 1 1 1 1 1

packet descriptor (on-chip):

1 2 2 2 2 2 2 2 2 2 2 2 2 2 2

2 2 2 2 2 2 2 2 2 2 2 2 1 2 2

2 2 2 2 1 1 2 2 2 2 2 2 1 2 2

2 2 1 2 2 2 2 2 2 2 2 2 2 2 2

Resource monitoring sampling data (per minute):

CPU load (%) during last 60 minutes:

core 0 1 2 3 4 5 6 7

avg max avg max avg max avg max avg max avg max avg max avg max

* * 29 43 31 46 45 63 45 63 58 75 58 75 58 75

* * 28 38 30 41 45 59 44 58 57 71 57 71 57 71

* * 28 38 30 42 44 58 44 58 57 70 57 71 57 69

* * 25 37 27 39 40 56 40 55 52 68 52 69 52 69

* * 27 37 29 41 44 60 43 59 56 72 56 72 56 72

* * 27 37 29 39 42 56 42 56 54 68 55 68 54 68

* * 25 41 27 43 40 61 40 60 52 72 52 73 52 72

* * 24 41 26 43 39 61 39 61 51 73 51 73 51 73

* * 25 34 27 36 40 52 40 51 52 63 52 63 52 62

* * 24 31 26 33 38 47 38 47 50 60 50 60 50 60

* * 27 39 29 42 43 58 42 58 55 70 55 70 55 70

* * 25 36 27 39 39 56 39 56 51 67 51 68 51 67

* * 27 39 29 42 43 59 42 59 55 72 55 71 55 72

* * 27 38 29 39 43 57 43 56 55 68 55 68 55 69

* * 25 36 27 38 41 56 41 55 54 67 54 68 54 67

* * 29 44 31 46 46 65 46 65 58 77 58 77 58 77

* * 30 46 32 49 48 68 48 68 60 79 60 79 60 79

* * 30 53 31 56 46 72 46 72 58 82 58 82 58 81

* * 34 51 36 54 52 72 52 73 64 83 64 82 63 82

* * 30 59 31 63 46 78 46 78 58 86 58 86 58 85

* * 30 50 31 53 46 70 46 70 58 80 58 80 58 81

* * 29 38 30 42 44 58 44 59 57 69 57 70 57 70

* * 29 44 30 48 45 66 45 65 58 76 58 76 58 76

* * 26 34 27 37 40 55 40 54 52 66 52 66 52 66

* * 31 42 33 45 47 61 46 62 59 74 59 74 59 74

* * 29 42 31 45 47 64 47 64 60 76 59 75 59 76

* * 29 41 31 44 48 62 48 62 60 75 60 75 61 75

* * 28 42 30 46 44 63 44 63 57 75 57 76 57 75

* * 28 40 30 42 45 62 45 62 57 74 57 74 57 75

* * 27 38 29 40 43 56 43 56 55 68 56 68 56 69

* * 26 34 28 37 42 58 42 57 54 69 54 69 54 69

* * 26 45 28 48 41 63 41 62 53 74 53 74 53 74

* * 22 30 23 33 35 48 35 48 46 59 47 59 47 60

* * 22 33 23 37 34 50 33 51 45 63 45 63 45 63

* * 21 32 22 34 33 48 33 48 44 60 45 60 45 61

* * 23 34 25 36 38 54 38 54 50 65 50 66 50 67

* * 23 32 25 34 37 51 37 50 49 63 49 63 49 62

* * 23 36 25 39 37 56 36 55 49 69 49 69 49 69

* * 23 33 25 36 37 53 38 52 49 63 49 64 49 63

* * 22 29 23 31 36 50 36 49 47 61 47 61 47 61

* * 22 35 24 38 36 55 35 54 47 68 47 68 47 68

* * 23 33 25 35 38 53 38 53 49 65 49 66 50 66

* * 25 35 27 37 41 52 41 52 53 64 53 64 53 64

* * 26 45 27 48 42 64 41 63 53 74 53 75 53 75

* * 30 39 32 42 47 59 46 59 58 71 58 71 58 71

* * 28 42 30 46 43 60 43 60 56 72 56 72 56 71

* * 28 42 30 45 44 62 44 61 57 74 57 73 57 73

* * 34 53 36 56 52 74 52 74 64 83 64 82 64 83

* * 33 58 36 62 50 75 50 75 62 84 62 85 62 84

* * 32 52 34 56 49 74 49 74 61 83 61 82 61 83

* * 36 55 38 58 54 76 54 75 66 84 66 84 66 85

* * 29 48 31 50 46 65 46 65 58 76 58 77 58 77

* * 29 42 31 45 46 61 46 61 58 73 58 73 58 73

* * 30 43 32 47 47 65 46 65 59 77 59 76 59 77

* * 28 42 29 45 43 63 43 63 55 74 55 74 56 75

* * 28 41 30 44 44 59 44 58 57 71 57 71 57 71

* * 29 45 31 47 45 66 45 65 58 78 58 77 58 77

* * 33 54 35 57 51 77 51 76 63 86 63 85 63 86

* * 30 43 32 47 46 62 45 62 58 73 58 73 58 72

* * 29 48 31 50 45 69 45 70 58 81 58 80 58 80

core 8 9 10 11

avg max avg max avg max avg max

58 75 58 75 58 75 30 46

57 72 57 72 57 72 29 41

57 70 56 70 57 70 29 41

52 68 52 68 52 68 26 40

55 72 55 72 56 71 29 41

54 68 54 68 54 68 28 39

52 73 52 72 52 72 27 43

51 73 51 73 51 72 25 43

52 63 52 63 52 63 26 35

50 61 50 60 50 60 25 32

55 70 55 69 55 69 28 42

51 67 50 67 51 67 26 38

54 72 54 71 54 72 28 41

54 68 54 68 55 68 29 39

54 67 53 67 53 66 26 38

58 77 58 77 58 77 30 47

60 79 60 79 60 78 31 49

58 82 58 82 58 82 31 56

63 83 63 83 63 83 36 54

58 85 57 85 57 85 31 63

58 80 58 80 58 80 31 53

57 71 57 70 57 70 30 41

57 76 57 76 57 75 30 47

52 67 52 66 52 66 27 37

58 73 59 73 59 74 33 45

59 75 59 76 59 76 31 44

60 74 60 75 60 75 30 43

57 76 57 75 57 75 30 45

57 75 57 74 57 74 30 43

55 68 55 68 55 68 29 39

53 69 54 69 53 68 27 36

53 74 52 74 53 73 28 48

46 59 46 60 46 59 23 32

45 63 45 63 45 62 23 35

44 60 44 60 44 59 22 34

50 66 50 66 50 66 25 36

49 63 49 63 49 63 24 34

49 68 49 68 49 69 24 38

49 64 49 64 49 64 25 36

47 61 47 61 47 61 23 32

46 67 46 67 46 68 23 38

49 66 49 65 49 65 25 35

53 64 53 64 53 64 27 36

53 74 52 74 53 74 27 48

58 71 58 71 58 71 31 42

55 71 55 71 55 71 30 46

57 72 57 73 57 73 30 45

64 83 64 83 64 83 36 56

62 84 62 84 62 84 35 63

61 82 60 82 61 83 34 56

66 85 66 85 66 85 38 59

58 76 58 76 58 77 31 50

58 73 58 73 58 73 31 44

59 76 59 76 59 76 32 47

55 74 55 74 55 74 29 45

57 70 57 71 57 70 30 45

57 77 57 77 57 77 30 48

63 86 63 85 63 85 35 58

58 73 57 72 58 73 32 47

58 81 58 81 58 80 31 51

Resource utilization (%) during last 60 minutes:

session (average):

5 5 5 5 5 5 5 4 4 5 5 5 5 5 5

5 5 5 5 5 5 5 5 5 5 5 5 5 5 4

4 5 4 4 4 4 4 4 4 4 4 4 4 5 5

5 5 5 5 5 5 5 5 5 5 5 5 5 5 5

session (maximum):

5 5 5 5 5 5 5 5 5 5 5 5 5 5 5

6 5 6 5 6 6 5 5 5 5 5 5 5 5 5

4 5 5 4 4 4 4 4 4 4 4 5 5 5 5

5 5 6 6 6 5 5 5 5 5 5 5 6 5 5

packet buffer (average):

1 1 1 1 1 1 1 1 1 1 1 1 1 1 1

1 1 1 1 1 1 1 1 1 1 1 1 1 1 1

1 1 1 1 1 1 1 1 1 1 1 1 1 1 1

1 1 1 1 1 1 1 1 1 1 1 1 1 1 1

packet buffer (maximum):

1 1 1 1 1 1 1 1 1 1 1 1 1 1 1

1 1 1 1 1 1 1 1 1 1 1 1 1 1 1

1 1 1 1 1 1 1 1 1 1 1 1 1 1 1

1 1 1 1 1 1 1 1 1 1 1 1 1 1 1

packet descriptor (average):

1 1 1 1 1 1 1 1 1 1 1 1 1 1 1

1 1 1 1 1 1 1 1 1 1 1 1 1 1 1

1 1 1 1 1 1 1 0 1 1 1 1 1 1 1

1 1 1 1 1 1 1 1 1 1 1 1 1 1 1

packet descriptor (maximum):

1 1 1 1 1 1 1 1 1 1 1 1 1 1 1

1 1 1 1 1 1 1 1 1 1 1 1 1 1 1

1 1 1 1 1 1 1 1 1 1 1 1 1 1 1

1 1 1 1 1 1 1 1 1 1 1 1 1 1 1

packet descriptor (on-chip) (average):

2 2 2 2 2 2 2 2 2 2 2 2 2 2 2

2 2 2 2 2 2 2 2 2 2 2 2 2 2 2

2 2 2 2 2 2 2 2 2 2 2 2 2 2 2

2 2 2 2 2 2 2 2 2 2 2 2 2 2 2

packet descriptor (on-chip) (maximum):

2 2 3 2 2 3 2 2 2 4 3 2 2 2 2

2 2 2 2 2 2 2 2 2 3 3 2 2 3 3

4 2 2 2 2 2 2 2 2 2 2 2 2 3 2

2 2 2 2 2 3 2 2 2 2 2 3 2 2 2

Resource monitoring sampling data (per hour):

CPU load (%) during last 24 hours:

core 0 1 2 3 4 5 6 7

avg max avg max avg max avg max avg max avg max avg max avg max

* * 28 59 30 63 43 78 43 78 55 86 55 86 56 86

* * 28 62 30 66 44 84 44 85 56 91 56 91 56 91

* * 19 63 20 67 31 87 31 87 41 93 41 92 41 92

* * 12 42 14 45 24 67 24 66 30 75 31 75 31 74

* * 10 22 11 24 15 48 15 48 20 58 20 58 20 58

* * 10 34 11 34 15 37 15 37 20 42 20 42 20 42

* * 9 16 10 18 15 33 15 32 20 40 20 40 20 41

* * 10 28 11 29 18 44 18 44 25 62 25 64 25 64

* * 10 25 12 25 20 38 20 39 26 49 26 49 26 49

* * 9 20 10 21 16 31 16 30 22 42 22 42 22 42

* * 8 35 9 36 14 43 14 44 20 52 21 52 21 51

* * 8 43 10 43 18 48 17 48 24 55 24 54 24 54

* * 9 32 10 34 17 49 17 49 23 57 24 58 23 59

* * 9 43 10 41 16 57 16 56 22 68 22 68 22 68

* * 9 24 10 25 17 36 17 36 24 50 24 49 24 49

* * 8 18 10 19 17 37 17 38 25 51 25 50 25 51

* * 13 53 14 53 24 64 24 63 33 73 33 71 33 70

* * 21 52 22 57 36 76 36 76 48 86 48 87 48 86

* * 24 57 26 60 42 81 41 81 54 89 54 88 54 89

* * 21 47 22 50 37 77 37 76 49 85 49 86 49 86

* * 18 45 19 48 32 75 32 75 44 89 44 89 44 88

* * 11 48 12 48 18 71 18 71 24 82 24 81 24 81

* * 2 3 4 7 2 5 2 5 2 5 2 5 2 5

* * 2 3 4 6 2 4 2 4 2 4 2 4 2 4

core 8 9 10 11

avg max avg max avg max avg max

55 86 55 85 55 85 29 63

55 91 55 91 55 91 29 66

41 92 40 92 40 92 19 67

30 74 30 74 30 74 12 44

20 57 19 58 19 57 9 22

20 42 20 41 20 41 9 30

20 41 20 40 20 41 9 16

25 63 25 63 25 62 10 26

26 49 26 48 26 48 10 23

21 42 21 42 21 42 9 19

20 51 20 52 20 50 7 32

24 54 24 53 24 54 8 36

23 58 23 58 23 58 9 32

22 68 22 68 22 68 8 37

24 49 24 49 24 49 8 21

25 53 25 54 25 52 8 17

33 69 33 68 33 68 13 41

48 86 48 86 48 85 22 56

54 88 54 89 54 88 26 61

49 85 49 86 49 86 22 52

44 89 44 90 44 90 19 48

24 82 24 81 24 82 11 48

2 5 2 5 2 5 2 5

2 4 2 4 2 4 2 4

Resource utilization (%) during last 24 hours:

session (average):

5 5 3 2 2 2 2 2 2 2 2 2 2 2 2

2 3 4 5 4 4 4 4 4

session (maximum):

6 6 5 3 2 2 2 2 2 2 2 2 2 2 3

3 4 5 6 5 5 5 5 5

packet buffer (average):

1 1 1 0 0 0 0 0 0 0 0 0 0 0 0

0 1 1 1 1 1 0 0 0

packet buffer (maximum):

1 1 1 1 1 1 1 1 1 1 1 1 1 1 1

1 1 2 1 1 1 2 0 0

packet descriptor (average):

1 1 0 0 0 0 0 0 0 0 0 0 0 0 0

0 0 1 1 0 0 0 0 0

packet descriptor (maximum):

1 1 1 1 1 1 1 0 0 0 1 1 1 0 1

1 1 1 1 1 1 1 0 0

packet descriptor (on-chip) (average):

2 2 2 2 1 1 1 2 2 2 2 2 2 2 2

1 2 2 2 2 2 1 1 1

packet descriptor (on-chip) (maximum):

4 13 14 4 6 3 2 3 5 4 3 5 4 7 3

3 14 4 3 3 17 6 2 2

Resource monitoring sampling data (per day):

CPU load (%) during last 7 days:

core 0 1 2 3 4 5 6 7

avg max avg max avg max avg max avg max avg max avg max avg max

* * 7 57 9 60 13 81 13 81 17 89 17 89 17 89

* * 1 2 3 5 1 3 1 3 1 3 1 3 1 3

* * 1 3 3 7 1 5 1 5 1 5 1 5 1 5

* * 1 3 3 7 1 5 1 4 1 5 1 5 1 5

* * 1 2 3 4 1 2 1 2 1 2 1 2 1 2

* * 1 2 3 4 1 2 1 2 1 2 1 2 1 2

* * 1 3 3 7 1 4 1 4 1 4 1 5 1 4

core 8 9 10 11

avg max avg max avg max avg max

17 89 17 90 17 90 7 61

1 3 1 3 1 3 1 3

1 5 1 4 1 4 1 4

1 4 1 5 1 4 1 4

1 2 1 2 1 2 1 2

1 2 1 2 1 2 1 2

1 4 1 5 1 4 1 4

Resource utilization (%) during last 7 days:

session (average):

3 2 2 2 1 1 2

session (maximum):

6 2 4 4 2 2 4

packet buffer (average):

0 0 0 0 0 0 0

packet buffer (maximum):

2 0 0 0 0 0 0

packet descriptor (average):

0 0 0 0 0 0 0

packet descriptor (maximum):

1 0 0 0 0 0 0

packet descriptor (on-chip) (average):

1 1 1 1 1 1 1

packet descriptor (on-chip) (maximum):

17 2 2 2 2 2 2

Resource monitoring sampling data (per week):

CPU load (%) during last 13 weeks:

core 0 1 2 3 4 5 6 7

avg max avg max avg max avg max avg max avg max avg max avg max

* * 1 3 3 7 1 5 1 5 1 5 1 5 1 5

* * 1 3 3 6 1 4 1 4 1 4 1 5 1 4

* * 1 2 3 7 1 5 1 5 1 5 1 5 1 5

* * 1 3 3 8 1 6 1 6 1 6 1 6 1 6

* * 1 3 3 7 1 4 1 4 1 4 1 4 1 4

* * 1 3 3 7 1 5 1 5 1 5 1 5 1 5

* * 1 3 3 8 1 5 1 5 1 5 1 5 1 5

* * 1 4 3 7 1 5 1 5 1 5 1 5 1 5

* * 1 5 3 7 1 4 1 4 1 4 1 4 1 4

* * 6 66 7 76 11 69 11 68 15 78 15 78 15 79

* * 5 37 7 43 10 64 10 63 13 73 14 74 14 74

* * 6 64 7 72 11 81 11 81 15 90 15 90 15 90

* * 12 80 14 82 21 91 20 91 28 96 28 96 28 96

core 8 9 10 11

avg max avg max avg max avg max

1 5 1 5 1 5 1 5

1 4 1 6 1 4 1 4

1 5 1 5 1 5 1 5

1 6 1 6 1 6 1 7

1 5 1 4 1 4 1 4

1 5 1 5 1 5 1 5

1 5 1 5 1 5 1 5

1 5 1 5 1 5 1 5

1 4 1 4 1 4 1 4

14 77 14 78 14 77 6 47

13 73 13 74 13 74 5 41

15 90 15 90 15 90 6 49

28 96 28 96 28 96 13 76

Resource utilization (%) during last 13 weeks:

session (average):

2 2 2 1 1 2 2 2 2 2 2 1 3

session (maximum):

4 3 3 3 3 3 3 3 3 3 3 4 7

packet buffer (average):

0 0 0 0 0 0 0 0 0 0 0 0 1

packet buffer (maximum):

0 0 0 0 0 0 0 0 0 2 1 2 2

packet descriptor (average):

0 0 0 0 0 0 0 0 0 0 0 0 0

packet descriptor (maximum):

0 0 0 0 0 0 0 0 0 1 1 1 1

packet descriptor (on-chip) (average):

1 1 1 1 1 1 1 1 1 1 1 1 2

packet descriptor (on-chip) (maximum):

2 2 2 2 2 2 2 2 2 72 15 72 84
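One way to make the per-second core tables easier to read is to average each core over the sampled rows. The following is only a minimal sketch, assuming the rows are pasted as text in the same shape as the "CPU load (%) during last 60 seconds" block above (a leading `*` for core 0, then one value per core). The function names `parse_core_rows` and `per_core_average` are made up for illustration and are not part of PAN-OS. It does not handle the per-minute and per-hour tables, which use avg/max column pairs.

```python
from statistics import mean


def parse_core_rows(text):
    """Parse rows such as '* 32 41 48 ...' into lists indexed by core number.

    The '*' printed in the core 0 column is stored as None.
    Lines that do not start with '*' are ignored.
    """
    rows = []
    for line in text.splitlines():
        fields = line.split()
        if not fields or fields[0] != "*":
            continue
        rows.append([None] + [int(f) for f in fields[1:]])
    return rows


def per_core_average(rows):
    """Return {core_number: mean load} for every core except core 0."""
    core_count = len(rows[0])
    return {core: mean(r[core] for r in rows) for core in range(1, core_count)}


# First three rows of the dp0 per-second table from the post.
sample = """\
* 32 41 48 48 57 58 58 58 57 57 40
* 21 28 32 33 38 40 40 40 39 39 28
* 18 27 30 30 36 37 37 37 37 37 26
"""

rows = parse_core_rows(sample)
averages = per_core_average(rows)
for core, load in averages.items():
    print(f"core {core}: {load:.1f}%")
```

Running the same function over the dp0 and dp1 blocks separately gives a quick side-by-side view of which dataplane and which cores carry the most load.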

------------------------------------

show session info

target-dp: *.dp0

--------------------------------------------------------------------------------

Number of sessions supported: 2000000

Number of active sessions: 116741

Number of active TCP sessions: 84978

Number of active UDP sessions: 19980

Number of active ICMP sessions: 9810

Number of active BCAST sessions: 0

Number of active MCAST sessions: 0

Number of active predict sessions: 463

Session table utilization: 5%

Number of sessions created since bootup: 15143039136

Packet rate: 14128/s

Throughput: 29365 kbps

New connection establish rate: 3284 cps

--------------------------------------------------------------------------------

Session timeout

TCP default timeout: 14400 secs

TCP session timeout before SYN-ACK received: 5 secs

TCP session timeout before 3-way handshaking: 10 secs

TCP half-closed session timeout: 120 secs

TCP session timeout in TIME_WAIT: 15 secs

TCP session timeout for unverified RST: 30 secs

UDP default timeout: 30 secs

ICMP default timeout: 6 secs

other IP default timeout: 30 secs

Captive Portal session timeout: 30 secs

Session timeout in discard state:

TCP: 90 secs, UDP: 60 secs, other IP protocols: 60 secs

--------------------------------------------------------------------------------

Session accelerated aging: True

Accelerated aging threshold: 80% of utilization

Scaling factor: 2 X

--------------------------------------------------------------------------------

Session setup

TCP - reject non-SYN first packet: True

Hardware session offloading: True

IPv6 firewalling: True

Strict TCP/IP checksum: True

ICMP Unreachable Packet Rate: 200 pps

--------------------------------------------------------------------------------

Application trickling scan parameters:

Timeout to determine application trickling: 10 secs

Resource utilization threshold to start scan: 80%

Scan scaling factor over regular aging: 8

--------------------------------------------------------------------------------

Session behavior when resource limit is reached: drop

--------------------------------------------------------------------------------

Pcap token bucket rate : 10485760

--------------------------------------------------------------------------------

Max pending queued mcast packets per session : 0

--------------------------------------------------------------------------------

Processing CPU: random

Broadcast first packet: yes

--------------------------------------------------------------------------------

------------------------------------------------------------

show counter global filter delta yes

Global counters:

Elapsed time since last sampling: 23.897 seconds

name value rate severity category aspect description

--------------------------------------------------------------------------------

03-16-2018 06:04 AM

pkt_recv 2941289 123084 info packet pktproc Packets received

pkt_recv_zero 1419953 59420 info packet pktproc Packets received from QoS 0

pkt_sent 2510432 105053 info packet pktproc Packets transmitted

pkt_outstanding -1 0 info packet pktproc Outstanding packet to be transmitted

pkt_alloc 2451120 102571 info packet resource Packets allocated

pkt_alloc_failure 1 0 warn packet resource Packet allocation error

pkt_stp_rcv 1116 46 info packet pktproc STP BPDU packets received

pkt_pvst_rcv 1116 46 info packet pktproc PVST+ BPDU packets received

session_allocated 152906 6398 info session resource Sessions allocated

session_freed 151892 6355 info session resource Sessions freed

session_installed 116183 4861 info session resource Sessions installed

session_predict_dst -9 0 info session resource Active dst predict sessions

session_discard 7577 316 info session resource Session set to discard by security policy check

session_install_error 1 0 warn session pktproc Sessions installation error

session_install_error_s2c 1 0 warn session pktproc Sessions installation error s2c

session_state_error 6 0 drop session pktproc Session state error

session_unverified_rst 7098 296 info session pktproc Session aging timer modified by unverified RST

session_inter_cpu_refresh_msg_rcv 173759 7271 info session pktproc Inter-DP session refresh msg recevied

session_inter_cpu_refresh_msg_snd 173759 7271 info session pktproc Inter-DP session refresh msg sent

session_inter_cpu_teardown_msg_rcv 31773 1329 info session pktproc Inter-DP session teardown msg recevied

session_inter_cpu_teardown_msg_snd 31773 1329 info session pktproc Inter-DP session teardown msg sent

session_dup_pkt_drop 1165 48 drop session resource Duplicate packet: Applies only for multi-DP platform with hardware (Tiger) broadcasting pkt to all DPs

session_tcp_reuse_too_fast 7 0 error session resource TCP session being reused too fast that races with the TCP CLOSE_WAIT state machine

session_hash_insert_duplicate 1 0 warn session pktproc Session setup: hash insert failure due to duplicate entry

session_flow_hash_not_found_teardown 18977 794 warn session pktproc Session setup: flow hash not found during session teardown

session_query_flow_not_found 5 0 error session pktproc Session status query: flow no found

session_query_nack_mismatch 1 0 error session pktproc Session status nack: flow not found

flow_schedule_desched 54 1 info flow pktproc schedule delayed desched

flow_run_desched 54 1 info flow pktproc run delayed desched

flow_rcv_dot1q_tag_err 1682 70 drop flow parse Packets dropped: 802.1q tag not configured

flow_no_interface 1682 70 drop flow parse Packets dropped: invalid interface

flow_np_pkt_rcv 1930311 80777 info flow offload Packets received from offload processor

flow_np_pkt_xmt 2218525 92838 info flow offload Packets transmitted to offload processor

flow_policy_nofwd 98 4 drop flow session Session setup: no destination zone from forwarding

flow_policy_deny 1439 60 drop flow session Session setup: denied by policy

flow_tcp_non_syn 660 27 info flow session Non-SYN TCP packets without session match

flow_tcp_non_syn_drop 660 27 drop flow session Packets dropped: non-SYN TCP without session match

flow_fwd_l3_bcast_drop 4 0 drop flow forward Packets dropped: unhandled IP broadcast

flow_fwd_l3_mcast_drop 389 16 drop flow forward Packets dropped: no route for IP multicast

flow_fwd_notopology 5 0 drop flow forward Packets dropped: no forwarding configured on interface

flow_fwd_mtu_exceeded 1515 62 info flow forward Packets lengths exceeded MTU

flow_fwd_ip_df 12 0 drop flow forward Packets dropped: exceeded MTU but DF bit present

flow_parse_teardrop 10 0 drop flow parse Packets dropped: teardrop attack

flow_parse_unmatched_icmperr 4 0 info flow parse Packets dropped: Unmatched ICMP error message

flow_dos_icmp_replyneedfrag 12 0 warn flow dos Packets dropped: Unsuprressed ICMP Need Fragmentation

flow_dos_pf_ping0 24 1 drop flow dos Packets dropped: Zone protection option 'discard-icmp-ping-zero-id'

flow_dos_pf_icmperr 14 0 drop flow dos Packets dropped: Zone protection option 'discard-icmp-error'

flow_dos_pf_strictip 10 0 drop flow dos Packets dropped: Zone protection option 'strict-ip-check'

flow_dos_rule_allow_under_rate 60 2 info flow dos Packets allowed: Rate within thresholds of DoS policy

flow_dos_rule_match 60 2 info flow dos Packets matched DoS policy

flow_dos_rule_nomatch 39295 1644 info flow dos Packets not matched DoS policy

flow_dos_ag_curr_sess_add_incr 59 2 info flow dos Incremented aggregate current session count on session create

flow_dos_ag_curr_sess_del_decr 87 3 info flow dos Decremented aggregate current session count on session delete

flow_dos_cl_curr_sess_add_incr 59 2 info flow dos Incremented classified current session count on session create

flow_dos_cl_curr_sess_del_decr 87 3 info flow dos Decremented classified current session count on session delete

flow_dos_ag_buckets_upd 96 4 info flow dos Updated aggregate buckets for aging

flow_ipfrag_recv 3718 155 info flow ipfrag IP fragments received

flow_ipfrag_free 1905 79 info flow ipfrag IP fragments freed after defragmentation

flow_ipfrag_merge 1813 75 info flow ipfrag IP defragmentation completed

flow_ipfrag_swbuf 1132 47 info flow ipfrag Software buffers allocated for reassembled IP packet

flow_ipfrag_frag 3756 156 info flow ipfrag IP fragments transmitted

flow_ipfrag_large_pkt_recv 6 0 info flow ipfrag IP fragment large packet(>16k) received

flow_action_predict 696 29 info flow pktproc Predict sessions created

flow_predict_match 690 28 info flow pktproc Packets matched predict session

flow_predict_session 689 28 info flow session Sessions created via predict

flow_predict_session_dup 192 8 info flow session Duplicate Predict Session installation attempts

flow_action_close 7566 316 drop flow pktproc TCP sessions closed via injecting RST

flow_arp_pkt_rcv 135 5 info flow arp ARP packets received

flow_arp_pkt_xmt 315 13 info flow arp ARP packets transmitted

flow_arp_pkt_replied 1 0 info flow arp ARP requests replied

flow_arp_rcv_gratuitous 130 5 info flow arp Gratuitous ARP packets received

flow_arp_rcv_err 1 0 drop flow arp ARP receive error

flow_arp_resolve_xmt 2 0 info flow arp ARP resolution packets transmitted

flow_host_pkt_rcv 2614 109 info flow mgmt Packets received from control plane

flow_host_pkt_xmt 20439 854 info flow mgmt Packets transmitted to control plane

flow_host_xmt_err 1 0 drop flow mgmt Packets dropped: transmit error to control plane

flow_host_service_allow 1218 50 info flow mgmt Device management session allowed

flow_host_service_deny 73 3 drop flow mgmt Device management session denied

flow_host_vardata_rate_limit_ok 12 0 info flow mgmt Host vardata not sent: rate limit ok

flow_health_monitor_rcv 240 10 info flow mgmt Health monitoring packet received

flow_health_monitor_xmt 240 10 info flow mgmt Health monitoring packet transmitted

flow_tunnel_activate 21 0 info flow tunnel Number of packets that triggerred tunnel activation

flow_tunnel_encap_err 2 0 drop flow tunnel Packet dropped: tunnel encapsulation error

flow_tunnel_decap_err 10 0 drop flow tunnel Packet dropped: tunnel decapsulation error

flow_tunnel_ipsec_ip_check 3 0 warn flow tunnel Decrypted tunnel inner packet has tunnel end-point IP address

flow_tunnel_cache_resolve 2015 84 info flow tunnel tunnel structure lookup resolved using session cache

flow_tunnel_encap_resolve 140266 5869 info flow tunnel tunnel structure lookup resolve

flow_tunnel_encap_resolve_mt 2404 100 info flow tunnel Multi-tunnel structure lookup resolve

flow_tunnel_proxyid_resolve 387 16 info flow tunnel Multi-tunnel structure resolved by proxy-id match

flow_session_setup_msg_recv 64688 2707 info flow pktproc Flow msg: session setup messages received

flow_session_refresh_msg_recv 173758 7271 info flow pktproc Flow msg: session refresh messages received

flow_session_teardown_msg_recv 31773 1329 info flow pktproc Flow msg: session remove messages received

flow_predict_add_msg_recv 656 27 info flow pktproc Flow msg: session predict messages received

flow_predict_add_reply_msg_recv 583 24 info flow pktproc Flow msg: session predict reply messages received

flow_predict_request_ack_msg_recv 583 24 info flow pktproc Flow msg: parent session require ack for predict messages received

flow_predict_convert_msg_recv 1078 45 info flow pktproc Flow msg: predict convert messages received

flow_appinfo2ip_insert_msg_recv 171 6 info flow pktproc Flow msg: appinfo2ip insert messages received

flow_appidcache_update_msg_recv 130 4 info flow pktproc Flow msg: appid cache update messages received

flow_event_update_msg_recv 511 20 info flow pktproc Flow msg: event update messages received

flow_smlcache_update_msg_recv 89606 3748 info flow pktproc Flow msg: sml cache update messages received

flow_url_category_msg_recv 2 0 info flow pktproc Flow msg: url category messages received

flow_appid_detect_msg_recv 3713 154 info flow pktproc Flow msg: appid detect update messages received

flow_session_status_query_msg_sent 5 0 info flow pktproc Flow msg: session status query messages sent

flow_session_status_query_msg_recv 5 0 info flow pktproc Flow msg: session status query messages received

flow_session_status_nack_msg_sent 5 0 info flow pktproc Flow msg: session status nack messages sent

flow_session_status_nack_msg_recv 5 0 info flow pktproc Flow msg: session status nack messages received

flow_msg_proc_err 6 0 error flow pktproc Flow msg: Fail to process msg received

flow_msg_appinfo2ip_insert_sent 171 6 info flow pktproc Flow msg: appinfo2ip insert sent

flow_msg_appinfo2ip_insert_recv 171 6 info flow pktproc Flow msg: appinfo2ip insert received

flow_inter_cpu_pkt_fwd 187301 7837 info flow pktproc Pkt forwarded to other CPU

flow_inter_cpu_msg_fwd 334382 13992 info flow pktproc Msg forwarded to other CPU

flow_sfpwq_rcv 49654 2077 info flow offload Session first packet wait queue: packets received

flow_sfpwq_enque 49551 2073 info flow offload Session first packet wait queue: packets enqueued

flow_sfpwq_deque 31254 1307 info flow offload Session first packet wait queue: packets dequeued

flow_sfpwq_enque_skip 23 0 info flow offload Session first packet wait queue: enqueue operation skipped

flow_sfpwq_deque_nomatch 2799 117 info flow offload Session first packet wait queue: dequeue operation returns no match

flow_sfpwq_age 18364 768 info flow offload Session first packet wait queue: packets deleted by ager

flow_sfpwq_err_enque 86 3 error flow offload Session first packet wait queue: enquaue error

flow_sfpwq_enque_no_sess_pkt 49637 2077 info flow offload Session first packet wait queue: packets w/o session being found

flow_rcv_inter_cpu 100532 4207 info flow offload inter-cpu packets received

flow_msg_rcv_inter_cpu 334383 13992 info flow offload inter-cpu message received

flow_netmsg_send 25 1 info flow pktproc netmsg sent

flow_fpga_rcv_igr_IPSIPERR 46 1 info flow offload FPGA IGR Exception: IPSIPERR

flow_fpga_rcv_igr_FRAGERR 3012 126 info flow offload FPGA IGR Exception: FRAGERR

flow_fpga_rcv_igr_INTFNOTFOUND 1687 70 info flow offload FPGA IGR Exception: INTFNOTFOUND

flow_fpga_rcv_egr_L3_REC_NOEND 16 0 info flow offload FPGA EGR Exception: L3_REC_NOEND

flow_fpga_flow_insert 95364 3990 info flow offload fpga flow insert transactions

flow_fpga_flow_delete 49802 2083 info flow offload fpga flow delete transactions

flow_fpga_flow_update 146745 6140 info flow offload fpga flow update transaction

flow_fpga_rcv_stats 170276 7124 info flow offload fpga session refresh/stats message received

flow_fpga_rcv_sess_remove 3092 129 info flow offload fpga session remove message received

flow_fpga_rcv_forwarding 16 0 info flow offload fpga packets for forwarding received

flow_fpga_rcv_slowpath 234843 9827 info flow offload fpga packets slowpath received

flow_fpga_rcv_fastpath 1048930 43894 info flow offload fpga packets for fastpath received

flow_fpga_delete_ack_c2s_dp0 49120 2055 info flow offload tiger delete ack received for c2s flow on dp0

flow_fpga_delete_ack_s2c_dp0 49120 2055 info flow offload tiger delete ack received for s2c flow on dp0

flow_tcp_cksm_sw_validation 28070 1174 info flow pktproc Packets for which TCP checksum validation was done in software

appid_override 33 1 info appid pktproc Application identified by override rule

appid_ident_by_icmp 33487 1401 info appid pktproc Application identified by icmp type

appid_ident_by_simple_sig 2182 90 info appid pktproc Application identified by simple signature

appid_post_pkt_queued 3 0 info appid resource The total trailing packets queued in AIE

appid_ident_by_dport_first 7669 320 info appid pktproc Application identified by L4 dport first

appid_ident_by_dport 323 12 info appid pktproc Application identified by L4 dport

appid_proc 44483 1861 info appid pktproc The number of packets processed by Application identification

appid_unknown_max_pkts 22 0 info appid pktproc The number of unknown applications caused by max. packets reached

appid_unknown_udp 17 0 info appid pktproc The number of unknown UDP applications after app engine

appid_unknown_fini 144 5 info appid pktproc The number of unknown applications

appid_unknown_fini_empty 9029 377 info appid pktproc The number of unknown applications because of no data

appid_reuse_parent_policy 681 28 info appid pktproc appid reuses parent info for child

nat_static_xlat 9137 381 info nat resource The total number of static NAT translate called

nat_static_release 8995 375 info nat resource The total number of static NAT release called

nat_dynamic_port_xlat 3808 159 info nat resource The total number of dynamic_ip_port NAT translate called

nat_dynamic_port_release 4308 179 info nat resource The total number of dynamic_ip_port NAT release called

dfa_sw 83251 3482 info dfa pktproc The total number of dfa match using software

dfa_sw_min_threshold 69781 2919 info dfa offload Usage of software dfa caused by packet length min threshold

dfa_sw_max_threshold 17 0 info dfa offload Usage of software dfa caused by packet length max threshold

dfa_sw_fpga_not_loaded 13453 562 warn dfa offload Usage of software dfa caused by dfa not loaded into fpga

dfa_request -1 0 info dfa resource The dfa outstanding requests

dfa_fpga 446179 18670 info dfa offload The total requests to FPGA for dfa

dfa_fpga_data 304919219 12759974 info dfa offload The total data size to FPGA for dfa

dfa_session_change 13 0 info dfa offload when getting dfa result from offload, session was changed

tcp_case_1 1 0 info tcp pktproc tcp reassembly case 1

tcp_case_2 8596 359 info tcp pktproc tcp reassembly case 2

tcp_exceed_flow_seg_limit 5 0 warn tcp resource packets dropped due to the limitation on tcp out-of-order queue size

ctd_pkt_queued -28 0 info ctd resource The number of packets queued in ctd

ctd_sml_exit 500 20 info ctd pktproc The number of sessions with sml exit

ctd_sml_exit_detector_i 2249 93 info ctd pktproc The number of sessions with sml exit in detector i

appid_bypass_no_ctd 281 11 info appid pktproc appid bypass due to no ctd

ctd_tcp_bypass 5 0 info ctd pktproc session skip L7 proc in tcp reassembly

ctd_err_bypass 2749 114 info ctd pktproc ctd error bypass

ctd_err_sw 31 0 info ctd pktproc ctd sw error

ctd_switch_decoder 5 0 info ctd pktproc ctd switch decoder

ctd_stop_proc 11923 498 info ctd pktproc ctd stops to process packet

ctd_run_detector_i 14185 593 info ctd pktproc run detector_i

ctd_sml_vm_run_impl_opcodeexit 2744 114 info ctd pktproc SML VM opcode exit

ctd_sml_vm_run_impl_immed8000 1864 77 info ctd pktproc SML VM immed8000

ctd_decode_filter_QP 14 0 info ctd pktproc decode filter QP

ctd_sml_opcode_set_file_type 21537 900 info ctd pktproc sml opcode set file type

ctd_file_forward 6 0 info ctd pktproc The number of file forward found

ctd_token_match_overflow 1781 74 info ctd pktproc The token match overflow

ctd_filter_decode_failure_zip 1 0 error ctd pktproc Number of decode filter failure for zip

ctd_filter_decode_failure_qpdecode 14 0 error ctd pktproc Number of decode filter failure for qpdecode

ctd_bloom_filter_nohit 28160 1178 info ctd pktproc The number of no match for virus bloom filter

ctd_sml_cache_conflict 2 0 info ctd pktproc The number of sml cache conflict

ctd_fwd_session_init 10 0 info ctd pktproc Content forward: number of successful action init

ctd_fwd_session_send 1583 65 info ctd pktproc Content forward: number of successful action send

ctd_fwd_session_fini 10 0 info ctd pktproc Content forward: number of successful action fini

ctd_fwd_session_cancel_send 1 0 info ctd pktproc Content forward: number of cancel requests sent

ctd_fwd_session_with_emlinfo 4 0 info ctd pktproc Content forward: number of emlinfo meta data forwarded from sp

ctd_fwd_session_cancel_rcvr 3 0 info ctd pktproc Content forward: number of cancel requests received

ctd_fwd_err_tcp_state 1 0 info ctd pktproc Content forward error: TCP in establishment when session went away

ctd_fwd_session_hdr_init 4 0 info ctd pktproc Content forward: number of successful action init

fpga_pkt 1 0 info fpga resource The packets held because of requests to FPGA

aho_request 3 0 info aho resource The AHO outstanding requests

aho_fpga 564816 23635 info aho resource The total requests to FPGA for AHO

aho_fpga_data 381746135 15974974 info aho resource The total data size to FPGA for AHO

aho_fpga_state_verify_failed 19 0 info aho pktproc when getting result from fpga, session's state was changed

aho_match_overflow 1 0 info aho pktproc number of aho matches overflow

aho_too_many_matches 13 0 info aho pktproc too many signature matches within one packet

aho_too_many_mid_res 4 0 info aho pktproc too many signature middle results within one packet

aho_sw_min_threshold 48403 2024 info aho pktproc Usage of software AHO caused by packet length min threshold

aho_sw_max_threshold 53 1 info aho pktproc Usage of software AHO caused by packet length max threshold

aho_sw_fpga_unavailable 27752 1160 warn aho pktproc Usage of software AHO caused by fpga unavailable

aho_sw 76208 3188 info aho pktproc The total usage of software for AHO

ctd_exceed_queue_limit 31 0 warn ctd resource The number of packets queued in ctd exceeds per session's limit, action bypass

ctd_predict_queue_deque 583 24 info ctd pktproc ctd predict queue deque

ctd_predict_queue_enque 583 24 info ctd pktproc ctd predict queue got enque due to predict waiting fpp

ctd_predict_ack_request_sent 583 24 info ctd pktproc ctd predict queue ack request sent

ctd_predict_ack_request_rcv 583 24 info ctd pktproc ctd predict queue ack request received

ctd_predict_ack_reply_sent 583 24 info ctd pktproc ctd predict queue ack reply sent

ctd_predict_ack_reply_rcv 583 24 info ctd pktproc ctd predict queue ack reply received

ctd_predict_ack_reply_error 1 0 drop ctd pktproc ctd predict queue ack reply error

ctd_appid_reassign 42831 1791 info ctd pktproc appid was changed

ctd_appid_reset 11917 498 info ctd pktproc go back to appid

ctd_decoder_reassign 5 0 info ctd pktproc decoder was changed

ctd_url_block 15135 632 info ctd pktproc sessions blocked by url filtering

ctd_process 86114 3602 info ctd pktproc session processed by ctd

ctd_pkt_slowpath 619834 25938 info ctd pktproc Packets processed by slowpath

ctd_dns_host_ip_match 71 2 info ctd pktproc Number of HOST name matches IP in DP DNS cache

ctd_dns_host_ip_no_cache 14 0 info ctd pktproc Number of HOST name that does not exist in DP DNS cache

ctd_dns_post_validate 14 0 info ctd pktproc Number of HOST name validation after host name packet

ctd_dns_request 5 0 info ctd pktproc Number of DNS request to MP

ctd_dns_response 5 0 info ctd pktproc Number of DNS response from MP

ha_msg_sent 489609 20488 info ha system HA: messages sent

ha_session_setup_msg_sent 92567 3873 info ha pktproc HA: session setup messages sent

ha_session_teardown_msg_sent 49800 2083 info ha pktproc HA: session teardown messages sent

ha_session_update_msg_sent 342836 14346 info ha pktproc HA: session update messages sent

ha_predict_add_msg_sent 696 29 info ha pktproc HA: predict session add messages sent

ha_predict_delete_msg_sent 705 29 info ha pktproc HA: predict session delete messages sent

ha_predict_update_msg_sent 2 0 info ha pktproc HA: predict session update messages sent

ha_arp_update_msg_sent 131 5 info ha pktproc HA: ARP update messages sent

ha_ipsec_update_msg_sent 2872 120 info ha pktproc HA: IPSec sequence number update messages sent

ha_sess_upd_notsent_unsyncable 37519 1570 info ha system HA session update message not sent: session not syncable

ha_sess_upd_notsent_flow 9089 380 info ha system HA session update message not sent: session type not flow

log_url_cnt 21430 896 info log system Number of url logs

log_urlcontent_cnt 7009 293 info log system Number of url content logs

log_url_req_cnt 28 0 info log system Number of url request logs

log_uid_req_cnt 12682 529 info log system Number of uid request logs

log_vulnerability_cnt 89 3 info log system Number of vulnerability logs

log_fileext_cnt 44 1 info log system Number of file block logs

log_traffic_cnt 59694 2497 info log system Number of traffic logs

log_netflow_cnt 7230 301 info log system Number of netflow records

log_pkt_diag_us 27 1 info log system Time (us) spend on writing packet-diag logs

log_http_hdr_cnt 400 16 info log system Number of HTTP hdr field logs

proxy_url_category_unknown 64 1 info proxy pktproc Number of sessions checked by proxy with unknown url category

uid_ipinfo_rcv 130 4 info uid pktproc Number of ip user info received

url_db_request 28 0 info url pktproc Number of URL database request

url_db_reply 48 1 info url pktproc Number of URL reply

url_request_pkt_drop 2 0 drop url pktproc The number of packets get dropped because of waiting for url category request

url_session_not_in_wait 1 0 error url system The session is not waiting for url

zip_process_total 765 32 info zip pktproc The total number of zip engine decompress process

zip_process_stop 1 0 info zip pktproc The number of zip decompress process stops lack of output buffer

zip_process_failure 1 0 info zip pktproc The number of failures for zip decompress process

zip_process_sw 1063 44 info zip pktproc The total number of zip software decompress process

zip_result_drop 1 0 warn zip pktproc The number of zip results dropped

zip_ctx_reuse 390 16 info zip pktproc The number of reuse of zip contexts

zip_ctx_free_race 1 0 info zip pktproc The number of attempted frees of active zip contexts

zip_hw_in 803797 33635 info zip pktproc The total input data size to hardware zip engine

zip_hw_out 3196593 133765 info zip pktproc The total output data size from hardware zip engine

tcp_modi_q_pkt_alloc 10 0 info tcp pktproc packets allocated by tcp modification queue

tcp_modi_q_pkt_free 10 0 info tcp pktproc packets freed by tcp modification queue

tcp_fin_q_pkt_alloc 2 0 info tcp pktproc packets allocated by tcp FIN queue

tcp_fin_q_pkt_free 2 0 info tcp pktproc packets freed by tcp FIN queue

pkt_nac_result 1010995 42306 info packet resource Packets entered module nac stage result

pkt_flow_np 1930250 80774 info packet resource Packets entered module flow stage np

--------------------------------------------------------------------------------

Total counters shown: 265

--------------------------------------------------------------------------------
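
With 265 counters it is hard to see what stands out. A minimal Python sketch like the following could rank the pasted rows by the rate column and keep only the non-info severities. It assumes every row keeps the `name value rate severity category aspect description` layout from the header above; `parse_counters` and `top_by_rate` are hypothetical helper names, not part of PAN-OS.

```python
import re
from typing import Iterable, List, NamedTuple, Optional, Set


class Counter(NamedTuple):
    name: str
    value: int
    rate: int
    severity: str
    category: str
    aspect: str
    description: str


# Column order assumed from the header: name value rate severity category aspect description
COUNTER_RE = re.compile(
    r"^(\S+)\s+(-?\d+)\s+(-?\d+)\s+(info|warn|error|drop)\s+(\S+)\s+(\S+)\s+(.+)$"
)


def parse_counters(text: str) -> List[Counter]:
    """Parse counter rows; lines that do not look like a row are skipped."""
    counters = []
    for line in text.splitlines():
        m = COUNTER_RE.match(line.strip())
        if m:
            name, value, rate, sev, cat, aspect, desc = m.groups()
            counters.append(Counter(name, int(value), int(rate), sev, cat, aspect, desc))
    return counters


def top_by_rate(
    counters: Iterable[Counter],
    n: int = 10,
    severities: Optional[Set[str]] = None,
) -> List[Counter]:
    """Return the n rows with the highest rate, optionally filtered by severity."""
    rows = [c for c in counters if severities is None or c.severity in severities]
    return sorted(rows, key=lambda c: c.rate, reverse=True)[:n]


# A few rows copied from the output above, plus the header and a separator,
# which the parser is expected to skip.
SAMPLE = """\
name value rate severity category aspect description
--------------------------------------------------------------------------------
pkt_recv 2941289 123084 info packet pktproc Packets received
flow_policy_deny 1439 60 drop flow session Session setup: denied by policy
flow_tcp_non_syn_drop 660 27 drop flow session Packets dropped: non-SYN TCP without session match
aho_sw_fpga_unavailable 27752 1160 warn aho pktproc Usage of software AHO caused by fpga unavailable
"""

if __name__ == "__main__":
    for c in top_by_rate(parse_counters(SAMPLE), n=3, severities={"drop", "warn", "error"}):
        print(f"{c.rate:>8}/s  {c.severity:<5}  {c.name}  ({c.description})")
```

Saving the full counter output to a file and passing its contents to `parse_counters` should work the same way, as long as wrapped description lines are rejoined first.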

show system statistics session

2018-03-16 11:27:32.540 +0100 Warning: pan_hash_init(pan_hash.c:112): nbuckets 100 is not power of 2!

2018-03-16 11:27:32.540 +0100 Warning: pan_hash_init(pan_hash.c:112): nbuckets 100 is not power of 2!

System Statistics: ('q' to quit, 'h' for help)

Device is up : 190 days 10 hours 24 mins 1 sec

Packet rate : 42484/s

Throughput : 111335 Kbps

Total active sessions : 116585

Active TCP sessions : 85556

Active UDP sessions : 19893

Active ICMP sessions : 9237

---------------------------------------------

debug dataplane pool statistics

DP dp0:

Hardware Pools

[ 0] Packet Buffers : 57256/57344 0x8000000410000000

[ 1] Work Queue Entries : 229090/229376 0x8000000417000000

[ 2] Output Buffers : 1009/1024 0x8000000418c00000

[ 3] DFA Result : 4093/4096 0x8000000418d00000

[ 4] Timer Buffers : 4096/4096 0x8000000419100000

[ 5] PAN_FPA_LWM_POOL : 1024/1024 0x8000000419500000

[ 6] ZIP Commands : 1023/1024 0x8000000419540000

[ 7] PAN_FPA_BLAST_PO : 1024/1024 0x8000000419740000

Software Pools

[ 0] software packet buffer 0 ( 512): 32637/32768 0x800000002b105680

[ 1] software packet buffer 1 ( 1024): 32745/32768 0x800000002c125780

[ 2] software packet buffer 2 ( 2048): 32710/32768 0x800000002e145880

[ 3] software packet buffer 3 (33280): 24576/24576 0x8000000032165980

[ 4] software packet buffer 4 (66048): 304/304 0x8000000062d7da80

[ 5] Shared Pool 24 ( 24): 1028204/1060000 0x80000000640a5780

[ 6] Shared Pool 32 ( 32): 1095531/1115000 0x8000000065cf3a00

[ 7] Shared Pool 40 ( 40): 264997/265000 0x800000006833b800

[ 8] Shared Pool 192 ( 192): 1144180/1185000 0x8000000068e5a400

[ 9] Shared Pool 256 ( 256): 105000/105000 0x8000000076bda700

[10] ZIP Results ( 184): 1024/1024 0x800000041b4e2600

[11] CTD AV Block ( 1024): 32/32 0x800000041b606380

[12] Regex Results (11544): 4096/4096 0x800000041b62f100

[13] SSH Handshake State ( 6512): 128/128 0x800000041e54f280

[14] SSH State ( 3200): 1024/1024 0x800000041e61ad80

[15] TCP host connections ( 176): 15/16 0x800000041e93c000

Shared Pools Statistics

User Quota Threshold Min.Alloc Cur.Alloc Max.Alloc Total-Alloc Fail-Thresh Fail-Nomem Data(Pool)-SZ

fptcp_seg 49152 0 0 0 3419 59129744 0 0 16 (24)

inner_decode 7812 0 0 0 0 0 0 0 16 (24)

detector_threat 262144 0 0 31408 58484 14555257606 0 0 24 (24)

vm_vcheck 262144 0 0 0 649 24132223974 0 0 24 (24)

ctd_patmatch 500000 0 0 388 1773 1862835407 0 0 24 (24)

proxy_pktmr 11904 0 0 0 0 0 0 0 16 (24)

vm_field 1114112 0 0 19475 24382 81536719898 0 0 32 (32)

decode_filter 262144 0 0 3 159 90736654 0 0 40 (40)

appid_session 500000 0 0 117 802 982514767 0 0 104 (192)

appid_dfa_state 500000 0 0 186 1585 71329959 0 0 184 (192)

cpat_state 125000 948000 62500 0 0 0 0 0 184 (192)

ctd_flow 500000 0 0 23738 45414 4582010415 0 0 176 (192)

ctd_flow_state 500000 0 0 16651 39486 3773342679 0 0 176 (192)

ctd_dlp_flow 125000 829500 62500 128 602 50664423 0 0 192 (192)

proxy_flow 23808 0 0 0 408 738891 0 0 192 (192)

ssl_hs_st 11904 0 0 0 192 371216 0 0 192 (192)

ssl_key_block 23808 0 0 0 34 242453 0 0 192 (192)

ssl_st 23808 0 0 0 204 371216 0 0 192 (192)

ssl_hs_mac 26188 0 0 0 147 1454612 0 0 variable

timer_chunk 95232 0 0 0 855 14948937 0 0 256 (256)

hash_decode 16384 0 0 0 2 346 0 0 104 (192)

Memory Pool Size 144400KB, start address 0x8000000000000000

alloc size 62804276, max 78691716

fixed buf allocator, size 147862744

sz allocator, page size 32768, max alloc 4096 quant 64

pool 0 element size 64 avail list 10 full list 13

pool 1 element size 128 avail list 2404 full list 87

pool 2 element size 192 avail list 948 full list 2

pool 3 element size 256 avail list 1 full list 5

pool 4 element size 320 avail list 8 full list 553

pool 5 element size 384 avail list 1 full list 0

pool 6 element size 448 avail list 1 full list 0

pool 7 element size 512 avail list 1 full list 0

pool 9 element size 640 avail list 1 full list 0

pool 11 element size 768 avail list 1 full list 0

pool 12 element size 832 avail list 1 full list 0

pool 14 element size 960 avail list 1 full list 0

pool 16 element size 1088 avail list 1 full list 0

pool 32 element size 2112 avail list 1 full list 0

pool 42 element size 2752 avail list 2 full list 0

parent allocator

alloc size 132479600, max 134216304

malloc allocator

current usage 132513792 max. usage 134250496, free chunks 468, total chunks 4512

Mem-Pool-Type MAX.SZ(B) Threshold Min.Alloc Cur.SZ(B) Cur.Alloc Total-Alloc Fail-Thresh Fail-Nomem Local-Reuse(cache)

ctd_dlp_buf 7936992 73933088 3968496 0 0 0 0 0 0 (0)

sml_regfile 108000000 0 0 4583304 63657 13117618417 0 0 598992891 (0)

proxy 71141558 0 0 287340 1981 3989081 0 0 363086 (11)

l7_data 2777088 0 0 13560 5 881816 0 0 402455 (8)

l7_misc 93208648 103506323 46604324 37758440 554819 1018151668 0 0 37289268 (11)

cfg_name_cache 566272 103506323 283136 33480 592 592 0 0 0 (0)

scantracker 491520 88719705 245760 491416 3233 61503687 30750227 0 30750227 (0)

appinfo 14155776 103506323 7077888 14155592 57079 460833 201877 0 201877 (0)

dns 2097152 103506323 1048576 4064 49 1261 0 0 166 (9)

Cache-Type MAX-Entries Cur-Entries Cur.SZ(B) Insert-Failure Mem-Pool-Type

ssl_server_cert 16384 16384 1310720 0 l7_misc

ssl_cert_cn 25000 25000 2056592 0 l7_misc

ssl_cert_cache 1024 2 128 0 proxy

ssl_sess_cache 6000 743 154544 0 proxy

proxy_exclude 1024 5 560 0 proxy

proxy_notify 8192 0 0 0 proxy

ctd_block_answer 16384 1 80 0 l7_misc

username_cache 4096 592 33480 0 cfg_name_cache

threatname_cache 4096 0 0 0 cfg_name_cache

hipname_cache 256 0 0 0 cfg_name_cache

ctd_cp 16384 0 0 0 l7_misc

ctd_driveby 4096 1065 85200 0 l7_misc

ctd_pcap 1024 0 0 0 l7_misc

ctd_sml 8192 8192 393216 0 l7_misc

ctd_url 524288 499961 33271800 0 l7_misc

app_tracker 65536 120 18240 0 l7_misc

threat_tracker 4096 4096 622592 0 l7_misc

scan_tracker 4096 3233 491416 0 scantracker

app_info 65536 57079 14155592 0 appinfo

dns_v4 10000 47 3968 0 dns

dns_v6 10000 0 0 0 dns

dns_id 1024 2 96 0 dns

tcp_mcb 8256 0 0 0 l7_misc
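
To read the Hardware Pools and Software Pools lists more easily, a rough Python sketch like this could turn each `[n] name : x/y address` row into a utilization percentage. It assumes the first number is the count of free buffers and the second is the pool size; that is my reading of the output, not something the output itself states. `Pool` and `parse_pools` are hypothetical names.

```python
import re
from typing import List, NamedTuple


class Pool(NamedTuple):
    index: int
    name: str
    free: int   # assumed: buffers still available
    total: int  # assumed: pool size

    @property
    def used_pct(self) -> float:
        """Percentage of the pool in use, under the free/total assumption."""
        if self.total == 0:
            return 0.0
        return 100.0 * (self.total - self.free) / self.total


# Matches rows such as "[ 0] Packet Buffers : 57256/57344 0x8000000410000000"
POOL_RE = re.compile(r"^\[\s*(\d+)\]\s+(.+?)\s*:\s*(\d+)/(\d+)\s+0x[0-9a-fA-F]+$")


def parse_pools(text: str) -> List[Pool]:
    """Parse pool rows; any other line (headings, tables) is skipped."""
    pools = []
    for line in text.splitlines():
        m = POOL_RE.match(line.strip())
        if m:
            idx, name, free, total = m.groups()
            pools.append(Pool(int(idx), name, int(free), int(total)))
    return pools


# Rows copied from the dp0 and dp1 output in this post.
SAMPLE = """\
Hardware Pools
[ 0] Packet Buffers : 57256/57344 0x8000000410000000
[ 2] Output Buffers : 1009/1024 0x8000000418c00000
Software Pools
[ 5] Shared Pool 24 ( 24): 1028204/1060000 0x80000000640a5780
[10] ZIP Results ( 184): 924/1024 0x8000000417883600
"""

if __name__ == "__main__":
    for p in sorted(parse_pools(SAMPLE), key=lambda p: p.used_pct, reverse=True):
        print(f"{p.used_pct:6.2f}% used  {p.name}")
```

If the free/total reading is right, none of the sampled pools is close to exhaustion, which would point away from buffer depletion as the cause of the higher dataplane load.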

DP dp1:

Hardware Pools

[ 0] Packet Buffers : 259313/262144 0x8000000020c00000

[ 1] Work Queue Entries : 488250/491520 0x8000000410000000

[ 2] Output Buffers : 1009/1024 0x8000000413c00000

[ 3] DFA Result : 4093/4096 0x8000000413d00000

[ 4] Timer Buffers : 4096/4096 0x8000000414100000

[ 5] PAN_FPA_LWM_POOL : 1024/1024 0x8000000414500000

[ 6] ZIP Commands : 1020/1024 0x8000000414540000

[ 7] PAN_FPA_BLAST_PO : 1024/1024 0x8000000414740000

Software Pools

[ 0] software packet buffer 0 ( 512): 48958/49152 0x80000000628d6680

[ 1] software packet buffer 1 ( 1024): 49104/49152 0x8000000064106780

[ 2] software packet buffer 2 ( 2048): 49040/49152 0x8000000067136880

[ 3] software packet buffer 3 (33280): 32667/32768 0x800000006d166980

[ 4] software packet buffer 4 (66048): 432/432 0x80000000ae186a80

[ 5] Shared Pool 24 ( 24): 3576354/3630000 0x80000000afcbe780

[ 6] Shared Pool 32 ( 32): 4166520/4200000 0x80000000b5dad000

[ 7] Shared Pool 40 ( 40): 1049988/1050000 0x80000000beddf200

[ 8] Shared Pool 192 ( 192): 4549907/4600000 0x80000000c19ee800

[ 9] Shared Pool 256 ( 256): 120000/120000 0x80000000f75c3c00

[10] ZIP Results ( 184): 924/1024 0x8000000417883600

[11] CTD AV Block ( 1024): 32/32 0x80000004179a7380

[12] Regex Results (11544): 4093/4096 0x80000004179d0100

[13] SSH Handshake State ( 6512): 128/128 0x800000041b8f0300

[14] SSH State ( 3200): 1024/1024 0x800000041b9bbe00

[15] TCP host connections ( 176): 15/16 0x800000041bcdd000

Shared Pools Statistics

User Quota Threshold Min.Alloc Cur.Alloc Max.Alloc Total-Alloc Fail-Thresh Fail-Nomem Data(Pool)-SZ

fptcp_seg 131072 0 0 0 1227 77490639 0 0 16 (24)

inner_decode 23437 0 0 0 0 0 0 0 16 (24)

detector_threat 1048576 0 0 52903 113868 17342026666 0 0 24 (24)

vm_vcheck 1048576 0 0 0 1354 26572129236 0 0 24 (24)

ctd_patmatch 1500002 0 0 742 2552 2679556916 0 0 24 (24)

proxy_pktmr 35712 0 0 0 0 0 0 0 16 (24)

vm_field 4194304 0 0 33476 44418 102487126472 0 0 32 (32)

decode_filter 1048576 0 0 12 1340 128863078 0 0 40 (40)

appid_session 1500002 0 0 201 1026 1477586292 0 0 104 (192)

appid_dfa_state 1500002 0 0 330 1989 102906322 0 0 184 (192)

cpat_state 375000 3680000 187500 0 0 0 0 0 184 (192)

ctd_flow 1500002 0 0 30590 38964 2494810574 0 0 176 (192)

ctd_flow_state 1500002 0 0 18749 28275 1349386034 0 0 176 (192)

ctd_dlp_flow 375000 3220000 187500 223 846 67550736 0 0 192 (192)

proxy_flow 71424 0 0 0 52 14529 0 0 192 (192)

ssl_hs_st 35712 0 0 0 36 14538 0 0 192 (192)

ssl_key_block 71424 0 0 0 34 14351 0 0 192 (192)

ssl_st 71424 0 0 0 52 14538 0 0 192 (192)

ssl_hs_mac 78566 0 0 0 114 58080 0 0 variable

timer_chunk 95232 0 0 0 278 17243930 0 0 256 (256)

hash_decode 16384 0 0 0 2 574 0 0 104 (192)

Memory Pool Size 455509KB, start address 0x8000000000000000

alloc size 138963412, max 182349644

fixed buf allocator, size 466438584

sz allocator, page size 32768, max alloc 4096 quant 64

pool 0 element size 64 avail list 47 full list 18

pool 1 element size 128 avail list 6238 full list 144

pool 2 element size 192 avail list 2033 full list 0

pool 3 element size 256 avail list 1 full list 0

pool 4 element size 320 avail list 8 full list 554

pool 5 element size 384 avail list 1 full list 0

pool 6 element size 448 avail list 1 full list 0

pool 7 element size 512 avail list 1 full list 0

pool 9 element size 640 avail list 1 full list 0

pool 12 element size 832 avail list 1 full list 0

pool 14 element size 960 avail list 1 full list 0

pool 16 element size 1088 avail list 1 full list 0

pool 32 element size 2112 avail list 1 full list 0

pool 42 element size 2752 avail list 3 full list 0

parent allocator

alloc size 296712816, max 319158896

malloc allocator

current usage 296747008 max. usage 319193088, free chunks 5178, total chunks 14234

Mem-Pool-Type MAX.SZ(B) Threshold Min.Alloc Cur.SZ(B) Cur.Alloc Total-Alloc Fail-Thresh Fail-Nomem Local-Reuse(cache)

ctd_dlp_buf 23811992 233221008 11905996 0 0 0 0 0 0 (0)

sml_regfile 324000432 0 0 6078024 84417 6719528887 0 0 764782031 (3)

proxy 185249344 0 0 131228 1234 385983 0 0 7387 (11)

l7_data 2777088 0 0 13560 5 1246148 0 0 497502 (8)

l7_misc 220086344 326509411 110043172 104934272 1554843 1195183644 0 0 45059449 (11)

cfg_name_cache 566272 326509411 283136 34000 605 605 0 0 0 (0)

scantracker 491520 279865209 245760 0 0 0 0 0 0 (0)

appinfo 14155776 326509411 7077888 14155592 57079 460049 201485 0 201485 (0)

dns 2097152 326509411 1048576 4184 50 1380 0 0 189 (9)

Cache-Type MAX-Entries Cur-Entries Cur.SZ(B) Insert-Failure Mem-Pool-Type

ssl_server_cert 16384 16384 1310720 0 l7_misc

ssl_cert_cn 25000 25000 2056592 0 l7_misc

ssl_cert_cache 1024 0 0 0 proxy

ssl_sess_cache 6000 0 0 0 proxy

proxy_exclude 1024 5 560 0 proxy

proxy_notify 8192 0 0 0 proxy

ctd_block_answer 16384 1 80 0 l7_misc

username_cache 4096 605 34000 0 cfg_name_cache

threatname_cache 4096 0 0 0 cfg_name_cache

hipname_cache 256 0 0 0 cfg_name_cache

ctd_cp 16384 0 0 0 l7_misc

ctd_driveby 4096 1065 85200 0 l7_misc

ctd_pcap 1024 0 0 0 l7_misc

ctd_sml 8192 8192 393216 0 l7_misc

ctd_url 1572864 1499956 100445544 0 l7_misc

app_tracker 65536 120 18240 0 l7_misc

threat_tracker 4096 4096 622592 0 l7_misc

scan_tracker 4096 0 0 0 scantracker

app_info 65536 57079 14155592 0 appinfo

dns_v4 10000 47 4040 0 dns

dns_v6 10000 0 0 0 dns

dns_id 1024 3 144 0 dns

tcp_mcb 8256 29 2088 0 l7_misc

03-16-2018 07:07 AM

If I had to venture a guess, I'd point at this:

Packet rate : 42484/s

It looks like something is pretty chatty: it could be a backup, a torrent, a file copy, and so on.

Because your software pools and buffers all look OK, throughput is 100 Mbps, and there are 116k sessions with roughly 3,300 new connections being set up, I don't think this is an attack.

Does anything unusual show up in the ACC? About 17 hours ago you had a similar event that lasted 2+ hours.

You could take a look at App Scope in the Monitor tab for activity over the last 60 minutes and the last 24 hours.
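To put rough numbers on "pools and buffers look OK", here is a minimal Python sketch that parses the Hardware Pools / Software Pools lines from the pasted output. It assumes the two counters on each line are free/total, so a pool sitting close to its total is nearly idle; that reading is an assumption, not something the output itself states. `parse_pools` and `busiest` are made-up helper names for illustration, not part of PAN-OS.

```python
import re

# Matches lines such as:
#   [ 0] Packet Buffers : 259313/262144 0x8000000020c00000
POOL_LINE = re.compile(r"^\[\s*(\d+)\]\s+(.+?)\s*:\s*(\d+)/(\d+)\s+0x[0-9a-fA-F]+$")


def parse_pools(text):
    """Return a list of dicts, one per pool line found in the pasted output."""
    pools = []
    for raw in text.splitlines():
        m = POOL_LINE.match(raw.strip())
        if not m:
            continue  # skip headers, blank lines, and unrelated tables
        _, name, free, total = m.groups()
        free, total = int(free), int(total)
        used = total - free  # assumption: the first number is the free count
        used_pct = 100.0 * used / total if total else 0.0
        pools.append({"name": name, "free": free, "total": total,
                      "used": used, "used_pct": used_pct})
    return pools


def busiest(pools, n=3):
    """Return the n pools with the highest used percentage."""
    return sorted(pools, key=lambda p: p["used_pct"], reverse=True)[:n]


# A few lines copied from the dp1 section of the output in this thread.
SAMPLE = """\
[ 0] Packet Buffers : 259313/262144 0x8000000020c00000
[ 1] Work Queue Entries : 488250/491520 0x8000000410000000
[10] ZIP Results ( 184): 924/1024 0x8000000417883600
[15] TCP host connections ( 176): 15/16 0x800000041bcdd000
"""

if __name__ == "__main__":
    for p in busiest(parse_pools(SAMPLE)):
        print(f"{p['name']}: {p['used']}/{p['total']} used ({p['used_pct']:.1f}%)")
```

Under that free/total assumption, every pool in the sample is under 10% used, which is consistent with reading the pools as healthy.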

PANgurus - Strata & Prisma Access specialist

03-19-2018 05:11 AM

How do you know that we had a similar event 17 hours ago? I can't see any information about that in the output. Thanks.

03-19-2018 05:37 AM

The 24-hour table in the resource monitor output.

PANgurus - Strata & Prisma Access specialist

11-16-2018 10:59 PM

Can you please tell me how you found the 17th hour?

last digit 72 ------------------24 hour

74-------------------23 hour

If I go down from top to bottom,

35 -------------- is that hour 17?

I am counting hours from top to bottom, and the right-hand side is the most recent hour, right?

Help the community: Like helpful comments and mark solutions.

11-17-2018 08:54 AM

Are these hours numbered 1 to 24, with the topmost line being the latest hour or the oldest?

Help the community: Like helpful comments and mark solutions.

11-19-2018 01:09 AM

Hi @MP18

You're looking too deep.

Each line represents an hour: the first line is the current hour, the next line is the previous hour, and the line below that is an hour earlier still.

Let me show you an example with fewer cores, which may look less messy:

admin@PA-200> show running resource-monitor hour

Resource monitoring sampling data (per hour):

CPU load (%) during last 24 hours:

core 0 1

avg max avg max

* * 1 5 <- current hour (i.e. 9:49 am)

* * 1 17 <- previous hour (i.e. 8:00 - 8:59:59)

* * 1 5 <- hour before that (i.e. 7:00 - 7:59:59)

* * 1 5 <- 6

* * 1 5 <- 5

* * 1 5 <- 4

* * 1 5 <- 3

* * 1 7 <- 2

* * 1 5 <- 1

* * 1 5 <- 0

* * 1 5 <- 23

* * 1 5 <- 22

* * 1 5 <- 21

* * 1 5 <- 20

* * 1 5 <- 19

* * 1 5 <- 18

* * 1 5 <- 17

* * 1 5 <- 16

* * 1 5 <- 15

* * 1 5 <- 14

* * 1 6 <- 13

* * 1 5 <- 12

* * 1 5 <- 11

* * 1 5 <- 10

^ ^ ^ ^ these are CPU average and max numbers, per core

Resource utilization (%) during last 24 hours:

session (average):

0 0 0 0 0 0 0 0 0 0 0 0 0 0 0

0 0 0 0 0 0 0 0 0
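If it helps, the row counting can be sketched in Python. This assumes exactly what is described above: row 0 is the current (still running) hour and each row below it is one hour earlier. `row_to_hour_window` is just an illustrative name, and the date is an example value.

```python
from datetime import datetime, timedelta


def row_to_hour_window(row_index, now):
    """Map a row of the per-hour table to the clock window it covers.

    Assumes row 0 is the current, still-running hour and every following
    row is one full hour earlier.
    """
    top_of_hour = now.replace(minute=0, second=0, microsecond=0)
    start = top_of_hour - timedelta(hours=row_index)
    # The current hour only runs up to "now"; older rows span a full hour.
    end = now if row_index == 0 else start + timedelta(hours=1)
    return start, end


if __name__ == "__main__":
    now = datetime(2018, 11, 19, 9, 49)  # example time, like the 9:49 am row above
    for row in (0, 1, 2, 17):
        start, end = row_to_hour_window(row, now)
        print(f"row {row:2d}: {start:%Y-%m-%d %H:%M} to {end:%H:%M}")
```

So a spike that sits 17 rows below the top row happened roughly 17 hours before the command was run.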

PANgurus - Strata & Prisma Access specialist

11-19-2018 07:27 AM

Got it. I really appreciate you explaining it in more detail.

Help the community: Like helpful comments and mark solutions.

- Mark as New

- Subscribe to RSS Feed

- Permalink

02-06-2019 06:52 PM

I'm curious why you point to the packet rate. That doesn't seem too high for a 5050; I was under the impression that they can handle up to 2,000,000 p/s?

I have a 5050 that has been very taxed: with only App-ID being used to tax the throughput, it was choking at well under the advertised 10 Gbps.

-Matt

02-07-2019 01:32 AM

The PA-5050 can maintain 2,000,000 simultaneous sessions; this is not the same as the packet rate.

It can process up to 120,000 connections per second. The packet rate should be higher, but it depends on which applications are being processed.

Some applications require more dataplane resources than others, and if your load is unusually lopsided towards one application, you may see different results than advertised (performance may be higher or lower depending on the load).

PANgurus - Strata & Prisma Access specialist

02-07-2019 07:29 AM - edited 02-08-2019 03:03 AM