
ML gets stuck at "Pending"


08-01-2018 04:08 PM

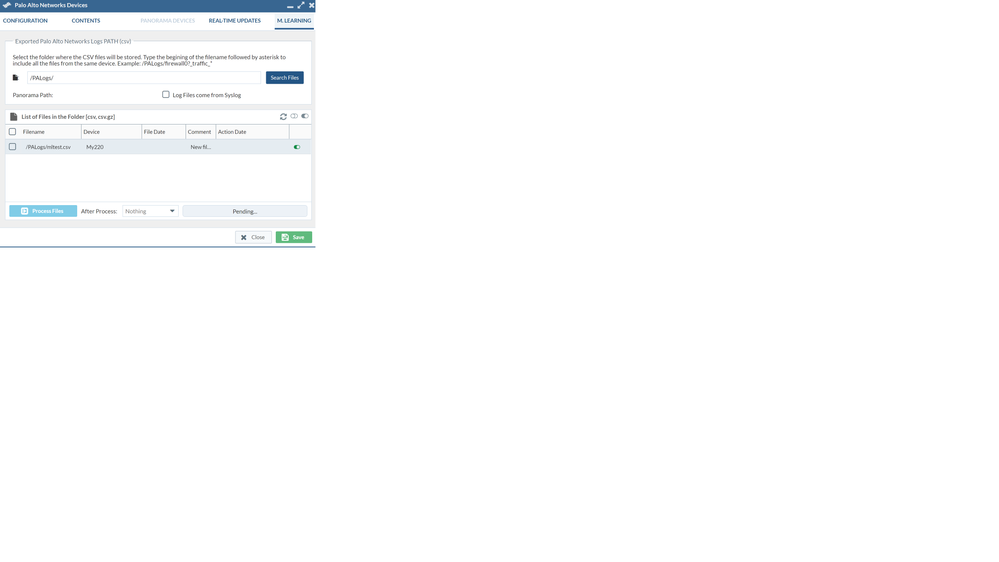

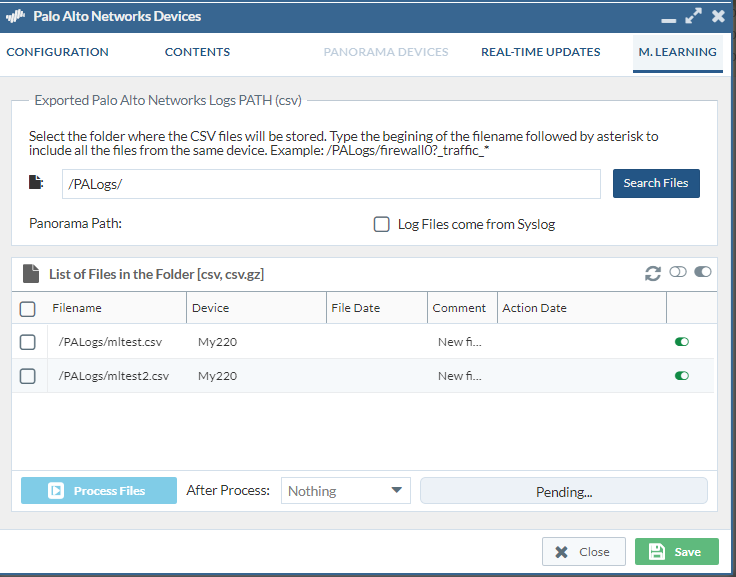

I started by running this command on my PA-220:

scp export log traffic start-time equal 2018/07/30@00:00:00 end-time equal 2018/07/30@23:45:00 to expedition@172.30.200.117:/PALogs/mltest.csv

root@Expedition:/PALogs# ls -l

total 64296

-rw-rw-r-- 1 expedition expedition 65830760 Aug 1 17:35 mltest.csv

drwxr-xr-x 2 www-data www-data 4096 Aug 1 17:45 sparkLocalDir

drwxr-xr-x 2 www-data www-data 4096 Aug 1 17:37 spark-warehouse

root@Expedition:/PALogs#

What am I missing here?

Accepted Solutions


08-06-2018 04:40 PM

I wanted to close out this topic with the solution Didac found this morning. He noticed that the following was set in the /etc/mysql/my.cnf file:

bind-address = 127.0.0.1

That line should be commented out instead, like this:

#bind-address = 127.0.0.1

Once that change was made everything started working again. Didac spent a lot of time with me over the phone to get this resolved. His support dedication is superb. Thank you Didac!
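A plausible reason this setting matters (an inference, not something the thread states outright): with bind-address = 127.0.0.1, MySQL accepts connections only on the loopback interface, while the Spark job log posted later in this thread shows the job connecting to the database at the host's own IP on port 3306. The sketch below is a hypothetical helper, `effective_bind_address`, that reads my.cnf-style text and reports the last uncommented bind-address value. It is a simplification: it ignores option groups such as [mysqld], included files, and trailing inline comments.

```python
from typing import Optional


def effective_bind_address(cnf_text: str) -> Optional[str]:
    """Return the last active bind-address value, or None if none is set.

    Lines starting with '#' or ';' are treated as comments, which is how
    my.cnf-style option files mark them. This helper is illustrative only.
    """
    value = None
    for raw in cnf_text.splitlines():
        line = raw.strip()
        if not line or line.startswith(("#", ";")):
            continue
        key, sep, rest = line.partition("=")
        # Option names may be written with dashes or underscores.
        if sep and key.strip().replace("_", "-") == "bind-address":
            value = rest.strip()
    return value


before = "[mysqld]\nbind-address = 127.0.0.1\n"
after = "[mysqld]\n#bind-address = 127.0.0.1\n"
print(effective_bind_address(before))  # 127.0.0.1
print(effective_bind_address(after))   # None
```

To check a real installation, the file contents could be passed in with `open("/etc/mysql/my.cnf").read()`; MySQL must be restarted before an edited value takes effect.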


08-02-2018 06:26 AM

It may be that later versions provide you more detailed information.


08-02-2018 07:28 AM

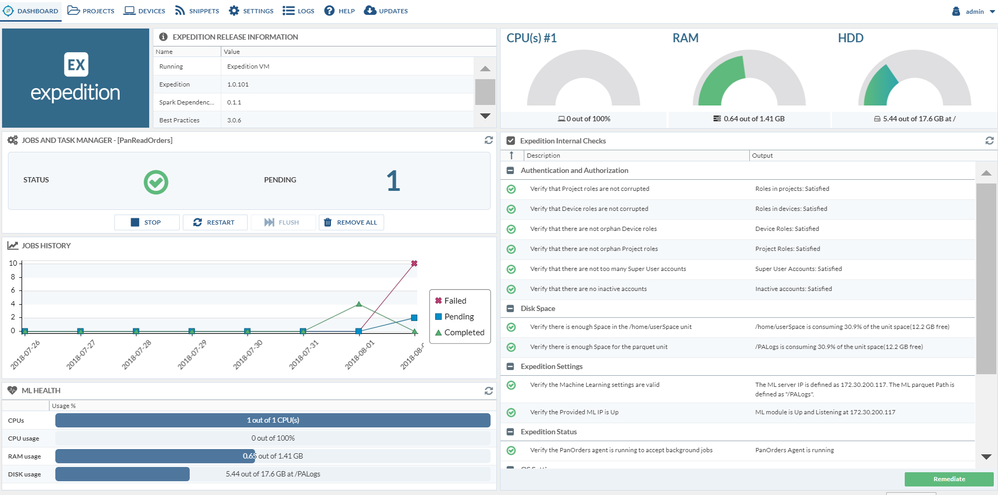

I'm running 1.0.101 and have edited the Apache and PHP ini files to get rid of the red errors that normally appear on the dashboard after the initial install. This is a new installation running on my VMware Workstation.


08-02-2018 07:30 AM

It has been showing this since yesterday afternoon.


08-03-2018 12:58 PM


08-03-2018 02:22 PM

If so, could you send it to fwmigrate at paloaltonetworks dot com?


08-03-2018 02:30 PM

This is all I see.

root@Expedition:/# ls -l

total 97

drwxr-xr-x 2 root root 12288 May 31 03:06 bin

drwxr-xr-x 4 root root 1024 Aug 2 09:15 boot

drwxr-xr-x 19 root root 4000 Aug 3 16:27 dev

drwxr-xr-x 102 root root 4096 Aug 2 09:14 etc

drwxr-xr-x 4 root root 4096 Sep 1 2017 home

lrwxrwxrwx 1 root root 33 Aug 2 09:14 initrd.img -> boot/initrd.img-4.4.0-130-generic

lrwxrwxrwx 1 root root 33 May 31 03:07 initrd.img.old -> boot/initrd.img-4.4.0-127-generic

drwxr-xr-x 23 root root 4096 May 15 09:39 lib

drwxr-xr-x 2 root root 4096 May 15 09:19 lib64

drwx------ 2 root root 16384 Sep 1 2017 lost+found

drwxr-xr-x 3 root root 4096 Sep 1 2017 media

drwxr-xr-x 2 root root 4096 Jul 19 2016 mnt

drwxr-xr-x 3 root root 4096 May 20 02:46 opt

drwxrwxr-x+ 4 www-data www-data 4096 Aug 2 14:24 PALogs

dr-xr-xr-x 226 root root 0 Aug 3 16:27 proc

drwx------ 4 root root 4096 Aug 1 18:15 root

drwxr-xr-x 27 root root 980 Aug 3 16:28 run

drwxr-xr-x 2 root root 12288 May 31 03:06 sbin

drwxr-xr-x 2 root root 4096 May 15 09:40 snap

drwxr-xr-x 2 root root 4096 Jul 19 2016 srv

dr-xr-xr-x 13 root root 0 Aug 3 16:28 sys

drwxrwxrwt 9 root root 4096 Aug 3 16:27 tmp

drwxr-xr-x 10 root root 4096 Sep 1 2017 usr

drwxr-xr-x 14 root root 4096 Sep 1 2017 var

lrwxrwxrwx 1 root root 30 Aug 2 09:14 vmlinuz -> boot/vmlinuz-4.4.0-130-generic

lrwxrwxrwx 1 root root 30 May 31 03:07 vmlinuz.old -> boot/vmlinuz-4.4.0-127-generic

root@Expedition:/# cd /tmp

root@Expedition:/tmp# ls

systemd-private-6d5d630412964751893d41d9f04c1f39-systemd-timesyncd.service-2nvV8R vmware-root

root@Expedition:/tmp# ls -l

total 8

drwx------ 3 root root 4096 Aug 3 16:27 systemd-private-6d5d630412964751893d41d9f04c1f39-systemd-timesyncd.service-2nvV8R

drwx------ 2 root root 4096 Aug 3 16:27 vmware-root

root@Expedition:/tmp#


08-03-2018 02:46 PM

I just ran the job again and got the error file. Sending it now.



08-07-2018 02:52 PM

Thank you, working fine


10-19-2018 04:17 AM

I had this problem too on Expedition 1.0.7.

Similar to the accepted solution, I edited the bind-address line of /etc/mysql/my.cnf, but instead of commenting it out I hard-coded the real (non-localhost) address.

Don't forget to run sudo service mysql restart afterwards.
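After the restart, one way to confirm that MySQL is reachable on the non-loopback address is a plain TCP connection test. The function below is a minimal sketch using only the Python standard library; the name `can_connect` is hypothetical, and a successful connection only shows that something is listening on that port, not that the Expedition database login works.

```python
import socket


def can_connect(host: str, port: int, timeout: float = 3.0) -> bool:
    """Return True if a TCP connection to host:port succeeds within timeout."""
    try:
        # create_connection resolves the host and opens a TCP socket;
        # the context manager closes it again straight away.
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        # Covers refused connections, timeouts, and unreachable hosts.
        return False
```

Calling `can_connect` with the VM's real IP and port 3306 should return True once bind-address no longer restricts MySQL to 127.0.0.1, assuming no firewall blocks the port.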


12-21-2018 04:37 AM

For me it's not working; I still get "Pending" on the Dashboard.

It doesn't work with the bind-address line commented out (127.0.0.1), nor with the real host IP.

This is what I get in the /tmp/error_logCoCo log:

(/opt/Spark/spark/bin/spark-submit --class com.paloaltonetworks.tbd.LogCollectorCompacter --deploy-mode client --supervise /var/www/html/OS/spark/packages/LogCoCo-1.3.0-SNAPSHOT.jar MLServer='172.30.104.33', master='local[3]', debug='false', taskID='8', user='admin', dbUser='root', dbPass='paloalto', dbServer='172.30.104.33:3306', timeZone='Europe/Helsinki', mode='Expedition', input=0009C100289:8.1.0:/data/SnifferPalo_traffic_2018_11_24_last_calendar_day.csv,0009C100289:8.1.0:/data/SnifferPalo_traffic_2018_11_26_last_calendar_day.csv,0009C100289:8.1.0:/data/SnifferPalo_traffic_2018_12_03_last_calendar_day.csv,0009C100289:8.1.0:/data/SnifferPalo_traffic_2018_11_27_last_calendar_day.csv,0009C100289:8.1.0:/data/SnifferPalo_traffic_2018_11_28_last_calendar_day.csv,0009C100289:8.1.0:/data/SnifferPalo_traffic_2018_11_29_last_calendar_day.csv,0009C100289:8.1.0:/data/SnifferPalo_traffic_2018_11_23_last_calendar_day.csv,0009C100289:8.1.0:/data/SnifferPalo_traffic_2018_11_22_last_calendar_day.csv,0009C100289:8.1.0:/data/SnifferPalo_traffic_2018_11_25_last_calendar_day.csv,0009C100289:8.1.0:/data/SnifferPalo_traffic_2018_11_21_last_calendar_day.csv,0009C100289:8.1.0:/data/SnifferPalo_traffic_2018_12_02_last_calendar_day.csv,0009C100289:8.1.0:/data/SnifferPalo_traffic_2018_11_30_last_calendar_day.csv, output='/home/www-data/connections.parquet', tempFolder='/home/www-data'; echo /data/SnifferPalo_traffic_2018_11_24_last_calendar_day.csv; echo /data/SnifferPalo_traffic_2018_11_26_last_calendar_day.csv; echo /data/SnifferPalo_traffic_2018_12_03_last_calendar_day.csv; echo /data/SnifferPalo_traffic_2018_11_27_last_calendar_day.csv; echo /data/SnifferPalo_traffic_2018_11_28_last_calendar_day.csv; echo /data/SnifferPalo_traffic_2018_11_29_last_calendar_day.csv; echo /data/SnifferPalo_traffic_2018_11_23_last_calendar_day.csv; echo /data/SnifferPalo_traffic_2018_11_22_last_calendar_day.csv; echo /data/SnifferPalo_traffic_2018_11_25_last_calendar_day.csv; echo 
/data/SnifferPalo_traffic_2018_11_21_last_calendar_day.csv; echo /data/SnifferPalo_traffic_2018_12_02_last_calendar_day.csv; echo /data/SnifferPalo_traffic_2018_11_30_last_calendar_day.csv; )>> "/tmp/error_logCoCo" 2>>/tmp/error_logCoCo &

---- CREATING SPARK Session:

warehouseLocation:/tmp/spark-warehouse

SLF4J: Class path contains multiple SLF4J bindings.

SLF4J: Found binding in [jar:file:/opt/Spark/extraLibraries/slf4j-nop-1.7.25.jar!/org/slf4j/impl/StaticLoggerBinder.class]

SLF4J: Found binding in [jar:file:/opt/Spark/spark-2.1.1-bin-hadoop2.7/jars/slf4j-log4j12-1.7.16.jar!/org/slf4j/impl/StaticLoggerBinder.class]

SLF4J: See http://www.slf4j.org/codes.html#multiple_bindings for an explanation.

SLF4J: Actual binding is of type [org.slf4j.helpers.NOPLoggerFactory]

+--------------------+-----------+--------+--------------------+

| rowLine| fwSerial|panosver| csvpath|

+--------------------+-----------+--------+--------------------+

|0009C100289:8.1.0...|0009C100289| 8.1.0|/data/SnifferPalo...|

|0009C100289:8.1.0...|0009C100289| 8.1.0|/data/SnifferPalo...|

|0009C100289:8.1.0...|0009C100289| 8.1.0|/data/SnifferPalo...|

|0009C100289:8.1.0...|0009C100289| 8.1.0|/data/SnifferPalo...|

|0009C100289:8.1.0...|0009C100289| 8.1.0|/data/SnifferPalo...|

|0009C100289:8.1.0...|0009C100289| 8.1.0|/data/SnifferPalo...|

|0009C100289:8.1.0...|0009C100289| 8.1.0|/data/SnifferPalo...|

|0009C100289:8.1.0...|0009C100289| 8.1.0|/data/SnifferPalo...|

|0009C100289:8.1.0...|0009C100289| 8.1.0|/data/SnifferPalo...|

|0009C100289:8.1.0...|0009C100289| 8.1.0|/data/SnifferPalo...|

|0009C100289:8.1.0...|0009C100289| 8.1.0|/data/SnifferPalo...|

|0009C100289:8.1.0...|0009C100289| 8.1.0|/data/SnifferPalo...|

+--------------------+-----------+--------+--------------------+

8.1.0:/data/SnifferPalo_traffic_2018_11_29_last_calendar_day.csv,/data/SnifferPalo_traffic_2018_12_02_last_calendar_day.csv,/data/SnifferPalo_traffic_2018_11_24_last_calendar_day.csv,/data/SnifferPalo_traffic_2018_11_23_last_calendar_day.csv,/data/SnifferPalo_traffic_2018_11_26_last_calendar_day.csv,/data/SnifferPalo_traffic_2018_11_21_last_calendar_day.csv,/data/SnifferPalo_traffic_2018_11_28_last_calendar_day.csv,/data/SnifferPalo_traffic_2018_12_03_last_calendar_day.csv,/data/SnifferPalo_traffic_2018_11_27_last_calendar_day.csv,/data/SnifferPalo_traffic_2018_11_30_last_calendar_day.csv,/data/SnifferPalo_traffic_2018_11_22_last_calendar_day.csv,/data/SnifferPalo_traffic_2018_11_25_last_calendar_day.csv

LogCollector&Compacter called with the following parameters:

Parameters for execution

Master[processes]:............ local[3]

User:......................... admin

debug:........................ false

Parameters for Job Connections

Task ID:...................... 8

My IP:........................ 172.30.104.33

Expedition IP:................ 172.30.104.33:3306

Time Zone:.................... Europe/Helsinki

dbUser (dbPassword):.......... root (************)

projectName:.................. demo

Parameters for Data Sources

App Categories (source):........ (Expedition)

CSV Files Path:.................0009C100289:8.1.0:/data/SnifferPalo_traffic_2018_11_24_last_calendar_day.csv,0009C100289:8.1.0:/data/SnifferPalo_traffic_2018_11_26_last_calendar_day.csv,0009C100289:8.1.0:/data/SnifferPalo_traffic_2018_12_03_last_calendar_day.csv,0009C100289:8.1.0:/data/SnifferPalo_traffic_2018_11_27_last_calendar_day.csv,0009C100289:8.1.0:/data/SnifferPalo_traffic_2018_11_28_last_calendar_day.csv,0009C100289:8.1.0:/data/SnifferPalo_traffic_2018_11_29_last_calendar_day.csv,0009C100289:8.1.0:/data/SnifferPalo_traffic_2018_11_23_last_calendar_day.csv,0009C100289:8.1.0:/data/SnifferPalo_traffic_2018_11_22_last_calendar_day.csv,0009C100289:8.1.0:/data/SnifferPalo_traffic_2018_11_25_last_calendar_day.csv,0009C100289:8.1.0:/data/SnifferPalo_traffic_2018_11_21_last_calendar_day.csv,0009C100289:8.1.0:/data/SnifferPalo_traffic_2018_12_02_last_calendar_day.csv,0009C100289:8.1.0:/data/SnifferPalo_traffic_2018_11_30_last_calendar_day.csv

Parquet output path:.......... file:///home/www-data/connections.parquet

Temporary folder:............. /home/www-data

---- AppID DB LOAD:

Application Categories loading...

DONE

Logs of format 7.1.x NOT found

Logs of format 8.0.2 NOT found

Logs of format 8.1.0-beta17 NOT found

Logs of format 8.1.0 found

Logs of format 8.1.0-beta17 NOT found

Size of trafficExtended: 50 MB

[Stage 54:> (0 + 3) / 246]Exception in thread "main" org.apache.spark.SparkException: Job aborted due to stage failure: Task 1 in stage 54.0 failed 1 times, most recent failure: Lost task 1.0 in stage 54.0 (TID 1148, localhost, executor driver): org.apache.spark.SparkException: Failed to execute user defined function(anonfun$18: (string) => bigint)

at org.apache.spark.sql.catalyst.expressions.GeneratedClass$GeneratedIterator.agg_doAggregateWithKeys$(Unknown Source)

at org.apache.spark.sql.catalyst.expressions.GeneratedClass$GeneratedIterator.processNext(Unknown Source)

at org.apache.spark.sql.execution.BufferedRowIterator.hasNext(BufferedRowIterator.java:43)

at org.apache.spark.sql.execution.WholeStageCodegenExec$$anonfun$8$$anon$1.hasNext(WholeStageCodegenExec.scala:377)

at scala.collection.Iterator$$anon$11.hasNext(Iterator.scala:408)

at org.apache.spark.shuffle.sort.BypassMergeSortShuffleWriter.write(BypassMergeSortShuffleWriter.java:126)

at org.apache.spark.scheduler.ShuffleMapTask.runTask(ShuffleMapTask.scala:96)

at org.apache.spark.scheduler.ShuffleMapTask.runTask(ShuffleMapTask.scala:53)

at org.apache.spark.scheduler.Task.run(Task.scala:99)

at org.apache.spark.executor.Executor$TaskRunner.run(Executor.scala:322)

at java.util.concurrent.ThreadPoolExecutor.runWorker(ThreadPoolExecutor.java:1149)

at java.util.concurrent.ThreadPoolExecutor$Worker.run(ThreadPoolExecutor.java:624)

at java.lang.Thread.run(Thread.java:748)

Caused by: java.lang.NumberFormatException: For input string: "fe80::340b:b764:f9ad:a59a"

at java.lang.NumberFormatException.forInputString(NumberFormatException.java:65)

at java.lang.Integer.parseInt(Integer.java:580)

at java.lang.Integer.parseInt(Integer.java:615)

at scala.collection.immutable.StringLike$class.toInt(StringLike.scala:272)

at scala.collection.immutable.StringOps.toInt(StringOps.scala:29)

at com.paloaltonetworks.tbd.LogCollectorCompacter$.com$paloaltonetworks$tbd$LogCollectorCompacter$$IPv4ToLong$1(LogCollectorCompacter.scala:279)

at com.paloaltonetworks.tbd.LogCollectorCompacter$$anonfun$18.apply(LogCollectorCompacter.scala:1002)

at com.paloaltonetworks.tbd.LogCollectorCompacter$$anonfun$18.apply(LogCollectorCompacter.scala:1002)

... 13 more

Driver stacktrace:

at org.apache.spark.scheduler.DAGScheduler.org$apache$spark$scheduler$DAGScheduler$$failJobAndIndependentStages(DAGScheduler.scala:1435)

at org.apache.spark.scheduler.DAGScheduler$$anonfun$abortStage$1.apply(DAGScheduler.scala:1423)

at org.apache.spark.scheduler.DAGScheduler$$anonfun$abortStage$1.apply(DAGScheduler.scala:1422)

at scala.collection.mutable.ResizableArray$class.foreach(ResizableArray.scala:59)

at scala.collection.mutable.ArrayBuffer.foreach(ArrayBuffer.scala:48)

at org.apache.spark.scheduler.DAGScheduler.abortStage(DAGScheduler.scala:1422)

at org.apache.spark.scheduler.DAGScheduler$$anonfun$handleTaskSetFailed$1.apply(DAGScheduler.scala:802)

at org.apache.spark.scheduler.DAGScheduler$$anonfun$handleTaskSetFailed$1.apply(DAGScheduler.scala:802)

at scala.Option.foreach(Option.scala:257)

at org.apache.spark.scheduler.DAGScheduler.handleTaskSetFailed(DAGScheduler.scala:802)

at org.apache.spark.scheduler.DAGSchedulerEventProcessLoop.doOnReceive(DAGScheduler.scala:1650)

at org.apache.spark.scheduler.DAGSchedulerEventProcessLoop.onReceive(DAGScheduler.scala:1605)

at org.apache.spark.scheduler.DAGSchedulerEventProcessLoop.onReceive(DAGScheduler.scala:1594)

at org.apache.spark.util.EventLoop$$anon$1.run(EventLoop.scala:48)

at org.apache.spark.scheduler.DAGScheduler.runJob(DAGScheduler.scala:628)

at org.apache.spark.SparkContext.runJob(SparkContext.scala:1925)

at org.apache.spark.SparkContext.runJob(SparkContext.scala:1938)

at org.apache.spark.SparkContext.runJob(SparkContext.scala:1951)

at org.apache.spark.SparkContext.runJob(SparkContext.scala:1965)

at org.apache.spark.rdd.RDD$$anonfun$collect$1.apply(RDD.scala:936)

at org.apache.spark.rdd.RDDOperationScope$.withScope(RDDOperationScope.scala:151)

at org.apache.spark.rdd.RDDOperationScope$.withScope(RDDOperationScope.scala:112)

at org.apache.spark.rdd.RDD.withScope(RDD.scala:362)

at org.apache.spark.rdd.RDD.collect(RDD.scala:935)

at com.paloaltonetworks.tbd.LogCollectorCompacter$.main(LogCollectorCompacter.scala:1156)

at com.paloaltonetworks.tbd.LogCollectorCompacter.main(LogCollectorCompacter.scala)

at sun.reflect.NativeMethodAccessorImpl.invoke0(Native Method)

at sun.reflect.NativeMethodAccessorImpl.invoke(NativeMethodAccessorImpl.java:62)

at sun.reflect.DelegatingMethodAccessorImpl.invoke(DelegatingMethodAccessorImpl.java:43)

at java.lang.reflect.Method.invoke(Method.java:498)

at org.apache.spark.deploy.SparkSubmit$.org$apache$spark$deploy$SparkSubmit$$runMain(SparkSubmit.scala:743)

at org.apache.spark.deploy.SparkSubmit$.doRunMain$1(SparkSubmit.scala:187)

at org.apache.spark.deploy.SparkSubmit$.submit(SparkSubmit.scala:212)

at org.apache.spark.deploy.SparkSubmit$.main(SparkSubmit.scala:126)

at org.apache.spark.deploy.SparkSubmit.main(SparkSubmit.scala)

Caused by: org.apache.spark.SparkException: Failed to execute user defined function(anonfun$18: (string) => bigint)

at org.apache.spark.sql.catalyst.expressions.GeneratedClass$GeneratedIterator.agg_doAggregateWithKeys$(Unknown Source)

at org.apache.spark.sql.catalyst.expressions.GeneratedClass$GeneratedIterator.processNext(Unknown Source)

at org.apache.spark.sql.execution.BufferedRowIterator.hasNext(BufferedRowIterator.java:43)

at org.apache.spark.sql.execution.WholeStageCodegenExec$$anonfun$8$$anon$1.hasNext(WholeStageCodegenExec.scala:377)

at scala.collection.Iterator$$anon$11.hasNext(Iterator.scala:408)

at org.apache.spark.shuffle.sort.BypassMergeSortShuffleWriter.write(BypassMergeSortShuffleWriter.java:126)

at org.apache.spark.scheduler.ShuffleMapTask.runTask(ShuffleMapTask.scala:96)

at org.apache.spark.scheduler.ShuffleMapTask.runTask(ShuffleMapTask.scala:53)

at org.apache.spark.scheduler.Task.run(Task.scala:99)

at org.apache.spark.executor.Executor$TaskRunner.run(Executor.scala:322)

at java.util.concurrent.ThreadPoolExecutor.runWorker(ThreadPoolExecutor.java:1149)

at java.util.concurrent.ThreadPoolExecutor$Worker.run(ThreadPoolExecutor.java:624)

at java.lang.Thread.run(Thread.java:748)

Caused by: java.lang.NumberFormatException: For input string: "fe80::340b:b764:f9ad:a59a"

at java.lang.NumberFormatException.forInputString(NumberFormatException.java:65)

at java.lang.Integer.parseInt(Integer.java:580)

at java.lang.Integer.parseInt(Integer.java:615)

at scala.collection.immutable.StringLike$class.toInt(StringLike.scala:272)

at scala.collection.immutable.StringOps.toInt(StringOps.scala:29)

at com.paloaltonetworks.tbd.LogCollectorCompacter$.com$paloaltonetworks$tbd$LogCollectorCompacter$$IPv4ToLong$1(LogCollectorCompacter.scala:279)

at com.paloaltonetworks.tbd.LogCollectorCompacter$$anonfun$18.apply(LogCollectorCompacter.scala:1002)

at com.paloaltonetworks.tbd.LogCollectorCompacter$$anonfun$18.apply(LogCollectorCompacter.scala:1002)

... 13 more

/data/SnifferPalo_traffic_2018_11_24_last_calendar_day.csv

/data/SnifferPalo_traffic_2018_11_26_last_calendar_day.csv

/data/SnifferPalo_traffic_2018_12_03_last_calendar_day.csv

/data/SnifferPalo_traffic_2018_11_27_last_calendar_day.csv

/data/SnifferPalo_traffic_2018_11_28_last_calendar_day.csv

/data/SnifferPalo_traffic_2018_11_29_last_calendar_day.csv

/data/SnifferPalo_traffic_2018_11_23_last_calendar_day.csv

/data/SnifferPalo_traffic_2018_11_22_last_calendar_day.csv

/data/SnifferPalo_traffic_2018_11_25_last_calendar_day.csv

/data/SnifferPalo_traffic_2018_11_21_last_calendar_day.csv

/data/SnifferPalo_traffic_2018_12_02_last_calendar_day.csv

/data/SnifferPalo_traffic_2018_11_30_last_calendar_day.csv

Any ideas?

Gernot



12-21-2018 04:41 AM

Thanks for the report.

The issue you are hitting is that the current version of the ML package does not support IPv6.

We are working on the next version of the package, which will support IPv6 for log loading, Rule Enrichment, and Rule Learning.
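The stack trace above fails inside a function named IPv4ToLong with a NumberFormatException for the input "fe80::340b:b764:f9ad:a59a". The Scala source is not shown in this thread, so the following Python sketch only assumes a typical dotted-quad conversion to illustrate the same class of failure; `ipv4_to_long` and `ip_version` are hypothetical names.

```python
import ipaddress


def ipv4_to_long(address: str) -> int:
    """Convert a dotted-quad IPv4 string to an integer (naive version)."""
    result = 0
    for octet in address.split("."):
        # int() raises ValueError on anything that is not a plain number,
        # which is what happens when an IPv6 address reaches this code.
        result = result * 256 + int(octet)
    return result


def ip_version(address: str) -> int:
    """Return 4 or 6 for a valid IP address string."""
    return ipaddress.ip_address(address).version


print(ipv4_to_long("172.30.104.33"))
try:
    ipv4_to_long("fe80::340b:b764:f9ad:a59a")
except ValueError as exc:
    print("conversion failed:", exc)
print(ip_version("fe80::340b:b764:f9ad:a59a"))  # 6
```

An IPv6 address contains no dots, so the whole string reaches the integer parser in one piece, which matches the input string quoted in the exception message.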


12-21-2018 04:58 AM

Ah, IPv6 is the issue?

I will try to modify the logs and remove the IPv6 log entries.

Many thanks for the very quick information.

Kind regards

Gernot
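As a rough illustration of that workaround, the sketch below drops every CSV row in which any field parses as an IPv6 address. It is not an official Expedition tool: the helper names and the three-column sample rows are made up for the example, and real traffic logs have many more columns.

```python
import csv
import io
import ipaddress
from typing import Iterable, Iterator, List


def is_ipv6(value: str) -> bool:
    """Return True if the field is a valid IPv6 address."""
    try:
        return ipaddress.ip_address(value.strip()).version == 6
    except ValueError:
        # Not an IP address at all (for example an action or app name).
        return False


def drop_ipv6_rows(rows: Iterable[List[str]]) -> Iterator[List[str]]:
    """Yield only the rows that contain no IPv6 address in any field."""
    for row in rows:
        if not any(is_ipv6(field) for field in row):
            yield row


# Hypothetical sample data, not the real traffic log layout.
sample = io.StringIO(
    "10.0.0.5,172.30.104.33,allow\n"
    "fe80::340b:b764:f9ad:a59a,ff02::1:2,allow\n"
)
kept = list(drop_ipv6_rows(csv.reader(sample)))
print(kept)  # [['10.0.0.5', '172.30.104.33', 'allow']]
```

For a real file, the same generator can be fed from `csv.reader` over an input file and written back out with `csv.writer`, with both files opened using `newline=""`. Scanning every field keeps the sketch independent of the exact column positions.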
