AWS VM Series Gateway Load Balancers not working

12-10-2020 09:23 PM

Hi All

Has anyone else had a play with the GWLB on AWS?

I know it must be PAN-OS 10.0.2 or higher to work, and I have tested with multiple instances.

As a bump in the wire it works fine until you apply NAT; then it doesn't work at all for any traffic that is NAT'd.

I have an open TAC case for this; they are replicating the fault to work it out, but surely this was all tested before it went public.

I also found that overlay routing breaks traffic flow. It's not documented anywhere that I could find, but what I found is that with overlay routing the firewall processes the GENEVE traffic in the virtual router, whereas without it the traffic is just an in-and-return, non-routed flow.

If you've tinkered with it and actually got inbound/outbound NAT and/or overlay routing to function, please let me know what you did.

Sadly, the documentation just doesn't provide any decent clarity for this feature.

Also, I'm extremely disappointed they haven't integrated this into version 9.1.

I am hopeful they will add it in 9.1.7 in a functional state, as I am not planning to move my clients to 10.0 until the list of known issues is about 1/4 its current size.

12-10-2020 11:27 PM

Hi Craig,

Thanks for your feedback.

How are you deploying the GWLB with VM-Series? Are you using any of the templates provided on our GitHub repo?

https://github.com/PaloAltoNetworks/AWS-GWLB-VMSeries

If you are using a CFT with an autoscale template, then it will create a NAT GW along with other components. The template also takes care of the automatic route population for any new APP VPCs.

Thanks,

Raj

12-11-2020 04:57 AM

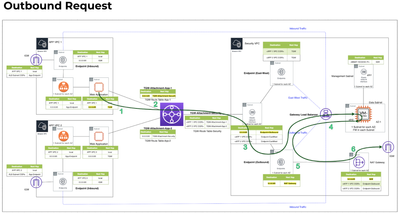

As of this time, break out routing is not supported. The traffic must stay in the Geneve tunnel. In reading this, it appears you are addressing the outbound use case. In that traffic flow, the NatGW must be used as the next hop beyond the GWLBe as depicted in this flow.

12-16-2020 08:18 PM

We are using a NAT gateway. Outbound works just fine until you apply NAT of any sort; just trying to apply any sort of NAT to change the direction of the traffic breaks it.

So if I put in a NAT rule so that traffic to 1.1.1.1 gets DNAT'd to 8.8.8.8, the traffic never exits the firewall.

The key use for this was inbound traffic: redirecting inbound traffic to the correct ALB that lives in another VPC. The traffic seems to get dropped at the firewall. Even though pcaps show it *thinks* it is being forwarded on, it's not.

12-17-2020 09:52 AM

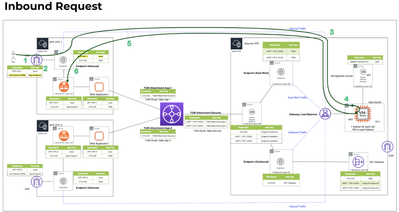

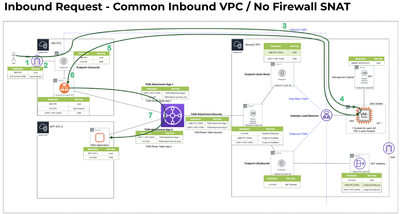

Inbound requires ingress routing to use the GWLB without SNAT. You can do that within the application VPC using a public-facing LB in front of the application.

Or, if you want to have a dedicated inbound VPC, you use the same design as above but move your pool members across the TGW.

If you prefer the traditional load balancer sandwich design, where the firewalls are pool members of the front-door LB and you are going to SNAT/DNAT to the application, you would either use a dedicated set of firewalls or add new Untrust and Trust interfaces to the firewall as ETH3/4 and use those for ingress outside of the GWLB. This is necessary because the GWLB traffic must hairpin inside of the Geneve tunnel; you cannot insert flows into the tunnel from another interface.

12-17-2020 02:05 PM

Neither of those suits the application I'm doing.

We have multiple inbound services that sit behind the firewall.

The current layout is the trust/untrust sandwich, but we would prefer to move away from the NLB design, as NLBs have a capacity limitation with regard to autoscale groups.

The design plan is that we have 'anchor' network addresses for each inbound service. The traffic comes in via the IGW and is steered through the GENEVE tunnel to the Palo, at which point we apply a destination NAT to the actual application load balancer of that service (which is in a different VPC). The traffic is still supposed to egress the GENEVE tunnel, just with a different destination address, but the Palo seems to drop it, even though the Palo pcap believes it is forwarded on (it is seen in the transmit stage with the correct destination IP).

On your comment re: overlay routing, why did they put the feature command in there if they haven't got it working yet? /logic 😕

12-17-2020 02:13 PM

At this point, I would suggest you reach out to your Account Team to engage with one of the Consulting Engineers to discuss over zoom and whiteboard. There is only so much we can accomplish in the message board and a further understanding of your flows is warranted.

01-14-2021 01:45 PM

For those playing at home,

In further discussions with @jmeurer: AWS applies a 5-tuple hash to the traffic to ensure the return path, so applying NAT breaks the traffic flow and AWS drops the traffic.

Overlay routing is not yet functional. Hopefully it will be in 10.0.4 or 10.0.5, and I can then test whether I can get the NAT to work for me that way. I am curious whether the return traffic would exit via the same GENEVE tunnel, given there are no routes through it in that situation. Only time will tell.
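
To illustrate the 5-tuple point above, here is a minimal Python sketch. It is a toy model only: the thread does not describe how the GWLB actually computes or checks its hash, so the `flow_key` function, the use of SHA-256, and the sample addresses and ports are all assumptions made for illustration. The idea it shows is simply that a packet returned with a rewritten destination no longer produces the same key as the packet that was sent to the firewall.

```python
import hashlib
from typing import NamedTuple


class FiveTuple(NamedTuple):
    src_ip: str
    dst_ip: str
    src_port: int
    dst_port: int
    protocol: int  # IP protocol number, e.g. 6 = TCP, 17 = UDP


def flow_key(t: FiveTuple) -> str:
    """Hypothetical flow key: a digest over the five fields of the packet."""
    raw = f"{t.src_ip}|{t.dst_ip}|{t.src_port}|{t.dst_port}|{t.protocol}"
    return hashlib.sha256(raw.encode()).hexdigest()


def dnat(t: FiveTuple, new_dst_ip: str) -> FiveTuple:
    """Model a destination NAT: only the destination address changes."""
    return t._replace(dst_ip=new_dst_ip)


# Packet as it is handed to the firewall (sample values, not from the thread
# apart from the 1.1.1.1 -> 8.8.8.8 rewrite mentioned earlier).
sent_to_firewall = FiveTuple("10.0.1.10", "1.1.1.1", 40000, 53, 17)

# Bump-in-the-wire: the firewall returns the packet unchanged.
returned_unchanged = sent_to_firewall

# With a DNAT rule applied, the returned packet has a new destination.
returned_after_dnat = dnat(sent_to_firewall, "8.8.8.8")

unchanged_matches = flow_key(returned_unchanged) == flow_key(sent_to_firewall)
dnat_matches = flow_key(returned_after_dnat) == flow_key(sent_to_firewall)

print("unchanged packet matches original flow:", unchanged_matches)
print("DNAT'd packet matches original flow:", dnat_matches)
```

In this model the unchanged packet maps back to the original flow and the DNAT'd one does not, which is consistent with the behaviour reported here: inspection works, and anything that is NAT'd gets dropped.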

02-05-2021 04:10 AM - edited 02-05-2021 04:11 AM

Did this issue get fixed?

I am also facing challenges with AWS GWLB. Traffic is sourced from Outside towards Inside.

The traffic monitor is showing the traffic as Outside to Outside.

Not sure why.

02-05-2021 04:37 AM

That depends on how you set up your interfaces. When you have only one interface, your traffic is of course recognized as intrazone traffic, Outside -> Outside. If you want to split it, you have to create subinterfaces, map them to another zone, and adapt your firewall VR routing.
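
The zone behaviour described in this reply can be sketched as a simple lookup. This is only a model of the reasoning, not PAN-OS code or configuration: the `classify` function and the interface and subinterface names (`ethernet1/1`, `ethernet1/1.10`, `ethernet1/1.20`) are hypothetical, and it makes no claim about how PAN-OS actually associates GWLB traffic with subinterfaces.

```python
from typing import Dict


def classify(ingress_if: str, egress_if: str, zone_of: Dict[str, str]) -> str:
    """Derive the source and destination zones from the interfaces a flow uses."""
    src_zone = zone_of[ingress_if]
    dst_zone = zone_of[egress_if]
    kind = "intrazone" if src_zone == dst_zone else "interzone"
    return f"{src_zone} -> {dst_zone} ({kind})"


# Single interface: traffic enters and leaves on the same interface,
# so both zones resolve to the same name.
single = {"ethernet1/1": "Outside"}
single_result = classify("ethernet1/1", "ethernet1/1", single)

# Hypothetical subinterfaces mapped to different zones.
split = {"ethernet1/1.10": "Outside", "ethernet1/1.20": "Inside"}
split_result = classify("ethernet1/1.10", "ethernet1/1.20", split)

print(single_result)
print(split_result)
```

With one interface the model can only ever report Outside -> Outside, so a policy written for Outside -> Inside never matches, which is the symptom reported above.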

02-05-2021 04:46 AM

Hi @tostern

02-07-2021 11:19 AM

I had a 3-interface setup working: GENEVE in/out through eth1/1, then into eth1/2 -> NAT -> out of eth1/3 to the outside.

Traffic would end up passing through the firewall twice.

On the other hand, GWLB seems to break GP, so you cannot run a GP portal/gateway on the outside interface.

02-08-2021 12:22 AM

My design is as below. Let me know if you see any issue.

Server-1 (Outside) ==> TGW ==> SecurityVPC ==> GWLBe ==> Endpoint Service ==> GWLB ==> Palo Alto Outside interface (Eth1/1) ==> PA processing ==> Palo Alto Inside interface (Eth1/2) ==> Server-2 (Inside).

I am not using GP; the traffic is ping/SSH. Whenever I send traffic from Outside to Inside, the traffic logs show it as Outside to Outside, so it does not match the correct policy and is not processed.

02-08-2021 08:07 AM

At this time, GWLB deployments do not support routing outside of the GENEVE interface. The traffic must hairpin back to the GWLB.

Also, there is a known issue with GP not working on a GWLB enabled firewall that will be resolved in a future release.

02-08-2021 10:05 AM

Thanks for letting me know that it's a known issue with GP, any indication on when to expect a fix?

Thanks
