Panorama log-collector

12-22-2021 11:02 AM

We have two Panoramas, newly upgraded to 10.1.3-h1, in HA and running in Panorama mode.

There is one log collector group with two log collectors.

All devices have both collectors in their preference list.

One of the log collectors shows 0% avg log/sec. Is that normal?

Accepted Solutions

01-04-2022 12:28 PM

It is not a bug.

After restarting the vldmgr process on the passive Panorama, it works:

debug software restart process vldmgr

Before we restarted the process, we could see errors in vldmgr.log.

12-23-2021 04:49 AM

Thank you for the post @JeffKim

If the log forwarding preference is set correctly, then this is not expected behavior.

Could you run some basic checks from the CLI to verify that all services are running and to check the status of Elasticsearch:

show system software status

show log-collector-es-cluster health

show log-collector detail

I would also check one of the firewalls that is supposed to send logs to the log collector, to confirm its log forwarding preference list and logging status:

show log-collector preference-list

show logging-status

If none of the above reveals an obvious issue, I would try restarting the logd service on Panorama: debug software restart process logd

Kind Regards

Pavel

12-23-2021 07:54 AM

Thanks Pavel,

But when I run the CLI commands, I see logs arriving at the secondary Panorama.

jkim3@esca-now-mgt-pan(primary-active)> show log-collector-es-cluster health

{

"cluster_name" : "__pan_cluster__",

"status" : "yellow",

"timed_out" : false,

"number_of_nodes" : 2,

"number_of_data_nodes" : 2,

"active_primary_shards" : 312,

"active_shards" : 604,

"relocating_shards" : 0,

"initializing_shards" : 0,

"unassigned_shards" : 20,

"delayed_unassigned_shards" : 0,

"number_of_pending_tasks" : 0,

"number_of_in_flight_fetch" : 0,

"task_max_waiting_in_queue_millis" : 0,

"active_shards_percent_as_number" : 96.7948717948718

}

jkim3@lvnv-now-mgt-pan(secondary-passive)> show log-collector-es-cluster health

{

"cluster_name" : "__pan_cluster__",

"status" : "yellow",

"timed_out" : false,

"number_of_nodes" : 2,

"number_of_data_nodes" : 2,

"active_primary_shards" : 312,

"active_shards" : 604,

"relocating_shards" : 0,

"initializing_shards" : 0,

"unassigned_shards" : 20,

"delayed_unassigned_shards" : 0,

"number_of_pending_tasks" : 0,

"number_of_in_flight_fetch" : 0,

"task_max_waiting_in_queue_millis" : 0,

"active_shards_percent_as_number" : 96.7948717948718

}

jkim3@lvnv-now-mgt-pan(secondary-passive)>

jkim3@esca-now-mgt-pan(primary-active)> debug log-collector log-collection-stats show incoming-logs | match traffic

traffic:1080653607

traffic generation logcount:1080653627 blkcount:260903 addition-rate:2330(per sec)

jkim3@esca-now-mgt-pan(primary-active)>

jkim3@lvnv-now-mgt-pan(secondary-passive)> debug log-collector log-collection-stats show incoming-logs | match traffic

traffic:285020348

traffic generation logcount:285020348 blkcount:67370 addition-rate:843(per sec)

jkim3@lvnv-now-mgt-pan(secondary-passive)>
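The numbers in the output above can be cross-checked with a short script. This is only a sketch: it assumes the health JSON follows the usual Elasticsearch cluster-health semantics (where "yellow" means all primary shards are assigned but some replicas are not) and that the `addition-rate` field is a per-second count. The function names are made up for illustration; the values are copied from the thread.

```python
import json
import re

# Values copied from the "show log-collector-es-cluster health" output above.
HEALTH = json.loads("""
{
  "status": "yellow",
  "active_primary_shards": 312,
  "active_shards": 604,
  "initializing_shards": 0,
  "unassigned_shards": 20,
  "active_shards_percent_as_number": 96.7948717948718
}
""")

# Lines copied from the "incoming-logs | match traffic" output above.
PRIMARY_LINE = "traffic generation logcount:1080653627 blkcount:260903 addition-rate:2330(per sec)"
SECONDARY_LINE = "traffic generation logcount:285020348 blkcount:67370 addition-rate:843(per sec)"


def active_shard_percent(health: dict) -> float:
    """Recompute the active-shard percentage from the raw shard counts."""
    total = (health["active_shards"]
             + health["initializing_shards"]
             + health["unassigned_shards"])
    return 100.0 * health["active_shards"] / total if total else 100.0


def addition_rate(line: str) -> int:
    """Extract the per-second rate from an 'addition-rate:N(per sec)' field."""
    match = re.search(r"addition-rate:(\d+)\(per sec\)", line)
    if match is None:
        raise ValueError(f"no addition-rate field in: {line!r}")
    return int(match.group(1))


primary = addition_rate(PRIMARY_LINE)
secondary = addition_rate(SECONDARY_LINE)
secondary_share = secondary / (primary + secondary)

print(f"active shards: {active_shard_percent(HEALTH):.2f}%")
print(f"secondary share of traffic logs: {secondary_share:.1%}")
```

The recomputed percentage matches the reported `active_shards_percent_as_number`, and the secondary collector is taking roughly a quarter of the incoming traffic logs, so it is receiving logs even though the web interface shows none.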

12-23-2021 08:04 AM - edited 12-23-2021 08:07 AM

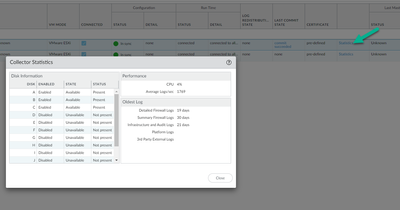

On both the primary and the secondary Panorama, the disk status of the second log collector (serial 00071000xxbb) shows Present/Unavailable.

jkim3@lvnv-now-mgt-pan(secondary-passive)> show log-collector serial-number 00071000xxaa

SearchEngine status: Active

md5sum updated at 2021/12/23 07:16:00

Certificate Status:

Certificate subject Name: 0e070ba7-7aec-4663-ab53-7a2ea571fec6

Certificate expiry at: 2022/03/17 07:54:04

Connected at: 2021/12/17 17:35:30

Custom certificate Used: no

Raid disks

DiskPair A: Enabled, Status: Present/Available, Capacity: 1651 GB

DiskPair B: Enabled, Status: Present/Available, Capacity: 1651 GB

DiskPair C: Enabled, Status: Present/Available, Capacity: 1651 GB

DiskPair D: Disabled, Status: Not present/Unavailable, Capacity: 0 GB

DiskPair E: Disabled, Status: Not present/Unavailable, Capacity: 0 GB

DiskPair F: Disabled, Status: Not present/Unavailable, Capacity: 0 GB

DiskPair G: Disabled, Status: Not present/Unavailable, Capacity: 0 GB

DiskPair H: Disabled, Status: Not present/Unavailable, Capacity: 0 GB

DiskPair I: Disabled, Status: Not present/Unavailable, Capacity: 0 GB

DiskPair J: Disabled, Status: Not present/Unavailable, Capacity: 0 GB

DiskPair K: Disabled, Status: Not present/Unavailable, Capacity: 0 GB

DiskPair L: Disabled, Status: Not present/Unavailable, Capacity: 0 GB

Log collector stats

Incoming logs = 1658/sec

Incoming blocks = 8/min

Queries executed = 0/min

Reports generated = 0/min

detailed storage = 36 days

summary storage = 36 days

infra_audit storage = 36 days

platform storage = 0 days

external storage = 0 days

Last masterkey push status: Unknown

Last masterkey push timestamp: none

jkim3@lvnv-now-mgt-pan(secondary-passive)> show log-collector serial-number 00071000xxbb

Last commit-all: commit succeeded, current ring version 3

SearchEngine status: Active

md5sum updated at ?

Certificate Status:

Certificate subject Name:

Certificate expiry at: none

Connected at: none

Custom certificate Used: no

Raid disks

DiskPair A: Enabled, Status: Present/Unavailable, Capacity: 1651 GB

DiskPair B: Enabled, Status: Present/Unavailable, Capacity: 1651 GB

DiskPair C: Enabled, Status: Present/Unavailable, Capacity: 1651 GB

DiskPair D: Disabled, Status: Not present/Unavailable, Capacity: 0 GB

DiskPair E: Disabled, Status: Not present/Unavailable, Capacity: 0 GB

DiskPair F: Disabled, Status: Not present/Unavailable, Capacity: 0 GB

DiskPair G: Disabled, Status: Not present/Unavailable, Capacity: 0 GB

DiskPair H: Disabled, Status: Not present/Unavailable, Capacity: 0 GB

DiskPair I: Disabled, Status: Not present/Unavailable, Capacity: 0 GB

DiskPair J: Disabled, Status: Not present/Unavailable, Capacity: 0 GB

DiskPair K: Disabled, Status: Not present/Unavailable, Capacity: 0 GB

DiskPair L: Disabled, Status: Not present/Unavailable, Capacity: 0 GB

Log collector stats

Incoming logs = 0/sec

Incoming blocks = 0/min

Queries executed = 0/min

Reports generated = 0/min

detailed storage = 0 days

summary storage = 0 days

infra_audit storage = 0 days

platform storage = 0 days

external storage = 0 days

Last masterkey push status: Unknown

Last masterkey push timestamp: none
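The difference between the two collectors can be picked out of this output mechanically. The sketch below is an illustration only: the regular expressions are written against the line formats shown in this thread, not against any documented output format, and `find_problems` is a hypothetical helper name.

```python
import re

# A few lines copied from the output for serial 00071000xxbb above.
SAMPLE = """\
DiskPair A: Enabled, Status: Present/Unavailable, Capacity: 1651 GB
DiskPair B: Enabled, Status: Present/Unavailable, Capacity: 1651 GB
DiskPair D: Disabled, Status: Not present/Unavailable, Capacity: 0 GB
Incoming logs = 0/sec
"""

DISK_RE = re.compile(
    r"DiskPair (\w+): (Enabled|Disabled), Status: ([^,]+), Capacity: (\d+) GB"
)
RATE_RE = re.compile(r"Incoming logs = (\d+)/sec")


def find_problems(output: str) -> list[str]:
    """Flag enabled disk pairs that are not available and a zero log rate."""
    problems = []
    for match in DISK_RE.finditer(output):
        name, state, status, _capacity = match.groups()
        # Disabled pairs are expected to be unavailable, so skip them.
        if state == "Enabled" and status.strip() != "Present/Available":
            problems.append(f"DiskPair {name} is enabled but {status.strip()}")
    rate = RATE_RE.search(output)
    if rate and int(rate.group(1)) == 0:
        problems.append("collector reports 0 incoming logs/sec")
    return problems


for problem in find_problems(SAMPLE):
    print(problem)
```

Run against the output for 00071000xxaa, the same function would return an empty list, because its enabled disk pairs are Present/Available and its incoming rate is non-zero.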

12-24-2021 04:41 PM

Thank you for the reply, and sorry for the late response @JeffKim

Based on the CLI output you provided, the status of Elasticsearch is not red, and the log collector debug output shows logs arriving, so there seems to be no reason the logs should not appear under the secondary log collector. At this point, I would generate a tech support file from the log collector and open a TAC ticket.

Kind Regards

Pavel

12-28-2021 01:38 PM

Thanks Pavel,

The issue is the preference list: we have only one list, so every firewall sends its logs to the first (active) log collector in that list.

We need to create a new preference list with the 2nd log collector first and the primary log collector second.

Then we need to assign the firewalls across the 1st and 2nd preference lists for load sharing.

The web GUI looks buggy on 10.1.3-h1.
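The load-sharing idea described in this post can be sketched as a small data model. This is not Panorama configuration and not an API: the firewall names and the `build_preference_lists` helper are hypothetical, and only the two collector serial numbers come from the thread. It simply shows two preference lists with opposite collector order and the firewalls split between them.

```python
def build_preference_lists(firewalls, collector_a, collector_b):
    """Split firewalls across two lists whose collector order is reversed."""
    list_one = {"collectors": [collector_a, collector_b], "firewalls": []}
    list_two = {"collectors": [collector_b, collector_a], "firewalls": []}
    # Alternate assignment so each collector is first choice for about half.
    for index, firewall in enumerate(sorted(firewalls)):
        target = list_one if index % 2 == 0 else list_two
        target["firewalls"].append(firewall)
    return [list_one, list_two]


# Hypothetical firewall names; collector serials as masked in the thread.
lists = build_preference_lists(
    ["fw-01", "fw-02", "fw-03", "fw-04"], "00071000xxaa", "00071000xxbb"
)
for number, entry in enumerate(lists, start=1):
    print(number, entry["collectors"], entry["firewalls"])
```

Each firewall still has both collectors available for failover; only the first choice differs between the two groups.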

01-04-2022 05:36 PM

Thank you for sharing the resolution @JeffKim

Would it be possible to share the errors you saw in vldmgr.log?

Thank you in advance

Pavel

01-04-2022 06:45 PM

We got the errors below.

2022-01-04 11:27:24.878 -0800 connection failed for err 111 with vld-0-0. Will start retry 32 in 2000

2022-01-04 11:27:24.878 -0800 connection failed for err 111 with vld-1-0. Will start retry 32 in 2000

2022-01-04 11:27:24.878 -0800 connection failed for err 111 with vld-2-0. Will start retry 32 in 2000

2022-01-04 11:27:25.457 -0800 Error: _pkt_process(pkt.c:1486): vld for segnum 45 not set or not connected. dropping pkt

2022-01-04 11:27:25.457 -0800 Error: _handle_read_event(pkt.c:3543): Error processing read pkt on fd:16 cs:logd for vldmgr:vldmgr

2022-01-04 11:27:25.457 -0800 Error: vldmgr_pkt_process(pkt.c:3638): Error handling read event on fd:16 for vldmgr:vldmgr

2022-01-04 11:27:25.457 -0800 Error: _process_fd_event(pan_vld_mgr.c:2282): Error processing the request from 16 on vld: vldmgr

2022-01-04 11:27:26.878 -0800 Connection to vld-0-0 established

2022-01-04 11:27:26.878 -0800 Connection to vld-1-0 established

2022-01-04 11:27:26.878 -0800 Connection to vld-2-0 established
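To see at a glance which vld connections were failing and which recovered, the log lines can be reduced to a last-known state per connection. The sketch below assumes the two message formats shown above and assumes the error number is a Linux errno, in which case 111 is ECONNREFUSED (connection refused). `vld_states` is a hypothetical helper name.

```python
import re

# Excerpt copied from the vldmgr.log lines above.
LOG = """\
2022-01-04 11:27:24.878 -0800 connection failed for err 111 with vld-0-0. Will start retry 32 in 2000
2022-01-04 11:27:24.878 -0800 connection failed for err 111 with vld-1-0. Will start retry 32 in 2000
2022-01-04 11:27:24.878 -0800 connection failed for err 111 with vld-2-0. Will start retry 32 in 2000
2022-01-04 11:27:26.878 -0800 Connection to vld-0-0 established
2022-01-04 11:27:26.878 -0800 Connection to vld-1-0 established
2022-01-04 11:27:26.878 -0800 Connection to vld-2-0 established
"""

FAILED_RE = re.compile(r"connection failed for err (\d+) with (vld-\d+-\d+)")
OK_RE = re.compile(r"Connection to (vld-\d+-\d+) established")

# Assumption: the number is a Linux errno; 111 is ECONNREFUSED on Linux.
ERRNO_NAMES = {111: "ECONNREFUSED"}


def vld_states(log_text: str) -> dict[str, str]:
    """Return the last state seen in the log for each vld connection."""
    states = {}
    for line in log_text.splitlines():
        failed = FAILED_RE.search(line)
        if failed:
            code = int(failed.group(1))
            name = ERRNO_NAMES.get(code, "unknown")
            states[failed.group(2)] = f"failed (err {code}, {name})"
            continue
        established = OK_RE.search(line)
        if established:
            states[established.group(1)] = "established"
    return states


for vld, state in vld_states(LOG).items():
    print(vld, state)
```

On this excerpt every connection ends in the established state, which is consistent with the collector working again after the vldmgr restart.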

01-04-2022 07:26 PM

Thank you for sharing!

01-05-2022 05:30 AM

Assuming these are M-600 log collectors:

You'll want to upgrade to 10.1.4. Output from 'show system environmentals' is broken: it shows neither the fan RPMs nor that the power supplies are inserted.

01-05-2022 08:16 AM

We are using Panorama virtual appliances, with one Collector Group and two collectors.
