Asymmetric Bandwidth On IPSEC VPNs and MPLS Tunnels

12-12-2017 02:50 PM - edited 12-12-2017 02:52 PM

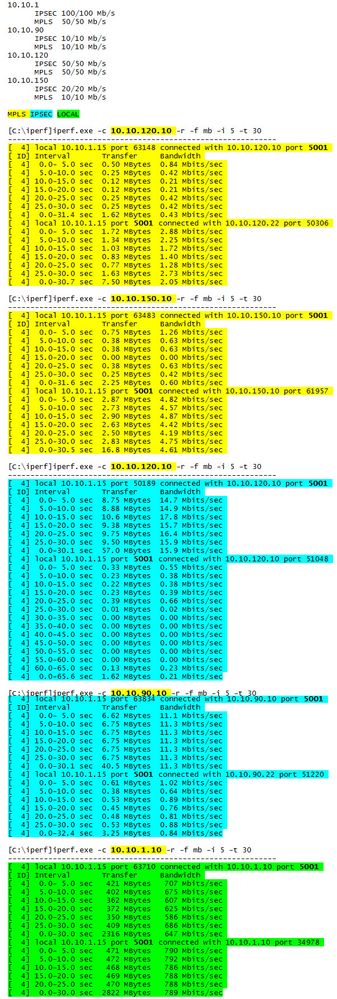

We are seeing unidirectional bandwidth problems on our site-to-site circuits. I am not sure whether this is a PAN problem or a problem with the providers. Interestingly, iperf shows slowness toward the remote site on MPLS, but on IPSEC the slowness is toward the main site (10.10.1). Traceroutes show the traffic is symmetrical on all tests.

All connections are symmetrical business fiber connections. We are using PA-3020s at all sites. All interfaces are configured as L3 access to the ISP's equipment. IPSEC is a site-to-site PAN VPN. MPLS is routed site-to-site connections with PBFs, which fail over automatically to IPSEC if the other side's firewall is not pingable.

12-12-2017 02:51 PM

<additional configuration notes to be added upon request>

12-19-2017 05:22 AM

Do you have the ability to run the test across the MPLS circuit directly, device to device, eliminating all switches and firewalls in the path?

Similarly, on the internet connections, connect directly to the internet circuit via a public IP address and run the test over the internet without IPSEC.

This will validate the circuits first. Then add the other devices back into the path one at a time.

For common causes of this, look for:

- physical errors on connected ports

- half duplex or other mismatches on port connections

- IPSEC errors

Also do a packet capture and look at what the actual traffic shows on both sides.

ACE PanOS 6; ACE PanOS 7; ASE 3.0; PSE 7.0 Foundations & Associate in Platform; Cyber Security; Data Center

12-19-2017 02:42 PM

Hello,

I have to agree with @pulukas: there is probably a speed/duplex mismatch somewhere. I had a circuit put in that drove us crazy for weeks. We used a little utility to send huge (multi-gigabyte) files across the link, which revealed a problem with the throughput. After we looked at all the interfaces, and even the carrier looked at theirs, it turned out to be a colo transceiver that was set to auto instead of hard-set to 100/full.

Good luck.

12-19-2017 05:01 PM

@pulukas & @OtakarKlier thank you for the replies.

We used iperf to test across both the IPSEC and MPLS connections. Unfortunately, no, we don't have the ability to remove all equipment from the MPLS path to test. Our main site is MPLS one-to-many, so this would take down all our connections. If the Palo Alto has a firewall-to-firewall test tool (like Adtran's on their 8044M and TA5k series) I would definitely try that, but I haven't found anything like that.
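For anyone repeating this kind of test, here is a minimal sketch of how forward and reverse results could be compared automatically. It assumes iperf3 (the thread only says iperf) run as a TCP test with JSON output (`-J`), once normally and once with `-R` for the reverse direction. The function names and the 0.5 threshold are made up for illustration, and the numbers in the example are invented, not measurements from this network.

```python
import json


def throughput_mbps(report: dict) -> float:
    """Receiver-side throughput in Mbit/s from one iperf3 TCP JSON report."""
    return report["end"]["sum_received"]["bits_per_second"] / 1e6


def asymmetry(forward: dict, reverse: dict, threshold: float = 0.5) -> dict:
    """Compare two directions; flag the link if slow/fast falls below threshold.

    The threshold is an arbitrary illustration value, not a recommendation.
    """
    fwd = throughput_mbps(forward)
    rev = throughput_mbps(reverse)
    slow, fast = sorted((fwd, rev))
    ratio = slow / fast if fast else 0.0
    return {
        "forward_mbps": fwd,
        "reverse_mbps": rev,
        "ratio": ratio,
        "asymmetric": ratio < threshold,
    }


if __name__ == "__main__":
    # Invented sample reports, trimmed to the one field the sketch reads.
    forward = json.loads('{"end": {"sum_received": {"bits_per_second": 94000000.0}}}')
    reverse = json.loads('{"end": {"sum_received": {"bits_per_second": 11000000.0}}}')
    print(asymmetry(forward, reverse))
```

Logging this per site and per circuit over time would make it easier to line results up against user complaints.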

None of our ports are showing physical errors.

I will have to check the port speed matching.

No IPSEC errors.

We have taken packet captures and Palo Alto is looking into them.

Brian

12-20-2017 01:50 PM

I would suggest opening cases with all ISPs and colos involved. This way you can have them check the interfaces you are not able to see. In my case the root cause was a fiber-to-Ethernet transceiver, and the ISP found it by looking at their routers and seeing CRC errors.

Cheers!

12-21-2017 01:02 PM

@OtakarKlier, yes and no. We have open tickets with PAN and the carriers involved.

We have 12 sites and over the last year have replaced all the previous firewalls with PAN 3020s. During the same timeframe we have been performing circuit upgrades on both MPLS and IPSEC (internet); we still have one MPLS and one IPSEC to convert, actually.

ISP upgrades (all MPLS and some IPSEC) have included Ciena switches on copper ports (CLink has been replacing most of their existing equipment and not doing bandwidth upgrades on existing). We first turned all the sites up on the IPSEC and towards the end have been moving the primary internal connections to the MPLS (due to the ratio of sites on the new vs old firewalls and one MPLS circuit). I have dealt with Cienas before but always on fiber, never copper.

Yes, the majority of the connections have been running 100/Half. Setting the MPLS ports to 100/Full with all the new Cienas has fixed most of the problems (there are some sites where we are now sure the remaining problems are the carrier's). What made this difficult was the disparity in firewall and bandwidth upgrades across sites, along with the sporadic reporting and duration of problems from users. We had not been in the CLI checking physical ports in months, because early on negotiation seemed fine. Nothing in the GUI or our reporting tools showed interface problems, and there were no errors or excessive drops on ports.

We saw some circuit problems before we did the PAN upgrades, but most showed up afterward. Unfortunately, the metrics we monitor (ping, for example) don't reveal limits on high-bandwidth transfers. We had to rely on end users' complaints and the timeframes they provided. Usage per site was also a factor in whether users noticed. Reports of one user but not another having problems also made us look at internal networking. It wasn't until recently that we started reporting on backup timeframes and logging iperf results; this also correlated with Citrix scanning and printing (scanning goes from site to Citrix, while printing goes from Citrix to site). On IPSEC, scanning was bad (inconsistently), and on MPLS, printing was bad (inconsistently). Our primary site is on a Ciena for MPLS but on a Cisco for IPSEC; this site never saw IPSEC problems.

An annoying side note: because we checked port configurations early on and did not see negotiation problems, we did not go back to them, since there were no other L1 interface problems. None of the other conversations about this (ISPs, PAN support, etc.) came back to it either (CLink didn't even bring it up during the upgrades). When I checked the ports based on @pulukas's suggestion, I didn't initially check the negotiation; I checked the errors/drops.

In the GUI you can see the interface status either on the Dashboard (if you have the Interfaces widget added) or under Network -> Interfaces -> Ethernet. In both cases, mouse over the interface and a popup will show the stats. I went looking for this after we figured out the problem: interfaces are green if connected or red if not, and there is no yellow/orange for "UP but problems".
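Since the GUI only offers green and red, a monitoring script could add the missing "yellow" state itself. The sketch below is only an illustration: the `link_health` function is hypothetical, and it assumes you have already collected each interface's state and a speed/duplex string written the way it is in this thread (for example "100/Half"). It does not reflect any actual PAN-OS output format or API.

```python
def link_health(state: str, speed_duplex: str) -> str:
    """Return 'red', 'yellow', or 'green' for one interface.

    state        -- link state, e.g. "up" or "down" (assumed format)
    speed_duplex -- e.g. "100/Half" or "1000/Full" (assumed format)
    """
    if state.strip().lower() != "up":
        return "red"  # link is down
    _speed, _, duplex = speed_duplex.partition("/")
    if duplex.strip().lower() == "half":
        return "yellow"  # up, but half duplex is suspicious on these links
    return "green"


if __name__ == "__main__":
    # Hypothetical interface names and values, for illustration only.
    samples = {
        "ethernet1/1": ("up", "100/Half"),
        "ethernet1/2": ("up", "100/Full"),
        "ethernet1/3": ("down", "100/Full"),
    }
    for name, (state, sd) in samples.items():
        print(name, link_health(state, sd))
```

A check like this would have flagged the 100/Half ports even though they showed no errors or drops.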

12-21-2017 05:27 PM

Glad things are moving in the right direction.

With 100M interfaces there never was a universally accepted standard for auto-neg. I've found it almost never works correctly across different vendors, so setting explicit 100/full on both sides is generally required to avoid a 100/half link.
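To show why one forced side and one auto side tends to end in a mismatch, here is a small model of the usual behavior. It is a simplification with stated assumptions: a port left on auto that gets no negotiation from its partner can still detect the speed but falls back to half duplex, and two auto ports are assumed to negotiate full duplex successfully (which, as noted above, is not guaranteed across vendors). The function names are invented for the sketch.

```python
from typing import Optional, Tuple


def resolved_duplex(local: Optional[str], peer: Optional[str]) -> Tuple[str, str]:
    """Model the duplex each side ends up with.

    Each argument is "full" or "half" when the port is hard-set,
    or None when the port is left on auto-negotiation.
    Returns (local_duplex, peer_duplex).
    """
    if local is None and peer is None:
        # Assumption: both sides negotiate and agree on full duplex.
        return ("full", "full")
    if local is None:
        # Peer is hard-set and does not negotiate; the auto side
        # detects speed only and falls back to half duplex.
        return ("half", peer)
    if peer is None:
        return (local, "half")
    return (local, peer)  # both hard-set: each runs what it was told


def is_mismatch(local: Optional[str], peer: Optional[str]) -> bool:
    """True when the two ends would run different duplex settings."""
    a, b = resolved_duplex(local, peer)
    return a != b


if __name__ == "__main__":
    print("forced full vs auto:", resolved_duplex("full", None), is_mismatch("full", None))
    print("forced full both sides:", resolved_duplex("full", "full"), is_mismatch("full", "full"))
```

In this model the only safe ways to reach full duplex are both sides on auto (when it works) or both sides hard-set to full. Mixing the two leaves one end at half.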

Agreed that some color coding for error conditions like half duplex would be helpful in the GUI.

ACE PanOS 6; ACE PanOS 7; ASE 3.0; PSE 7.0 Foundations & Associate in Platform; Cyber Security; Data Center
