Odd behavior around ISP Failover with Static Route Path Monitoring

01-28-2020 01:48 PM - edited 01-28-2020 03:13 PM

Hi,

I recently ran into unexpected failover behavior with static route path monitoring. We have 3 ISPs, and this past weekend 2 of them went down at different times (hooray). For the purposes of this post, I will be talking about one of them.

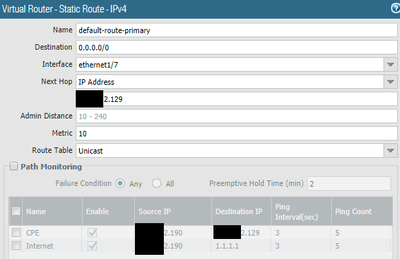

Interestingly, path monitoring worked when the failure occurred: the routes failed over to the secondary (and eventually the tertiary) ISP. However, when the ISPs came back up, the routes did not recover. I'm specifically looking at the behavior of the default route, defined as a static route for destination 0.0.0.0/0.

In my experience with our Internet providers, sometimes the ISP CPE device goes down, and sometimes the service upstream of it goes down. To account for this, I configured 2 destinations to check in the path monitoring box. One is the edge CPE IP (which can be pinged from both inside and outside the network under normal/specific circumstances) and the other is the public DNS resolver 1.1.1.1.

The IP on the PA interface connecting to the ISP is on the same subnet as the IP on the ISP CPE.

While testing and troubleshooting, I could see inbound layer-2 traffic from the edge CPE all the way to the PA interface. However, I saw very little, maybe even no (I did not specifically note it, unfortunately) outbound traffic from that same PA interface. Pings from that interface to the CPE IP failed, while I could ping the CPE across the Internet from another PA interface used for a different ISP. It felt like very weird behavior.

The preemptive hold time is set to what I believe is the default of 2 minutes.

Theories I have based on guessing and reading around the Internet:

1. Since one of the monitored paths is not within the subnet, it could not be determined to be in anything but a failed state. If this is the case, what is my best option for determining whether the ISP upstream of the connecting device is down?

2. Something strange is going on because the next-hop IP is the same as one of the destination IPs being monitored. I don't expect this to be the case, because if so, what is my option for determining whether the connected upstream device is connected yet unavailable for some reason?

3. ...something to do with the interface on the PA having IPs without an explicit subnet defined? Thus, it couldn't know that the CPE IP was in its subnet to check? If this seems valid, then I double down on the question raised in point #1. Edit (#7? 8?): As I come back for yet another edit, point #3 is seeming more and more valid. Both of the ISPs that failed and did not recover successfully have no subnets defined on the IPs associated with their interfaces, while the ISP that didn't fail (or maybe did at some point, but recovered so smoothly that I never saw it) has a subnet attached to the IPs of its interface on the PA device. Hmm.
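Theory 3 can be illustrated with a minimal sketch. The addresses below are hypothetical documentation-range values, not the real ones, and the sketch assumes an interface IP entered without a mask is treated as a /32 host address (that is how Python's `ipaddress` module behaves; I have not confirmed how PAN-OS handles it internally):

```python
import ipaddress

def on_link(interface_addr: str, peer_ip: str) -> bool:
    """Return True if peer_ip falls inside the network implied by the interface address."""
    iface = ipaddress.ip_interface(interface_addr)
    return ipaddress.ip_address(peer_ip) in iface.network

# Hypothetical addresses for illustration only.
CPE_IP = "203.0.113.1"

# Interface IP with an explicit mask: the CPE is inside the connected subnet.
print(on_link("203.0.113.2/29", CPE_IP))

# Interface IP with no mask (assumed to mean /32): the CPE is not on-link.
print(on_link("203.0.113.2", CPE_IP))
```

If that assumption holds, an interface without a mask has no connected route covering the CPE, so reaching the CPE depends entirely on whatever static routes are currently installed.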

Any comments or insights would be immensely appreciated. Thanks!

02-04-2020 12:39 AM

Thanks for your post. I am seeing the exact same behavior, both in 8.1.12 and now in 9.0.6, on a PA-220. My workaround (I'm not sure why it works) was to add another static route on my primary ISP interface for 1.1.1.1/32 with a next hop of the ISP gateway. When I test with this route in place, the route monitor comes up. Without this additional route, it stays in a down state even when the interface is back up and functioning.

I've tested this before and used it in production with clients, and I feel like this is something that broke somewhere in the 8.1.x code and has remained broken in later releases.

02-04-2020 01:17 PM

In my case, this was the result of a Zone Protection Profile applied to my "untrust" zone, which included both ISP interfaces. It was dropping the ICMP replies because of the Strict IP Address Check. I disabled this and now everything works as expected.

05-22-2025 12:22 PM

Hi,

I'm having this exact issue on a PA-3420 (11.1.2-h3).

To get it working, I used the same workaround as @LouisScaringella and configured a static route for the IPs used for path monitoring. Otherwise, the path monitoring probes go out through the active static route (the default route, in my case). From what I understand, path monitoring uses the routing table even if the Palo Alto interface is in the same network as the monitored IP. Seems to be some kind of bug.
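Here is a rough sketch of why the host route helps, assuming the probes simply follow a longest-prefix match over the installed routes. The interface names `isp1` and `isp2` and the route lists are hypothetical, and this is a model of my understanding, not of actual PAN-OS internals:

```python
import ipaddress

def egress_interface(routes, destination):
    """Pick the egress interface by longest-prefix match over (prefix, interface) pairs."""
    dest = ipaddress.ip_address(destination)
    matches = [
        (ipaddress.ip_network(prefix), iface)
        for prefix, iface in routes
        if dest in ipaddress.ip_network(prefix)
    ]
    if not matches:
        return None
    # The most specific (longest) matching prefix wins.
    return max(matches, key=lambda m: m[0].prefixlen)[1]

# After failover: the primary default route has been removed, only the backup remains.
after_failover = [("0.0.0.0/0", "isp2")]

# Same table plus the workaround: a /32 for the monitored IP via the primary ISP.
with_host_route = after_failover + [("1.1.1.1/32", "isp1")]

print(egress_interface(after_failover, "1.1.1.1"))   # isp2
print(egress_interface(with_host_route, "1.1.1.1"))  # isp1
```

Under this model, without the /32 the probe meant to test the primary ISP leaves through the backup ISP, so it can never prove that the primary path has recovered.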

I also had to allow traffic between the Palo Alto and the gateway used for path monitoring (even though they are on the same subnet). As you said, it's due to Zone Protection.

Today my route failed over as expected, but it never failed back because the path monitoring checks kept failing, and I don't quite understand why.

05-22-2025 01:41 PM

So, I did some research, and from what I understand, the "Spoofed IP address" and, mostly, the "Strict IP Address Check" options are what keep path monitoring failing:

Once your path monitoring fails and your main route is removed, the path monitoring ICMP traffic is blocked by one of these Zone Protection options, either because the monitored IPs are no longer routable through your main ISP, or because the main interface IP is not on the interface used by your second route. Something like this.
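To make that concrete, here is a simplified model of the check as I understand it. It assumes the strict check accepts a packet only if the best route back to its source IP points out the same interface the packet arrived on. The function name, interface names, and route lists are hypothetical, and the real check may involve more conditions than this:

```python
import ipaddress

def passes_strict_check(routes, source_ip, ingress_iface):
    """Simplified model: accept only if the best route back to source_ip uses ingress_iface."""
    src = ipaddress.ip_address(source_ip)
    candidates = [
        (ipaddress.ip_network(prefix), iface)
        for prefix, iface in routes
        if src in ipaddress.ip_network(prefix)
    ]
    if not candidates:
        return False  # source is not routable at all
    best_prefix, best_iface = max(candidates, key=lambda c: c[0].prefixlen)
    return best_iface == ingress_iface

# Primary default route removed after the failure; only the backup default remains.
after_failover = [("0.0.0.0/0", "isp2")]

# An ICMP reply from 1.1.1.1 arrives on the primary interface, but the route back
# to 1.1.1.1 now points at isp2, so the modeled check drops it.
print(passes_strict_check(after_failover, "1.1.1.1", "isp1"))  # False

# With a /32 host route via the primary ISP, the same reply is accepted.
print(passes_strict_check(after_failover + [("1.1.1.1/32", "isp1")], "1.1.1.1", "isp1"))  # True
```

If the model is roughly right, it explains the stuck state: the route is down, so the replies are dropped; the replies are dropped, so the route stays down.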

It is still very strange behavior for something that seems like it should be easy to configure.

It seems kind of weird to be forced to choose between path monitoring and security...
