CN-Series Part 2: Why Did We Build the CN-Series?

on 06-29-2021 01:27 PM - edited on 08-05-2021 08:20 AM by jforsythe

Cloud-native adoption is on the rise.

If you haven’t already started thinking about the impact of containers on your network security (NetSec) posture, now’s a good time to start. Gartner predicts that most organizations will be running multiple containerized applications in production in the next three years.

Organic growth of container environments is creating the following challenges for NetSec teams:

Challenges for NetSec Team

1. Lack of visibility and control

You can’t protect what you can’t see. NetSec teams need the same level of visibility and control over their cloud-native environments that they have over their physical and virtual environments. Cloud-native security tools offer many useful protections for containerized applications, but the one thing they do not offer is comprehensive Layer 7 network security and threat prevention.

2. Inconsistent tools and management

Our network security customers are already overwhelmed with too many tools. The last thing you need is additional point solutions to solve this problem. Furthermore, attempting to manage network security across multiple tools and management consoles often leads to gaps in policy and increases overall risk.

3. Lack of automation and scalability

Whatever controls the network security team ends up deploying need to be highly automated and scalable as part of a DevOps workflow, or the DevOps and application teams won’t allow them to be used in the first place. A security tool cannot slow down development.

At this point, you may be asking yourself, “But what about virtual firewalls? Can’t they be deployed at the edge of the Kubernetes (K8s) cluster for visibility and control?”

You’d be right; we could technically deploy virtual firewalls or even hardware firewalls at the edge of container environments. But because these firewalls are deployed outside the K8s cluster, their visibility and control are limited in some crucial ways.

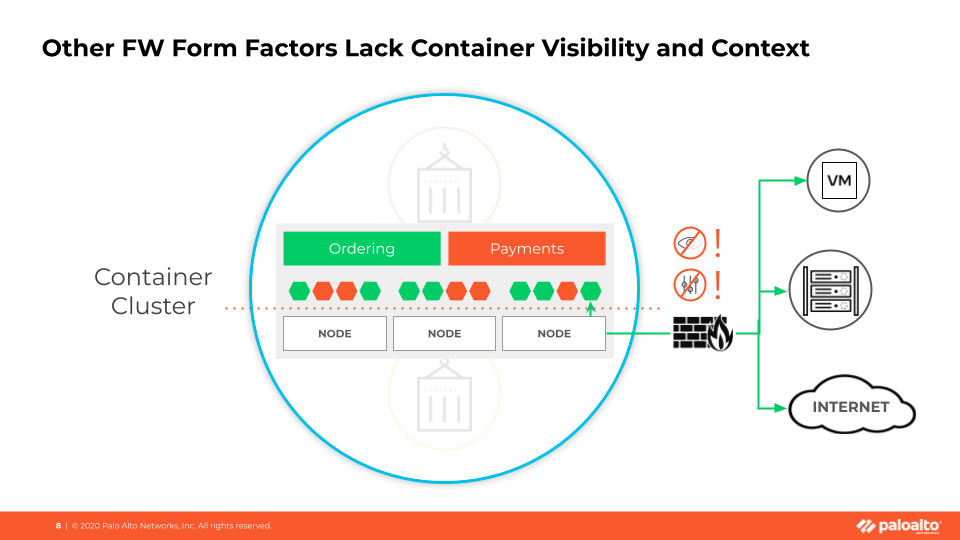

Figure 1: NetSec Challenges for managing K8s Clusters

The most significant gap is that a firewall outside a cluster cannot determine the specific traffic source in the container environment. To understand this, we need to understand a bit of how Kubernetes and containers work.

In the container cluster shown in Figure 1, you can see two applications deployed: Ordering and Payments. The little hexagons represent “pods.” You can think of pods as components of an application. The red pods are all components of the Payments app; the green pods are part of the Ordering app.

A node is a worker machine, either a VM or a physical machine, that contains the services needed to run pods.

Now, due to the way Kubernetes works, pods from different applications can run on the same node.

This is how we arrive at the visibility problem for a firewall located outside the cluster. As traffic leaves the node, each pod’s IP address gets translated to the node’s IP address. A firewall outside the cluster can only see the node IP address, not the individual pod’s IP address.

This means that the firewall can’t tell whether the traffic originated from the Payments app or the Ordering app. The more applications deployed in the cluster, the more complicated this gets.
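To make the ambiguity concrete, here is a minimal Python sketch of the situation described above. It is a toy model, not how Kubernetes or any firewall is implemented: the `Pod` class, the pod names, and all IP addresses are hypothetical, and the translation step is reduced to "the outside world sees the node IP."

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Pod:
    name: str
    namespace: str  # the application the pod belongs to
    pod_ip: str     # address inside the cluster (hypothetical values)
    node_ip: str    # address of the node the pod is scheduled on


def egress_source_ip(pod: Pod) -> str:
    """Source address an outside firewall sees once the pod IP
    has been translated to the node IP."""
    return pod.node_ip


def apps_behind_source_ip(pods):
    """Group applications by the source IP visible outside the cluster."""
    seen = {}
    for pod in pods:
        seen.setdefault(egress_source_ip(pod), set()).add(pod.namespace)
    return seen


# Hypothetical cluster: two apps share the first node.
pods = [
    Pod("payments-1", "payments", "10.244.1.5", "192.168.0.11"),
    Pod("ordering-1", "ordering", "10.244.1.6", "192.168.0.11"),
    Pod("ordering-2", "ordering", "10.244.2.7", "192.168.0.12"),
]

observed = apps_behind_source_ip(pods)
for ip, apps in sorted(observed.items()):
    print(ip, sorted(apps))
```

In this toy cluster, traffic from `192.168.0.11` could belong to either application, so a rule keyed on that source address cannot separate Payments from Ordering.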

I should mention that this visibility challenge isn’t the only limitation of other firewall form factors. A firewall located outside the K8s cluster also lacks critical context about the container environment itself.

For instance, the firewall has no concept of namespaces, which are logical constructs that help delineate between applications in Kubernetes environments. The namespaces are represented in Figure 1 by the red and green labels. In an ideal world, a network security engineer could write a policy that explicitly allows traffic from the Payments application namespace to the database and back. The namespace is the crucial boundary where most organizations want to enforce Layer 7 network security and advanced threat protection, so a firewall must pull this context from the environment and use it.
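As a rough illustration of what a namespace-aware rule looks like, the following Python sketch models a policy keyed on the source namespace rather than the source IP. It is a hypothetical model only: the `Flow` class, the `ALLOWED` set, and the `allow` function are invented for this example and do not reflect CN-Series or PAN-OS policy syntax.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Flow:
    source_ip: str
    source_namespace: Optional[str]  # None when the firewall has no cluster context
    destination: str


# Hypothetical policy: only the payments namespace may reach the database.
ALLOWED = {("payments", "database")}


def allow(flow: Flow) -> bool:
    """Permit a flow only when its namespace is known and explicitly allowed."""
    if flow.source_namespace is None:
        return False  # without namespace context the rule cannot be evaluated
    return (flow.source_namespace, flow.destination) in ALLOWED


# Same node IP in every case (hypothetical address); only the context differs.
from_payments = Flow("192.168.0.11", "payments", "database")
from_ordering = Flow("192.168.0.11", "ordering", "database")
no_context = Flow("192.168.0.11", None, "database")

print(allow(from_payments), allow(from_ordering), allow(no_context))
```

All three flows share one source IP, so only the namespace field lets the policy tell them apart. That is the context a firewall outside the cluster does not have.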

And that’s why we created the CN-Series, the industry’s first container firewall.