DTRH: Scripting Anything and Reaping Data


05-26-2021 05:02 PM - last edited on 04-18-2024 12:00 PM by emgarcia


Overview

Customers are always asking for additional capabilities in the product, and often these feature requests come up during a POC, where having that capability can be the deciding factor in winning the deal. Getting a feature request delivered into the product can be a long cycle, but you may often be able to achieve the desired functionality through the scripting capabilities that exist today.

In this DTRH, we are going to cover a real request from a customer in a POC, walk you through the basics of script creation, and show how we take the data from the script and make it usable in the Cortex Data Lake.

Let’s start with the customer use case. The customer already had some monitoring capabilities on the endpoint that would dump any PowerShell commands to a log file. They wanted the capability to ingest the contents of that log file into the Cortex Data Lake, with the additional goal of being able to search through the content via XQL. If this were a feature request, it would most likely take considerable engineering effort to get it all done, but let’s see how we can accomplish this in 25 lines of Python code.

Creating the Script

There are 2 main components for creating usable scripts in XDR.

- The Script – The set of instructions that will run on the endpoint

- The UI – Setup in the UI to name the script, define its arguments and set other configuration

We will start by taking a look at the script itself, but some aspects of the UI will dictate how we develop this script. Here is a look at the script in full:

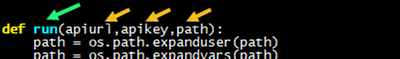

First, the entry point into the script is a function, defined here with ‘def run’; the entry point is chosen and set when we add the script to the UI. The function can then take an arbitrary number of variables that are populated in the UI and passed to the script. In this case we have 3: apiurl, apikey, path.
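As a rough illustration of that shape (a minimal sketch, not the script from the screenshot), an entry point taking the three variables could look like the following. The placeholder body and its return string are assumptions made only so the example runs on its own.

```python
def run(apiurl, apikey, path):
    """Entry point, selected later under "Run by entry point" in the UI.

    The three parameters are filled in from the values entered in the UI
    at run time. This placeholder body only reports what would happen;
    the real steps are covered in the rest of this post.
    """
    return "would send contents of {} to {}".format(path, apiurl)
```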

It’s best practice to have some error checking in your code, such as checking the values of the variables and/or handling possible exceptions while running. In this case, since we are dumping the contents of a file, one of the checks is to make sure the file exists and throw an error if it does not.
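A check like this can be sketched in a few lines. The helper name `check_path` and the error message are hypothetical, chosen for illustration rather than taken from the original script.

```python
import os


def check_path(path):
    """Return the path unchanged, or raise a clear error if it is not a file."""
    if not os.path.isfile(path):
        # Raising here means the failure is reported back as the script
        # result instead of failing later with a less obvious error.
        raise FileNotFoundError("File not found: {}".format(path))
    return path
```

Raising early keeps the error message specific to the missing file, which is what you will want to see in the script output when a run fails on an endpoint.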

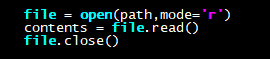

A script will then have a number of lines of code to complete the desired action(s) on the endpoint. For this script, we are going to read in the contents of the file that we eventually want to insert into the Cortex Data Lake.

For many scripts you may not even need to return any data to the data lake, so we could potentially return from the script at this point. In this case we are going to use another feature in the XDR console, the HTTP Event Collector, to pass over data that is then stored in the data lake. This is fairly straightforward, but it requires some additional setup in the UI to enable the collector and also requires massaging the data that we are going to pass in.

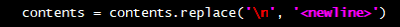

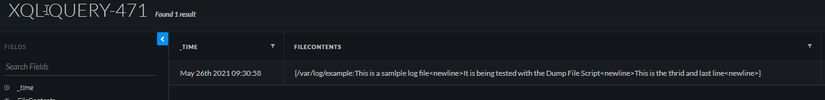

One of the main items to consider when using the HTTP Event Collector is that when we post data to it, every single newline is treated as a break and creates a separate event in XQL. This could be the desired behavior, but in our case we are reading in an entire file that we would like to keep as a single event, so we have to deal with these newlines. Here is the code for this:

What this does is replace every actual newline (\n) with the word ‘<newline>’. This turns the file into one long string with ‘<newline>’ as a separator. Not only does this create the separator and allow you to upload the file as a single event, but through XQL you could potentially reverse the process in the UI and substitute ‘<newline>’ back to something else.
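The substitution itself is a one-liner. A minimal sketch follows; the function names are hypothetical, and the extra handling of Windows line endings (\r\n) is an assumption added here that is not described in the original script.

```python
def flatten_text(text, marker="<newline>"):
    """Collapse multi-line text into a single line for the collector."""
    # Assumption: normalise Windows line endings first so that no stray
    # carriage return is left behind in the uploaded event.
    text = text.replace("\r\n", "\n")
    return text.replace("\n", marker)


def read_flattened(path):
    """Read a text file and return its contents as one flattened string."""
    with open(path, "r") as handle:
        return flatten_text(handle.read())
```

For example, `flatten_text("a\nb")` gives `a<newline>b`, which the collector then stores as one event instead of two.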

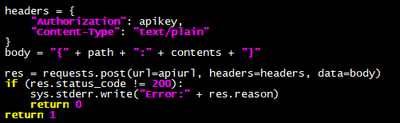

The Python Requests library is a great addition to the language and makes web-based transactions much easier. The following code is valuable and reusable in other scripts that need to store their data in the data lake.

Authorization is done through the API key, which is created by the HTTP Collector and set through the UI. We will show both of these steps later, but for now it’s just important to note that an API key (apikey) was created and is passed in to the script. In addition, the URL for the API (apiurl) that we need to send the request to is handled the same way.

The body holds the actual content going into the data lake, as you can see in the post request, which contains the API URL, the needed headers and the body. There is a check for a 200 response code from the server to validate that the data was delivered without issue. Refer to the Requests Python Library for more information, but knowing these few lines of code lets any script push data into the Cortex Data Lake.
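Put together, the posting step might look roughly like this. It is a sketch under assumptions, not the code from the screenshot: the `Authorization` and `Content-Type: text/plain` headers are what I would expect for a text-format collector, and the function name and the optional `post` parameter (which lets you try the function without a network connection) are hypothetical.

```python
def send_to_collector(apiurl, apikey, body, post=None):
    """POST one event to the HTTP collector and confirm a 200 response."""
    if post is None:
        # Imported lazily so the function can be exercised offline by
        # passing a stand-in callable as `post`.
        import requests
        post = requests.post
    headers = {
        "Authorization": apikey,       # token generated with the collector
        "Content-Type": "text/plain",  # assumed to match the Text log format
    }
    response = post(apiurl, headers=headers, data=body.encode("utf-8"))
    if response.status_code != 200:
        raise RuntimeError(
            "Collector returned HTTP {}".format(response.status_code)
        )
    return True
```

Raising on a non-200 response means a failed upload shows up as a failed script run rather than passing silently.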

Connecting the Script To The UI

Now that we have the completed script, we will configure the UI to use it and fill in some of the pieces we discussed previously in more detail. Let’s walk through this step by step.

- Navigate to Response -> Action Center and select Available Script Library in the left-hand side pane

- Select the button in the top right corner

- Drag the script or select Browse to import the script

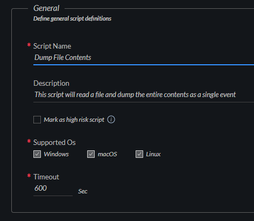

- Fill in the General script definitions

- The Script Name will be used to run on the endpoint, so use a name that makes sense.

- Define the supported OS’s. Since Python is cross platform, many scripts can run across all platforms if it makes sense.

- A timeout can be set for the maximum number of seconds a script should run

- Fill in the Input section

- Run by entry point lets you choose, from a drop-down, the function you wish to call to start the script.

- The variables you defined in the script will show up here and you can select the expected type. Some values can be numbers or Booleans but, in our case, all 3 values will be String.

- I left the Output as Auto Detect for this script. This will probably work in most cases and since we are sending our data for searching in the data lake, this output will most likely only be used in error cases.

- Once finished, click the button in the bottom right-hand corner.

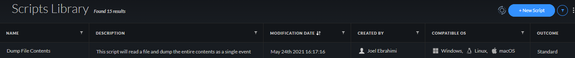

Your script is now created and will show up in the script library with the information populated.

Custom Collector for The Data Lake

This next step is around setting up the server to accept data from the script through a Custom Collector. It should be noted that for this script the goal was to get the data into the data lake. This step would not be needed if the data did not need to be ingested into the data lake.

- Navigate to Configuration and select Data Collection -> Custom Collectors in the left-hand sidebar

- Click to add a new HTTP Collector

Typically, the custom collector is used to ingest data from other vendors' products. We can still take advantage of it, but some of the field names will not match up exactly as we fill in the collection details:

- Name – Collector Name Seen in UI

- Vendor – In this case PAN was used

- Product – Used the name of the script action

- Compression – our data is uncompressed

- Log Format – Sending Text

! It is important to note that the vendor and product name will form the name of the dataset used by XQL. The overall dataset name will look like: <vendor>_<product>_raw

- Click Save & Generate Token

- Copy the Token

! The API Token will pop up and must be copied. Once this window is closed there is no way to access the token again, and if it was not saved a new collector will have to be created.

3. Once the collector is created there is a clickable link to copy the API URL that will be used to post the data to. Copy the link.

Usage

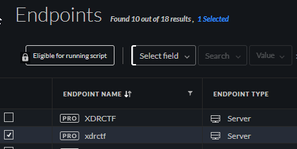

At this point everything has been set up and we can run the script. These steps walk through a sample run. We have the API URL and API Key from the steps above; the two remaining inputs are which file we want to dump and which endpoints we want to dump it from.

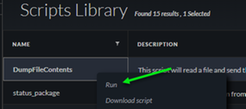

- Navigate to Response -> Action Center and select Available Scripts Library from the left-hand side navigation

- Right click on the DumpFileContents script and select Run

- This will load the script and present you with the variables that you wish to pass into the script

- Path – This is the FULL PATH to the file whose contents you want sent to the data lake.

- Apiurl – This is the full URL to the HTTP Collector we created in the Custom Collector steps

- Apikey – This is the key we copied when we created the HTTP Collector

5. Click Run in the bottom right corner to execute the script on the selected endpoints

6. In the Action Center click on All Actions to see the progress of the script execution. Once it has run on all endpoints you will see the Status updated to Completed.

Results

Now that the script has run, the results will be stored in the Cortex Data Lake and we can query those results through XQL.

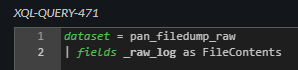

- Navigate to Investigation -> Query Builder and select XQL Search

- In the XQL query window we are going to select the dataset where the data was stored.

! Remember the dataset name will follow the format <vendor>_<product>_raw. These values were set when we created the HTTP Collector

- The contents of the file are stored in the _raw field. We can limit the results to this field and rename it for easier access

- The results show up in the query window. This is a sample 3-line file, and you can see where <newline> was substituted for the actual newline (\n) as previously discussed in this doc.
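If you copy a result value out of the query window, the substitution can also be reversed outside XQL. A minimal sketch, assuming the field value is available as a Python string (the function name is hypothetical):

```python
def restore_newlines(raw_value, marker="<newline>"):
    """Turn the flattened single-line event back into multi-line text."""
    return raw_value.replace(marker, "\n")
```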

Tips/Tricks/Hacks

One thing I learned from running this script over and over is that it is tedious to enter the API URL and API Key every time when these values do not change. Really, only the Path variable should need to be populated on each run. To achieve this, you can hard-code the APIKEY and APIURL into the script. This does put these credentials in the clear on the file system. They are only useful for posting to the HTTP Collector, but it is still a trade-off you should consider.

By adding the apikey and apiurl here in the script, we also omit having those variables passed into the run function as seen below.
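A sketch of that variant, under the same assumptions as before: the URL and token values are placeholders, the header names are my assumption for a text-format collector, and `build_request` is a hypothetical helper split out only so the file handling can be tried without a network call.

```python
# Placeholder values (hypothetical); replace with the collector URL and
# token copied from the UI.
APIURL = "https://example.invalid/collector-url"
APIKEY = "REPLACE_WITH_COLLECTOR_TOKEN"


def build_request(path):
    """Return (url, headers, body) for the file at `path`."""
    with open(path, "r") as handle:
        body = handle.read().replace("\n", "<newline>")
    headers = {"Authorization": APIKEY, "Content-Type": "text/plain"}
    return APIURL, headers, body


def run(path):
    """Entry point that now takes only the file path."""
    import requests  # third-party library, imported where it is used

    url, headers, body = build_request(path)
    response = requests.post(url, headers=headers, data=body.encode("utf-8"))
    return response.status_code == 200
```

With this layout only Path shows up as an input when the script is run from the Action Center.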

Conclusion

In only 25 lines of code, we were able to add a new capability to the product and have the data stored in the Cortex Data Lake for searching. When a customer or prospect asks for something outside the current feature set, it is worth looking at scripting to see whether the request can still be met.


08-05-2022 07:16 AM

Does this require a Pro per TB license?


08-05-2022 07:24 AM

Just executing scripts per se only requires a Pro per Endpoint license. Please check the following doc to see the other requirements as well, such as agent version, permissions on the hub, etc.

Another matter is what you want to do with the scripts. For example, depending on the license you have, you might be able to ingest 3rd party logs, other logs apart from the endpoint logs, or even the enhanced data collection, and for that you might need Pro per TB. So please keep in mind the whole picture of what you really want to achieve.

KR,

Luis


08-05-2022 07:34 AM

Yes, I should have been more specific: does creating, ingesting, and storing the data collected from the HTTP Event Collector require Pro per TB?
