Script failed to run: Timeout Error: Docker code script failed due to timeout, consider changing timeout value for this automation, (2604) (2603)

10-26-2022 03:00 AM

We have a playbook task that uses the SplunkPy integration to run a query on Splunk, but it keeps failing and returns the following error:

#22: Splunk Search Query

Command:

!splunk-search query="index= test blah blah" earliest_time="1666679348" latest_time="1666852148" batch_limit="25000" update_context="true" interval_in_seconds="30"

Reason

Error from SplunkPy is : Script failed to run: Timeout Error: Docker code script failed due to timeout, consider changing timeout value for this automation, (2604) (2603)

I checked the corresponding query on Splunk's end and noticed that it took 11 minutes to run. I then looked through the https://xsoar.pan.dev/docs/reference/integrations/splunk-py doc page for a timeout setting on the Splunk instance in XSOAR. The only one I could find was the enrichment timeout, which defaults to 5 minutes. After I changed that setting to 15 minutes, the splunk-search (SplunkPy) task still kept failing due to timeout, so I suspect I am changing the timeout in the wrong place. Since the error message suggests changing the timeout on the automation, I checked the splunk-search (SplunkPy) automation but couldn't find a timeout setting.

Can someone please assist with this? Thanks!
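
For context on how much data the query covers, here is a small Python sketch that computes the search window from the `earliest_time` and `latest_time` values in the command above. It assumes both values are Unix epoch seconds, which is how they appear but is not stated in the post.

```python
from datetime import datetime, timedelta, timezone

# Values copied from the !splunk-search command in the post.
# Assumption: both are Unix epoch timestamps in seconds.
earliest_time = 1666679348
latest_time = 1666852148

# Length of the time range the search has to scan.
window = timedelta(seconds=latest_time - earliest_time)
print("search window:", window)

# The same bounds rendered as UTC timestamps for readability.
print("earliest (UTC):", datetime.fromtimestamp(earliest_time, tz=timezone.utc).isoformat())
print("latest (UTC):", datetime.fromtimestamp(latest_time, tz=timezone.utc).isoformat())
```

Under that assumption the window works out to 48 hours, which, together with `batch_limit="25000"`, may help explain why the search runs for several minutes.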

10-26-2022 05:27 AM - edited 10-26-2022 05:27 AM

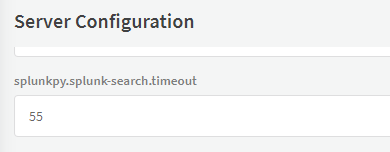

I believe the timeout value that you need to set is going to be a Server Configuration. The first configuration option under the Integrations section in the docs is "<integration_name>.<command_name>.timeout". So for the command that you are running you will need to set a Server Configuration with a key of "SplunkPy.splunk-search.timeout" and a value of 15 (assuming you want the timeout to be 15 minutes).
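
As a minimal illustration of the key format described above, the following Python sketch assembles the Server Configuration key from its two parts. The helper name `timeout_config_key` is hypothetical and only exists for this example; the key is still entered by hand in the XSOAR server settings.

```python
def timeout_config_key(integration_name: str, command_name: str) -> str:
    """Build a key of the form <integration_name>.<command_name>.timeout.

    Hypothetical helper for illustration only; it is not part of XSOAR.
    """
    return f"{integration_name}.{command_name}.timeout"


# Integration name and command taken from this thread; the value is in minutes.
key = timeout_config_key("SplunkPy", "splunk-search")
value_minutes = 15
print(f"{key} = {value_minutes}")
```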

10-26-2022 05:43 AM - edited 10-26-2022 05:44 AM

Thanks for your reply, @tyler_bailey.

So, as an example, assuming the integration name is SplunkPy_ABC and the value is 15, should the parameter be this?

SplunkPy_ABC.splunk-search.timeout

10-26-2022 05:47 AM

The parameter should be "SplunkPy.splunk-search.timeout". The example in the docs says that the first portion should be the integration name. In your example, "SplunkPy_ABC" would be the instance name, which unfortunately won't work. This also means that the timeout value will be used for ALL instances of the SplunkPy integration on the XSOAR server where you configure it. I am not aware of an option to configure the timeout for a specific instance of an integration.

10-26-2022 05:54 AM

Thanks, @tyler_bailey, for clarifying that. I have applied that config and am testing it now. I will let you know how it goes.

10-26-2022 06:09 AM

@tyler_bailey Unfortunately, it still didn't work. After setting SplunkPy.splunk-search.timeout to 15 and saving the config, I re-ran the automation but got the same result.

Do I need to restart the XSOAR instance or do anything else to make it work?

Can you please share more troubleshooting steps?

10-26-2022 06:23 AM

I just tested and I did not need to restart the service. If the server is configured for debug logs then you can check the log file at /var/log/demisto/server.log (or download the log bundle) and search for the SplunkPy command. It should indicate what the timeout is for the command in the logs.

Log from my lab:

2022-10-26 09:20:51.7649 debug Running SplunkPy_instance_1_SplunkPy_splunk-search. Timeout: 55m0s (source: /builds/GOPATH/src/code.pan.run/xsoar/server/services/automation/dockercoderunner.go:336)

Server Configuration:

10-26-2022 06:35 AM

This is what I got, which indicates that the timeout is set to 15 minutes. The Splunk search took 12 minutes.

2022-10-26 13:21:25.5782 debug Waiting for runner request for unnested script [SplunkPy_AWS_ES_SplunkPy_splunk-search], with script timeout [15m0s] (source: /builds/GOPATH/src/code.pan.run/xsoar/server/services/automation/dockercoderunner.go:417)

Any ideas why the automation might still have failed?
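
To check the effective timeout without reading the log by eye, a short Python sketch like the one below can pull the value out of debug lines. It only assumes the two phrasings quoted in this thread ("Timeout: 55m0s" and "script timeout [15m0s]"); other server versions may word these lines differently, and the function names are hypothetical.

```python
import re
from datetime import timedelta

# Covers only the two phrasings quoted in this thread (an assumption about
# the log format, not a documented contract).
TIMEOUT_RE = re.compile(r"(?:Timeout: |script timeout \[)([0-9hms.]+)")

# Seconds per unit for durations such as "55m0s" or "1h30m0s".
# Smaller units (microseconds, nanoseconds) are not handled here.
UNIT_SECONDS = {"h": 3600.0, "m": 60.0, "s": 1.0, "ms": 0.001}


def parse_duration(text):
    """Convert a duration string like '15m0s' into a timedelta."""
    parts = re.findall(r"(\d+(?:\.\d+)?)(h|ms|m|s)", text)
    return timedelta(seconds=sum(float(v) * UNIT_SECONDS[u] for v, u in parts))


def extract_timeout(log_line):
    """Return the timeout found in a debug log line, or None if absent."""
    match = TIMEOUT_RE.search(log_line)
    return parse_duration(match.group(1)) if match else None


# Log lines quoted in this thread, trimmed of the trailing "(source: ...)" part.
lab_line = "2022-10-26 09:20:51.7649 debug Running SplunkPy_instance_1_SplunkPy_splunk-search. Timeout: 55m0s"
user_line = ("2022-10-26 13:21:25.5782 debug Waiting for runner request for unnested script "
             "[SplunkPy_AWS_ES_SplunkPy_splunk-search], with script timeout [15m0s]")

print(extract_timeout(lab_line))
print(extract_timeout(user_line))
```

To scan a real file, the same function could be applied to each line of /var/log/demisto/server.log that mentions the command name.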

10-26-2022 06:42 AM

If the Splunk search is taking an excessive amount of time, then I would assume that it is returning quite a few records that XSOAR will then need to process. It could be that there is not enough time left after the Splunk search returns for XSOAR to finish running the automation. Can you try bumping the timeout up to 25 minutes?
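
The reasoning above can be made concrete with a little arithmetic. This Python sketch assumes, as the reply suggests, that a single timeout covers both waiting for the Splunk search and processing its results; that is a hypothesis in this thread, not confirmed behavior. The function name is hypothetical.

```python
from datetime import timedelta


def remaining_budget(timeout, search_duration):
    """Time left for result processing once the search itself has finished."""
    return timeout - search_duration


# The search reportedly took about 12 minutes (figure from this thread).
search = timedelta(minutes=12)

# Compare the current 15-minute timeout with the suggested 25 minutes.
for timeout_minutes in (15, 25):
    left = remaining_budget(timedelta(minutes=timeout_minutes), search)
    print(f"timeout {timeout_minutes}m leaves {left} for processing results")
```

With a 15-minute timeout only about 3 minutes remain after the search returns, while 25 minutes would leave about 13.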
