OSPF Adjacency Issues

08-12-2013 02:16 PM

We have a Cisco 7301 router that forms OSPF adjacencies with an HA pair of PA-5020 firewalls. Recently I swapped this router out for a different router with the same IPs but a different configuration to test a new WAN connection. OSPF formed just fine with the new router. After testing concluded and I swapped the old router back in, OSPF broke: the adjacencies get stuck in EXSTART. Cisco documents this as a symptom of an MTU mismatch, but that is not the case here.

Failing the firewalls over did not clear this up (I tried twice). Rebooting them did the trick: after the reboot and a failover, the adjacency came up fine.

Aug 11 00:15:14: %OSPF-5-ADJCHG: Process 200, Nbr 10.16.0.12 on GigabitEthernet0/0.200 from EXSTART to DOWN, Neighbor Down: Too many retransmissions

Aug 11 00:16:04: %OSPF-5-ADJCHG: Process 200, Nbr 10.16.1.20 on GigabitEthernet0/0.200 from DOWN to DOWN, Neighbor Down: Ignore timer expired

Aug 11 00:17:14: %OSPF-5-ADJCHG: Process 200, Nbr 10.16.0.12 on GigabitEthernet0/0.200 from EXSTART to DOWN, Neighbor Down: Too many retransmissions

Wondering if anyone else has seen this type of issue, or at least has any suggestions on how to get the adjacency to form without having to reboot.
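A quick way to check whether the EXSTART drops are confined to one neighbor is to tally the ADJCHG messages. The Python sketch below assumes the exact `%OSPF-5-ADJCHG` wording shown above (other IOS releases may phrase it differently); `parse_adjchg` and `exstart_failures` are hypothetical helper names, not part of any Cisco tool.

```python
import re
from collections import Counter
from typing import Iterable, NamedTuple, Optional

# Matches the Cisco IOS %OSPF-5-ADJCHG format quoted in this post.
ADJCHG_RE = re.compile(
    r"%OSPF-5-ADJCHG: Process (?P<process>\d+), Nbr (?P<nbr>[\d.]+) "
    r"on (?P<intf>\S+) from (?P<old>[A-Z0-9]+) to (?P<new>[A-Z0-9]+), "
    r"(?P<reason>.+)$"
)


class AdjChange(NamedTuple):
    process: int
    neighbor: str    # neighbor router ID
    interface: str
    old_state: str
    new_state: str
    reason: str


def parse_adjchg(line: str) -> Optional[AdjChange]:
    """Return the parsed state change, or None if the line is not an ADJCHG message."""
    m = ADJCHG_RE.search(line.strip())
    if m is None:
        return None
    return AdjChange(
        int(m["process"]), m["nbr"], m["intf"], m["old"], m["new"], m["reason"]
    )


def exstart_failures(lines: Iterable[str]) -> Counter:
    """Count EXSTART -> DOWN transitions per neighbor router ID."""
    counts: Counter = Counter()
    for line in lines:
        ev = parse_adjchg(line)
        if ev is not None and ev.old_state == "EXSTART" and ev.new_state == "DOWN":
            counts[ev.neighbor] += 1
    return counts
```

Fed the three log lines above, it would count two EXSTART-to-DOWN drops for 10.16.0.12 and none for 10.16.1.20, whose entry is a DOWN-to-DOWN transition.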

Labels: Networking, Troubleshooting

08-12-2013 02:25 PM

What software version are you on?

08-12-2013 02:40 PM

Do you have jumbo frames enabled? There was an issue seen in release 4.1.3 that was fixed in 4.1.7:

Bug 40409: Palo Alto Networks firewall not able to set up an OSPF link when using P2P to a Cisco router with jumbo frames enabled, but broadcast mode does work. The issue affects P2P mode only and has been fixed.

Another issue was seen in release 4.1.7 and fixed in 4.1.13 and 5.0.4:

Bug 45687: When HA fails over from the active device to the passive device, it took more than a couple of minutes to re-establish the OSPF adjacency when the OSPF database was large. This occurred in rare situations and was due to the new active device sending redundant Database Description (DD) packets. If the neighbor OSPF router cannot handle the duplicate OSPF DD packets, the OSPF database exchange can be aborted multiple times. This issue has been resolved with this release such that the redundant DD packets are not sent.
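Why redundant DD packets can abort the exchange follows from how RFC 2328 (section 10.6) treats Database Description packets in the Exchange state: only an exact repeat of the last packet received is tolerated as a duplicate, and anything else out of sequence raises the SeqNumberMismatch event, which sends the adjacency back to ExStart. A simplified Python sketch of that receive logic, assuming a neighbor that is already in Exchange and ignoring LSA headers, the Loading/Full states, and timers; the class and method names are hypothetical:

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class DDPacket:
    seq: int       # DD sequence number
    init: bool     # I-bit
    more: bool     # M-bit
    ms: bool       # MS-bit: set when the sender is the master
    options: int   # Options field (LSA headers omitted in this sketch)


@dataclass
class ExchangeNeighbor:
    """Neighbor already in the Exchange state (simplified RFC 2328 section 10.6)."""
    we_are_master: bool
    dd_seq: int                          # DD sequence number kept in the neighbor structure
    options: int                         # Options previously received from the neighbor
    last_rx: Optional[DDPacket] = None   # last DD packet accepted from the neighbor

    def _is_duplicate(self, pkt: DDPacket) -> bool:
        # DDPacket is a frozen dataclass, so == compares I, M, MS, options and seq.
        return self.last_rx == pkt

    def receive(self, pkt: DDPacket) -> str:
        if self._is_duplicate(pkt):
            # The master silently discards duplicates; the slave answers
            # by retransmitting the last DD packet it sent.
            return "discard" if self.we_are_master else "retransmit_last"
        # Packets from the peer must carry the opposite role in the MS-bit.
        if pkt.ms == self.we_are_master:
            return "SeqNumberMismatch"
        if pkt.init or pkt.options != self.options:
            return "SeqNumberMismatch"
        # Master expects an ack of its own last packet; slave expects the next number.
        expected = self.dd_seq if self.we_are_master else self.dd_seq + 1
        if pkt.seq != expected:
            return "SeqNumberMismatch"
        self.last_rx = pkt
        if self.we_are_master:
            self.dd_seq += 1
        else:
            self.dd_seq = pkt.seq
        return "accept"
```

For example, a slave that has accepted sequence numbers 101 and 102 treats a second copy of 102 as a duplicate, but a late copy of 101 as a SeqNumberMismatch.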

I am not sure whether you are running into either of these issues.

Thanks

08-13-2013 06:13 AM

Verify which link types are configured on the Cisco router and the firewall. If you connect to the Cisco router with Ethernet cables, it is recommended to set the link type to "broadcast", not "p2p" or "p2mp".

Also, graceful restart is always recommended when deploying OSPF in a cluster, but OSPF “graceful-restart” is currently not supported on the PAN firewall. Because of that, routes are not retained in the FIB once an OSPF neighbor goes down, and since those routes have been purged, traffic that relies on them will be incorrectly forwarded out the default route or dropped.

08-13-2013 11:56 AM

Can you check whether there is a cleanup (deny-all or same-zone deny) rule at the bottom of the rulebase?

08-14-2013 07:22 AM

Thanks for the posts so far.

There are no deny rules at the bottom of the rulebase, and I don't see any blocked traffic from the router or the firewall while the adjacency is trying to establish.

We are also not using graceful restart, and the PA interfaces are set to broadcast.

08-14-2013 07:26 AM

We need to reproduce the issue and look at the logs on all three devices (the "routed" logs on both firewalls in the PAN cluster, plus the Cisco router) to investigate the root cause.

09-17-2013 02:24 PM

I am running into the same issue on an A/P HA pair of PA-2020s uplinked to HP L3 switches. In my case, rather than rebooting, I run 'debug routing reset'.

At that point the adjacency re-establishes and all is well. One thing I did notice: after the routed reset, an additional log entry shows 2-way communication with the peer, followed by the OSPF full-adjacency entry. I do not get the 2-way entry after HA events.

09-17-2013 10:11 PM

Hello,

Even if the MTU seems to be the same on both devices, you probably have an MTU mismatch (that's what the log suggests).

On the Cisco device, you can add the following command under the "faulty" OSPF interface:

ip ospf mtu-ignore

The equivalent command should also be present on the PA device, except that PA doesn't support this feature!

REM: PA team, please add support for this feature on your devices.

As far as I can see, you're using a subinterface on the PA, so its MTU will not match the physical interface of your Cisco device...

PS: If you are using a subinterface on the Cisco device as well, the MTU should match.
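The check behind this symptom is defined in RFC 2328 (section 10.6): a router rejects a Database Description packet whose Interface MTU field is larger than the IP datagram it can receive on that interface without fragmentation. The minimal Python sketch below models only that check; the interface names and MTU values are hypothetical, and `mtu_ignore` stands in for Cisco's `ip ospf mtu-ignore`.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class OspfInterface:
    name: str
    ip_mtu: int               # largest IP datagram accepted without fragmentation
    mtu_ignore: bool = False  # models Cisco's "ip ospf mtu-ignore"


def accepts_dd(local: OspfInterface, advertised_mtu: int) -> bool:
    """RFC 2328 10.6: reject a DD whose Interface MTU exceeds what we can receive."""
    if local.mtu_ignore:
        return True
    return advertised_mtu <= local.ip_mtu


def dd_exchange_blocked(a: OspfInterface, b: OspfInterface) -> bool:
    """True if either side rejects the other's DD packets (MTU check only)."""
    # Each router advertises its own interface MTU in the DD packets it sends.
    return not (accepts_dd(a, b.ip_mtu) and accepts_dd(b, a.ip_mtu))
```

Because the check is one-directional, only the side with the smaller MTU rejects packets, so `ip ospf mtu-ignore` helps only when it is configured on that side. With hypothetical values of 1500 on the router and 9000 on the firewall, the router rejects the firewall's DD packets while the firewall accepts the router's.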

Regards,

HA

CCIE#13029 (R&S, Security)

11-27-2013 11:56 PM

Hi,

We ran into this case last night: you may find that your OSPF packets are being dropped by the Palo Alto (have a look at your traffic logs). In that case, add an explicit policy rule that allows OSPF traffic.

Hope it helps, even though I'm sure you've found a solution since August!

08-31-2020 06:46 AM

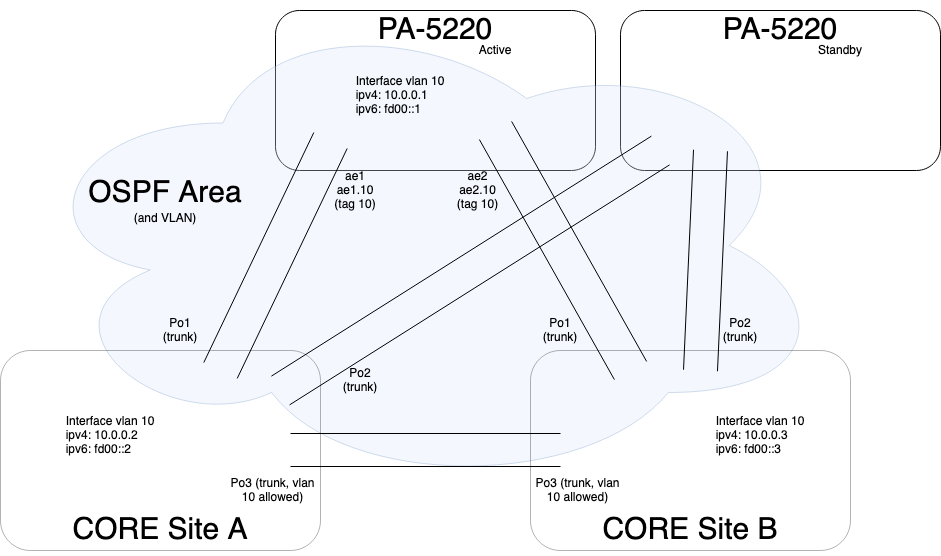

Sorry to bring up such an old topic, but I am encountering a similar problem. I have two PA-5220s (HA active/standby pair) and 4 Cisco C3850 switch pairs (4x2-way VSSs). PAN-OS = 9.0.9-h1, Cisco IOS 16.9.4. The entire setup is dual-stack IPv4/IPv6, and I am using OSPF for IPv4 and OSPFv3 for IPv6 because of a PA limitation on dual-stack OSPFv3. I am attaching a diagram with a sample configuration. The core switches host a total of 9 VRFs, each with its own uplink, and all uplinks are transported on the same Po/Ae trunks. Each VRF pair (core A, core B) has its own area (normal), with the firewall as the designated router (DR). The VRF OSPF processes have their priority set to 0, so they won't take part in the election. My failover process is not the "standard" one (i.e., making the device inactive); instead, I lower the standby firewall's priority and let it preempt the active one.

Now, if I force a failover, Core A does everything right, while Core B hits the very same errors: "Neighbor Down: Too many retransmissions" and "Neighbor Down: Ignore timer expired". I can fix it by disabling and re-enabling Core B's VLAN interfaces one at a time, as if there were some kind of bottleneck (even though we are talking about 2x10 Gbit links and 282 IPv4 routes). OSPF traffic is allowed intra-zone (each OSPF area = one firewall zone = one firewall VLAN interface + two core VLAN interfaces = a set of networks on the cores).

I removed the mtu-ignore command on the Cisco side (but I might add it back), and all OSPF routers have graceful restart enabled.

I have two questions:

1) Is there a way I can avoid these errors? Am I doing something wrong?

2) Could having LLDP enabled on both the firewalls and the switches interfere with all of this, by enabling a "higher-level" negotiation between core and firewall and disabling a "virtual MAC address" failover mechanism that would spare me the entire neighborship recalculation?
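For reference, this is how priority 0 keeps the cores out of the DR election described in this post. It is a simplified Python sketch of a fresh election on a segment with no incumbent DR or BDR (the full RFC 2328 procedure also selects a BDR and does not preempt a sitting DR); the router names and IDs are hypothetical.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Speaker:
    name: str
    router_id: str  # dotted-quad OSPF router ID
    priority: int   # OSPF interface priority, 0..255


def _rid(rid: str) -> tuple:
    # Compare router IDs numerically, octet by octet.
    return tuple(int(octet) for octet in rid.split("."))


def fresh_dr(speakers: list) -> Optional[Speaker]:
    """Simplified fresh election: highest priority wins, then highest router ID.

    Priority 0 makes a router ineligible. Ignores the RFC 2328 rule that a
    sitting DR is not preempted, and ignores BDR selection.
    """
    eligible = [s for s in speakers if s.priority > 0]
    if not eligible:
        return None
    return max(eligible, key=lambda s: (s.priority, _rid(s.router_id)))
```

Router IDs are compared numerically, which is why the sketch converts them to integer tuples rather than comparing the dotted strings.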

04-01-2021 03:14 AM

In my setup, this problem was (probably) caused by bug PAN-154899. Upgrading from 9.1.6 to 9.1.8 finally made the issue disappear.

04-01-2021 01:56 PM

Hello,

My recent OSPF issues came about when some network engineers sent my traffic down a WAN link with different MTUs. Might be worth a look.

Regards,

04-04-2021 01:20 AM - edited 04-04-2021 01:21 AM

The firewall was (and still is) directly connected to its L3 peers, which I manage as well. I tried the MTU fix, but it did not help. Reducing the amount of exchanged information (splitting areas and using NSSAs) helped a bit, making the issue less frequent, but it still occurred from time to time. After the upgrade to 9.1.8, the first two consecutive forced failovers went through without any issue at all.
