- Access exclusive content

- Connect with peers

- Share your expertise

- Find support resources

Click Preferences to customize your cookie settings.

Unlock your full community experience!

Separate Multivalued fields in XQL

- LIVEcommunity

- Discussions

- Security Operations

- Cortex XDR Discussions

- Separate Multivalued fields in XQL

- Subscribe to RSS Feed

- Mark Topic as New

- Mark Topic as Read

- Float this Topic for Current User

- Printer Friendly Page

Separate Multivalued fields in XQL

- Mark as New

- Subscribe to RSS Feed

- Permalink

10-30-2023 07:31 AM

Hi Guys,

I need some help.

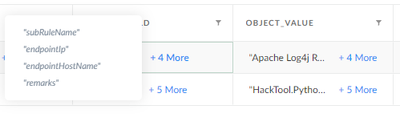

I have two fields with multiple values and I need to separate there values into separates lines.

My fields are like these:

My goal is to have 1 line with each of the results:

field = value

I tried using the arrayexpand command, but it ends up duplicating all rows of all values.

- Mark as New

- Subscribe to RSS Feed

- Permalink

10-30-2023 08:46 AM

Hi @WilliamValente

We may need the full XQL to understand the cascading stages more, but you can try the below options and let me know if any works for you:

1- If the values are duplicate in the field, you can limit the output of the arrayexpand stage by using the limit switch as to one output for example , something like this

| arrayexpand Object_Values limit 1

2- The second option here (but will show the values separated in one line)is to use the “arraystring” function, so something like this (where we use comma for example to separate the values):

| alter hostname = arraystring (Object_Values, ", ")

I hope that helps and shed some light!

Please feel free to mark this as a solution if it helps resolve the request

Thanks

Z

- Mark as New

- Subscribe to RSS Feed

- Permalink

10-30-2023 09:21 AM

Hi Zarnous,

Thanks for your help.

I tried your query, but It is not the result that I want.

The command arrayexpand with limit 1, return only the first value.

The command arraystring just concatenate the values with ",". I need each line with field = value.

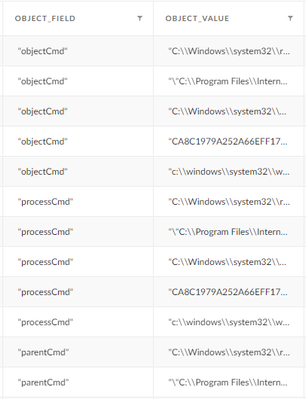

I tried the command arryexpand without limit, they duplicate the value, like this:

My XQL is:

dataset = trend

| alter alert_name = json_extract(_alert_data, "$.alert_name"),

priority_score = json_extract(_alert_data, "$.trendmicrovisiononexdrpriorityscore"),

alert_description = json_extract(_alert_data, "$.alert_description"),

investigation_status = json_extract(_alert_data, "$.trendmicrovisiononexdrinvestigationstatus"),

impact_scope = regextract(impactScope, "\"entityValue\\":([^,]+)"),

impact_scope_name = regextract(impactScope, "\"name\\":([^,]+)"),

ips = regextract(impactScope, "\"ips\\":([^,]+)"),

mitretechniqueid = regextract(matchedrules, "\"mitreTechniqueIds\\":([^,]+)"),

object_field = regextract(indicators, "\"field\\":([^,]+)"),

object_value = regextract(indicators, "\"value\\":([^,]+)")

| arrayexpand object_field

| arrayexpand object_value

| fields _time, severity, alert_name, priority_score, investigation_status, mitretechniqueid, impact_scope_name, description, ips, object_field, object_value, id, workbenchLink

- Mark as New

- Subscribe to RSS Feed

- Permalink

10-30-2023 10:29 AM

Thank you for sharing the query, unfortunately i cant replicate it in my tenant since you are using a custom dataset that i dont have, but looking at the query logic, and specially the fields

object_field = regextract(indicators, "\"field\\":([^,]+)"),

object_value = regextract(indicators, "\"value\\":([^,]+)")

I see they are extracted in JSON Like format and i also see that it is possible to have the same object field as associated with one or more object value, i can see that as an expected query result , hence seeing multiple values matching same field, otherwise if you try to dedup the filed you will loose lots of the values associated with it.

Please correct me if i misunderstood the use case here!

Thanks

Z

- 3129 Views

- 3 replies

- 0 Likes

Show your appreciation!

Click Accept as Solution to acknowledge that the answer to your question has been provided.

The button appears next to the replies on topics you’ve started. The member who gave the solution and all future visitors to this topic will appreciate it!

These simple actions take just seconds of your time, but go a long way in showing appreciation for community members and the LIVEcommunity as a whole!

The LIVEcommunity thanks you for your participation!

- Cortex XQL "Let" replacement in Cortex XDR Discussions

- Cortex XDR PoC: Monitoring Malicious Chrome Extensions in Cortex XDR Discussions

- Cortex XDR PoC Lab ft. CVE-2021-3560 in Cortex XDR Discussions

- DTRH: CIS Benchmarking - 3rd Party Data Ingestion | Data Parsing | Widgets & Dashboards in Cortex XDR Discussions