Aggregate Ethernet Trunked Traffic in a VWire


10-17-2015 07:54 AM

Hi Team,

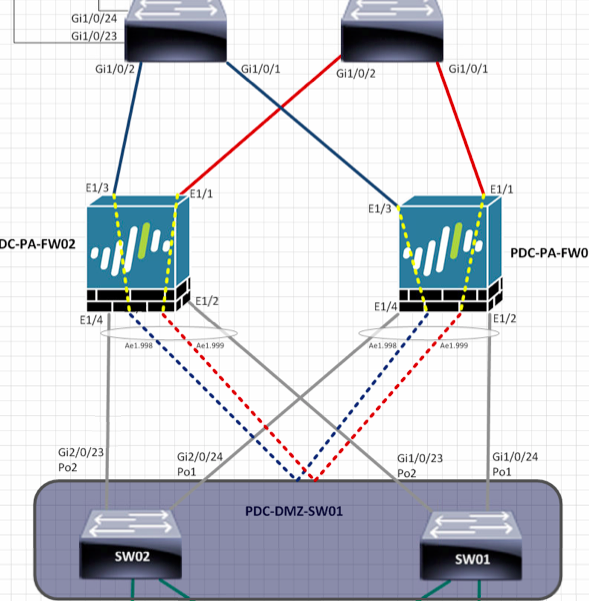

I was wondering whether the setup below is achievable. I plan to deploy vwires for it. The upstream switches are Cisco, and so are the downstream switches (the downstream switches are stacked, so logically one switch). Red indicates one VLAN, and blue another. We are planning to create an aggregate Ethernet interface with subinterfaces and have each vwire map a physical interface to a subinterface. The downstream Cisco switches will trunk the VLANs to the Palo Alto, i.e. VLAN red and VLAN blue.

I want to know where to specify the VLAN tag, and whether or not I have to put the parent ae interface into a zone and a vwire.

Thank you

Bilal


10-17-2015 08:06 AM

A vwire is like a tube: it has two endpoints. Traffic that goes in one end has to come out the other.

So you can add only two interfaces to a single vwire.

You should think about setting those interfaces up in Layer 2 mode.

There you can have more than two and can play around with VLAN tags.

Palo Alto Networks certified from 2011


10-17-2015 10:13 AM - edited 10-17-2015 11:51 AM

Hi Raido, thank you for your reply. I will be adding two interfaces to a single vwire. Maybe I didn't explain it properly.

vwire 1 - E1/1 mapped to ae1.999

vwire 2 - E1/3 mapped to ae1.998
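The planned mapping can be written down as data and checked against the "two endpoints per vwire" rule mentioned earlier in the thread. This is only a minimal Python sketch: the `VWire` class and the `parent_of` and `validate` helpers are hypothetical names made up for illustration, not PAN-OS objects or API calls, and the sketch says nothing about where the VLAN tag is configured.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class VWire:
    """Hypothetical model of a vwire: a name plus its endpoint interfaces."""
    name: str
    endpoints: tuple


def parent_of(interface: str) -> str:
    """Return the parent of a subinterface name, e.g. 'ae1.999' -> 'ae1'."""
    return interface.split(".", 1)[0]


def validate(vwires):
    """Collect violations: not exactly two endpoints, or an interface reused."""
    errors = []
    owner = {}  # interface name -> name of the vwire that already uses it
    for vw in vwires:
        if len(vw.endpoints) != 2:
            errors.append(f"{vw.name}: needs exactly 2 endpoints, has {len(vw.endpoints)}")
        for ep in vw.endpoints:
            if ep in owner:
                errors.append(f"{ep}: already used by {owner[ep]}")
            else:
                owner[ep] = vw.name
    return errors


# The two vwires described in this post.
planned = [
    VWire("vwire 1", ("E1/1", "ae1.999")),
    VWire("vwire 2", ("E1/3", "ae1.998")),
]
```

Calling `validate(planned)` returns an empty list for this plan, since each vwire has two endpoints and no interface is shared; both subinterfaces hang off the same parent, `ae1`.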

My question is: since the ae interface is a trunk, where do I specify the tag (I assume on the subinterface and not on the actual vwire), and do I have to make the parent interface (ae1) part of a zone and of a "parent vwire"?

I've tried to get clues from the article below, but there is no comprehensive document from Palo Alto covering this design.


10-17-2015 11:46 AM

Even though it's possible to create ae (aggregate) links in vwires, the simplest, most straightforward way to configure what you need is to negotiate the aggregation between your upstream and downstream switches. If they use LACP (Layer 2), the vwire should just forward those packets, and the aggregate will negotiate correctly between the switches.

In the PA configuration you then only need to configure your links as single vwires and mirror the configuration between them.

The tricky part is asymmetric traffic. You can mitigate some of it on the switches if they support symmetric LAG hashing (so that individual flows are "pinned" to a single virtual wire), which avoids overloading your HA3 link. Also be careful to use the same zones in the mirrored configuration: if you use "external-vlan2" for VLAN 2 on physical link eth1, use the same zone for the same VLAN on eth2.
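To illustrate what symmetric LAG hashing means, here is a minimal Python sketch. It is an assumption-laden illustration, not any switch vendor's actual algorithm: the `pick_link` function and the CRC32-based hash are made up for the example. The idea is only that the two endpoints of a flow are put in a canonical order before hashing, so both directions of the flow select the same member link.

```python
import zlib


def pick_link(src_ip: str, dst_ip: str, src_port: int, dst_port: int, n_links: int) -> int:
    """Pick a LAG member index that is the same for both directions of a flow.

    Hypothetical helper: real switches use their own vendor-specific hash.
    """
    # Sort the two (ip, port) endpoints so A->B and B->A build the same key.
    low, high = sorted([(src_ip, src_port), (dst_ip, dst_port)])
    key = f"{low[0]}:{low[1]}|{high[0]}:{high[1]}".encode()
    return zlib.crc32(key) % n_links


# Forward and return direction of the same flow over a 2-link aggregate.
forward = pick_link("10.0.0.1", "10.0.0.2", 51000, 443, 2)
reverse = pick_link("10.0.0.2", "10.0.0.1", 443, 51000, 2)
```

Here `forward` and `reverse` are equal by construction, which is the "pinning" property: a flow and its return traffic stay on one member link, and therefore on one virtual wire.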

Hope it helps,

Regards,

Gerardo.


10-17-2015 12:27 PM

Hi Gerardo. The problem is that we cannot do it the straightforward way, which I know for sure works, i.e. dedicating two separate links to the vwire. The upstream switches provide one link per Palo Alto FW; red and blue are different services. Downstream we have a switch stack. Rather than dedicating one link (down to one switch), we'd rather split this physically across different switches that are logically the same switch. This is where we want to do LACP aggregation (due to cabling constraints).

If we were to do it the straightforward way, I would have to double up on links both upstream and downstream, and to me it just does not make sense to do it like that.

I have no worries about asymmetric traffic. I have tested this, and MAC addresses in the switching infrastructure are only learnt via the active FW. The passive node does not pass traffic, but its physical interfaces remain up.
